In this blog post, we will demonstrate how to build a complete GKE security stack for anomaly detection and for preventing container runtime security threats. We will integrate the Falco runtime security engine with Google Cloud Functions and Pub/Sub.

This GKE security stack is composed of two different deployments:

- Kubernetes Falco agents: Falco is installed in your cluster to collect runtime security events directly from the Kubernetes nodes and detect anomalous behavior.

- Serverless / Cloud playbooks: A set of Google Cloud Functions that will execute security playbooks (like killing or isolating a suspicious pod) when triggered.

These two pieces will communicate using Google Pub/Sub.

Before describing the complete GKE security stack, let's learn more about the building blocks that we intend to use.

Introducing Falco for GKE security.

Falco is an open source project for container security for Cloud Native platforms such as Kubernetes. Originally developed at Sysdig, it is now an independent project under the CNCF umbrella.

Leveraging Sysdig's open source Linux kernel instrumentation, Falco gains deep insight into system behavior. The rules engine can then detect abnormal activity and runtime security threats in applications, containers, the underlying host, and the container platform itself.

The Falco engine dynamically loads a set of default and user-defined security rules described in YAML files. Here is a simple example of a Falco runtime security rule targeting containers:

    - rule: Terminal shell in container
      desc: A shell was used as the entrypoint/exec point into a container with an attached terminal.
      condition: >
        spawned_process and container
        and shell_procs and proc.tty != 0
        and container_entrypoint
      output: >
        A shell was spawned in a container with an attached terminal (user=%user.name %container.info
        shell=%proc.name parent=%proc.pname cmdline=%proc.cmdline terminal=%proc.tty)
      priority: NOTICE
      tags: [container, shell]

The rule above detects and reports any attempt to attach a terminal shell to a running container. This shouldn't happen (or at least not in production, user-facing infrastructure). Fully explaining the Falco rule format and its capabilities is out of scope for this article, so we are just going to use the default security ruleset. Keep in mind, though, that you can create and customize your own container security rules.

Also note that Falco does not rely only on the kernel instrumentation data source. It can also consume security-related events from other runtime sources, like the Kubernetes Audit Log. Here is an example of a Falco rule designed to detect unwanted Kubernetes ClusterRole tampering:

    - rule: System ClusterRole Modified/Deleted
      desc: Detect any attempt to modify/delete a ClusterRole/Role starting with system
      condition: kevt and (role or clusterrole) and (kmodify or kdelete) and (ka.target.name startswith "system:") and ka.target.name!="system:coredns"
      output: System ClusterRole/Role modified or deleted (user=%ka.user.name role=%ka.target.name ns=%ka.target.namespace action=%ka.verb)
      priority: WARNING
      source: k8s_audit
      tags: [k8s]
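To make the condition of this rule easier to read, here is a rough Python paraphrase of it, applied to a Kubernetes audit event. This is only a sketch and not Falco code: the function name is hypothetical, and the expansions of the `kmodify`, `kdelete`, `role` and `clusterrole` macros are our assumptions about what those macros check.

```python
# Simplified, hypothetical stand-in for the rule's condition; not Falco code.
MODIFY_VERBS = {"create", "update", "patch"}  # assumed meaning of kmodify
DELETE_VERBS = {"delete"}                     # assumed meaning of kdelete
ROLE_RESOURCES = {"roles", "clusterroles"}    # assumed meaning of role / clusterrole


def system_role_tampered(audit_event: dict) -> bool:
    """Return True when the audit event would match the paraphrased condition."""
    ref = audit_event.get("objectRef", {})
    name = ref.get("name", "")
    return (
        ref.get("resource") in ROLE_RESOURCES
        and audit_event.get("verb") in MODIFY_VERBS | DELETE_VERBS
        and name.startswith("system:")
        and name != "system:coredns"  # explicit exception made by the rule
    )
```

Under these assumptions, deleting the `system:node` ClusterRole would match, while patching `system:coredns` would not.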

GKE security stack: Falco, Pub/Sub and Cloud Functions.

In order to extend Falco, we are going to integrate two Google Cloud technologies as part of our stack: Google Cloud Functions and Google Cloud Pub/Sub.

Google Cloud Functions is a lightweight compute solution for developers to create single-purpose, stand-alone functions that respond to Cloud events without the need to manage a server or runtime environment. We will use this serverless approach to implement security playbooks, in other words, automated remediation actions based on the event data and container metadata coming from the Falco engine.

Asynchronous communication between a set of producers and consumers requires an efficient and reliable messaging middleware. These solutions are commonly known as Publish/Subscribe messaging (PubSub). By using Google Cloud Pub/Sub we can abstract away all the complexities associated with the communication of our two major building blocks.

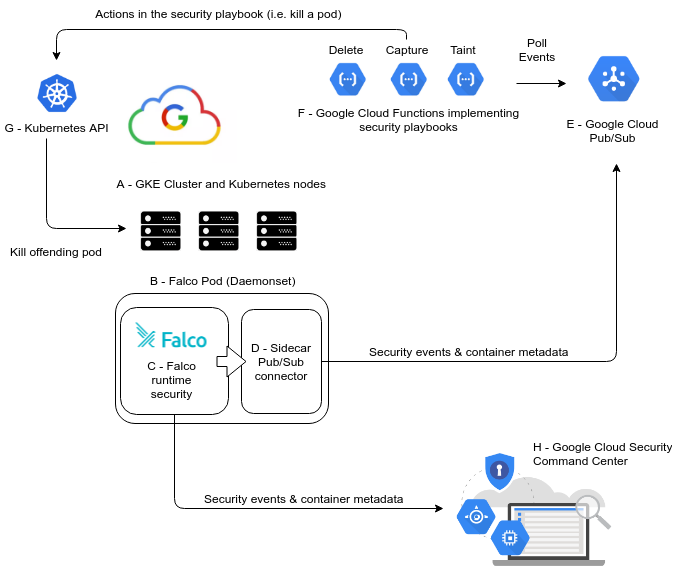

We can now put everything together using the following diagram:

Listed by alphabetical order:

A – GKE cluster and Kubernetes nodes

This is the Kubernetes cluster that you want to monitor and secure. This is where the entire GKE security workflow starts (detecting a security threat) and ends (performing a remediation action).

B – Falco deployment using a DaemonSet

Falco will be deployed as a Kubernetes DaemonSet, which means that you will have a Falco pod running on each Kubernetes node. This pod is composed of two containers, "C" and "D".

C – Falco Cloud Native runtime security engine

This is the Falco security engine described above, running inside a container. It continuously monitors host and container activity and forwards the triggered security events to container "D".

Let's take a look at this security event message generated by Falco:

    {
      "output": "17:10:29.724747835: Notice A shell was spawned in a container with an attached terminal (user=root nginx1 (id=06b29170462e) shell=bash parent= cmdline=bash terminal=34816)",
      "priority": "Notice",
      "rule": "Terminal shell in container",
      "time": "2019-03-28T17:10:29.724747835Z",
      "output_fields": {
        "container.id": "06b29170462e",
        "container.name": "nginx",
        "evt.time": 1553793029724747835,
        "proc.cmdline": "bash ",
        "proc.name": "bash",
        "proc.pname": null,
        "proc.tty": 34816,
        "user.name": "root"
      }
    }

We have all the relevant pieces of information that we need:

- The security rule that was fired

- Affected container (and its ID)

- Timestamp for the event

- Offending user and process (name and command line)

These events are then passed to the connector sidecar container "D".
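As an illustration of how a consumer might pull those pieces of information out of the payload, here is a minimal Python sketch. The `summarize` helper and the keys of the dictionary it returns are hypothetical names chosen for this example, and the long `output` string is left out of the sample for brevity.

```python
import json
from datetime import datetime, timezone

# The event shown above, without its "output" string.
RAW_EVENT = """
{
  "priority": "Notice",
  "rule": "Terminal shell in container",
  "time": "2019-03-28T17:10:29.724747835Z",
  "output_fields": {
    "container.id": "06b29170462e",
    "container.name": "nginx",
    "evt.time": 1553793029724747835,
    "proc.cmdline": "bash ",
    "proc.name": "bash",
    "proc.pname": null,
    "proc.tty": 34816,
    "user.name": "root"
  }
}
"""


def summarize(raw: str) -> dict:
    """Extract the rule, container, user, process and timestamp from an event."""
    event = json.loads(raw)
    fields = event["output_fields"]
    # evt.time is expressed in nanoseconds since the Unix epoch.
    seconds = fields["evt.time"] // 1_000_000_000
    return {
        "rule": event["rule"],
        "priority": event["priority"],
        "container_id": fields["container.id"],
        "user": fields["user.name"],
        "process": fields["proc.name"],
        "when": datetime.fromtimestamp(seconds, tz=timezone.utc).isoformat(),
    }


if __name__ == "__main__":
    print(summarize(RAW_EVENT))
```

Converting `evt.time` this way yields the same second as the human-readable `time` field, which is a handy sanity check when you write your own consumers.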

D – Falco Pub/Sub sidecar connector

This container receives and processes the JSON payloads for the security events before sending them to the Google Cloud Pub/Sub queue.
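To give an idea of what this kind of processing could look like, here is a small Python sketch. It is not the actual connector code: `forward_events` is a hypothetical helper, and `publish` is a placeholder callable standing in for whatever client really sends the bytes to the Pub/Sub topic.

```python
import json
from typing import Callable, Iterable


def forward_events(lines: Iterable[str], publish: Callable[[bytes], None]) -> int:
    """Publish every line that parses as a Falco JSON event; return how many were sent."""
    sent = 0
    for line in lines:
        line = line.strip()
        if not line:
            continue
        try:
            event = json.loads(line)
        except json.JSONDecodeError:
            continue  # skip anything that is not valid JSON
        if not isinstance(event, dict) or "rule" not in event:
            continue  # skip JSON values that do not look like a Falco event
        # Pub/Sub message bodies are raw bytes, so the event is re-serialized as UTF-8 JSON.
        publish(json.dumps(event, separators=(",", ":")).encode("utf-8"))
        sent += 1
    return sent
```

Passing the publisher in as a callable keeps the sketch independent of any particular client library and makes it easy to exercise with a plain list, for example `forward_events(lines, collected.append)`.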

E – Google Cloud Pub/Sub message broker

This middleware piece manages the connection between the Falco event emitters (B) and the serverless function consumers (F).

Thanks to this mediation, we can replace either of the two parts independently, even if the other end is not yet ready to send or accept messages. It also lets us plug in additional event consumers with different purposes in the future, if we want to do so.

F – Security playbooks implemented as Google Cloud Functions

The Google Cloud Functions bring us the opportunity to implement security functions without worrying about supporting infrastructure or maintenance.

They can implement different security playbooks:

- Kill the offending pod

- Create a Sysdig capture that will allow you to perform advanced forensics over the security incident

- Isolate the pod from the network

- Forward a Slack notification

- Taint the node where the security event manifested

- … more to come.

These functions are subscribed to a Pub/Sub topic. Their operational workflow can be summarized as:

- The PubSub subscription hook notifies the function(s) whenever there is a new security event waiting in the pipe

- Every individual function parses the event and decides whether to fire the response depending on the event type and container metadata

- If the function is triggered, it will contact the Kubernetes API (G) and perform the configured remediation actions
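The workflow above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the code shipped in the repository: we assume the Pub/Sub message body is the Falco JSON event, that the `SUBSCRIBED_ALERTS` environment variable holds a comma-separated list of alert keys, and that a key is built as `falco.<priority>.<rule name in snake case>`. The `alert_key` helper is a hypothetical name.

```python
import base64
import json
import os
from typing import Optional


def alert_key(falco_event: dict) -> str:
    """Build a key such as falco.notice.terminal_shell_in_container (assumed format)."""
    rule = "_".join(falco_event["rule"].lower().split())
    return f"falco.{falco_event['priority'].lower()}.{rule}"


def handler(event: dict, context) -> Optional[str]:
    """Entry point of a Pub/Sub-triggered background function."""
    # Background functions receive the Pub/Sub message body base64-encoded in event["data"].
    falco_event = json.loads(base64.b64decode(event["data"]).decode("utf-8"))

    subscribed = {
        key.strip()
        for key in os.environ.get("SUBSCRIBED_ALERTS", "").split(",")
        if key.strip()
    }
    if alert_key(falco_event) not in subscribed:
        return None  # not an alert this playbook cares about

    # A real playbook would now call the Kubernetes API; here we only return the target pod.
    return falco_event.get("output_fields", {}).get("k8s.pod.name")
```

Keeping the decision (is this alert subscribed?) separate from the action makes each playbook easy to test without a cluster.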

G – Kubernetes API server

GKE provides a Kubernetes API endpoint that will authorize and accept the Google Cloud Function request. This way, the actions will be executed in the cluster (i.e., the offending pod will be effectively killed), closing the cycle.
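As a rough sketch of that last step, the following Python function deletes the pod named in a Falco event. It is an illustration only: `remediate` is a hypothetical name, and `core_v1` is assumed to be an object exposing `delete_namespaced_pod(name=..., namespace=...)`, which is the method offered by `CoreV1Api` in the official Kubernetes Python client. The `default` namespace is also an assumption, since the event may not carry one.

```python
from typing import Any, Optional


def remediate(falco_event: dict, core_v1: Any, namespace: str = "default") -> Optional[str]:
    """Delete the pod referenced by the event; return its name, or None if there is none."""
    pod = falco_event.get("output_fields", {}).get("k8s.pod.name")
    if not pod:
        return None  # host-level event or missing metadata: nothing to delete
    # The API server authorizes the request and terminates the pod.
    core_v1.delete_namespaced_pod(name=pod, namespace=namespace)
    return pod
```

Injecting the API object keeps the function trivial to test with a stub, and lets you swap in a dry-run client while you are still tuning which alerts should trigger a deletion.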

H – Google Cloud Security Command Center

The Falco security engine can also forward security events and alerts once they are translated into Google Cloud Security Command Center findings. With just this simple integration, GCSCC will double as the SIEM for your Falco runtime engine.

How to implement GKE security with Falco.

Enough theory! Let's install the GKE security stack already.

You need to have:

- A Kubernetes cluster, created using the Google Kubernetes Engine

- The gcloud CLI tool, initialized and configured to connect to your Google Cloud account

- The kubectl CLI tool, configured to interact with your GKE Kubernetes cluster

- terraform available, if you are using the automation we have developed to quickly create the Google Pub/Sub topic

- A clone of the Kubernetes Response Engine repository

Creating needed infrastructure on GKE

At Sysdig, we are obsessed with automation. Creating a Pub/Sub topic is not a big deal, but we still wrote a Terraform manifest to automate the task.

First of all, clone the repository:

$ git clone https://github.com/falcosecurity/kubernetes-response-engine.git

Next, go to the deployment directory, enter the google-cloud directory, and just type make to deploy the Pub/Sub topic.

$ cd kubernetes-response-engine/deployment/google-cloud

$ make

Of course, you will need to provide the required Google Cloud project settings. We recommend using environment variables; with something like direnv, you can keep the values around for later use. If you feel more comfortable using the Google Cloud Console instead, that will also work fine.

Installing Falco on GKE

As we mentioned before, this software stack is composed of two main building blocks. We will start by installing the software that lives inside the cluster, the Falco DaemonSet.

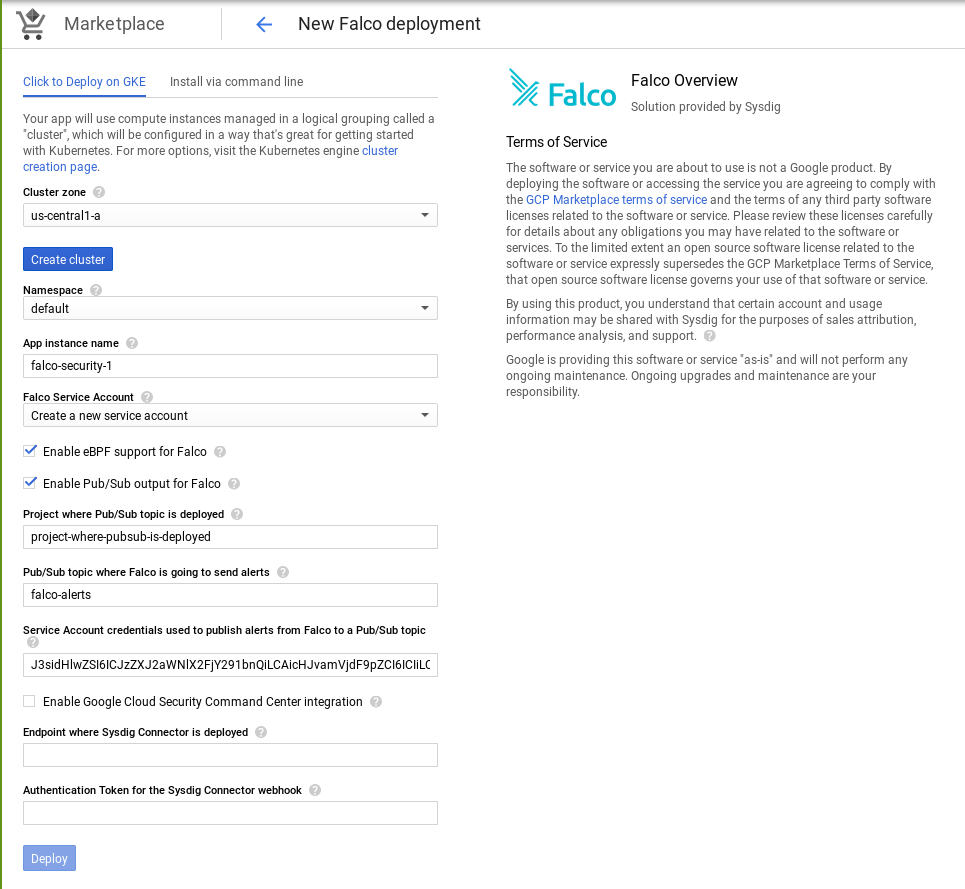

We have several options to deploy Falco on GKE but this time, we want to do it using the Google Marketplace. Once we access to the Marketplace and select the Falco Kubernetes application, we can configure Falco to be deployed in our GKE cluster:

We need to put attention on the following fields:

- Enable Pub/Sub output for Falco: It must be enabled in order to allow Falco to send alerts to Pub/Sub.

- Pub/Sub topic where Falco is going to send alerts: The name of the Pub/Sub topic that will connect Falco with the Google Cloud Functions. You can use any name, for example falco-pubsub. Remember the name you use here, because you will need to connect the functions to the same topic.

- Project where Pub/Sub topic is deployed: The Google Cloud project that is hosting your Kubernetes cluster. You can check the project ID in your Google Cloud web console.

- Service Account credentials used to publish alerts from Falco to a Pub/Sub topic: In order to authenticate with Google Cloud services, we need to provide an identity. In this case, we need authorization to send messages to a Pub/Sub topic. You can find more information about how to create and manage service account keys in the Google Cloud documentation. Once you have the JSON key file, you can paste its contents into the field, either as-is or base64-encoded.

A few minutes after deploying Falco, you will be able to see its pods running (one per node):

    $ kubectl get pods
    NAME                   READY   STATUS    RESTARTS   AGE
    sysdig-falco-1-mwv2j   2/2     Running   1          2m
    sysdig-falco-1-t2prc   2/2     Running   1          2m
    sysdig-falco-1-zldz2   2/2     Running   1          2m

As you can see, there are two containers per pod (READY column). The in-cluster part is ready. Now let's deploy the serverless entities.

Deploying security playbooks for GKE as Google Cloud Functions

Since we have already cloned the kubernetes-response-engine repository, we just need to change to its playbooks directory:

$ cd kubernetes-response-engine/playbooks

You can list the different serverless functions available in this repository:

$ ls functions/

capture.py delete.py demisto.py isolate.py phantom.py slack.py taint.py

We are going to deploy one of these functions to check that our GKE security stack works. A full list of the available functions, with their purpose and input parameters, is available in the kubernetes-response-engine repository.

The function we are going to deploy is the deleteone. We provide a shell script that will take care of this, you only have to type one command:

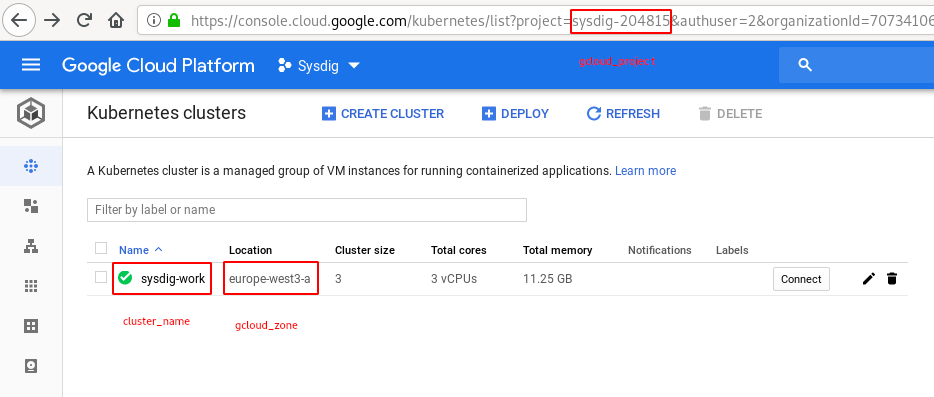

$ ./deploy_playbook_gke -p delete -t falco-alerts -s falco.notice.terminal_shell_in_container -k -z -n

cluster_name: The Kubernetes cluster name which we are going to securize.gcloud_zone: The Google Cloud default zone to manage resources in. You can use the same zone that the one where your Kubernetes cluster is deployed,gcloud_project:The Google Cloud project that is hosting your Kubernetes cluster, you can check the project ID from your Google Cloud web console.

You can find all these fields in your Google Cloud Console:

And when you run the script you should see something like:

    Fetching cluster endpoint and auth data.
    kubeconfig entry generated for sysdig-work.
    Deploying function (may take a while - up to 2 minutes)...done.
    availableMemoryMb: 256
    entryPoint: handler
    environmentVariables:
      KUBECONFIG: kubeconfig
      KUBERNETES_LOAD_KUBE_CONFIG: '1'
      SUBSCRIBED_ALERTS: falco.notice.terminal_shell_in_container
    eventTrigger:
      eventType: google.pubsub.topic.publish
      failurePolicy: {}
      resource: projects//topics/falco-alerts
      service: pubsub.googleapis.com
    labels:
      deployment-tool: cli-gcloud
    name: projects//locations/us-central1/functions/delete
    runtime: python37
    serviceAccountEmail: @appspot.gserviceaccount.com
    sourceUploadUrl: [...]
    status: ACTIVE
    timeout: 60s
    updateTime: '2019-04-10T15:17:24Z'
    versionId: '1'

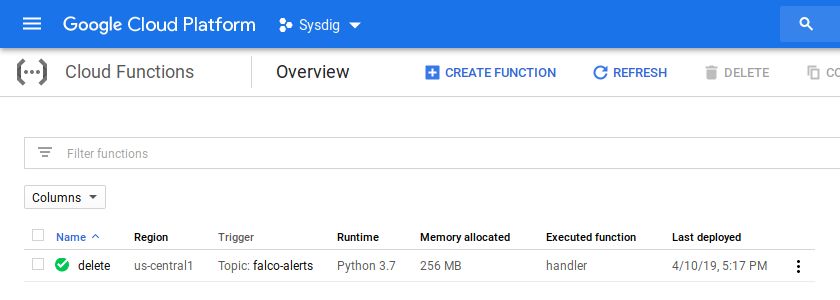

And that's all, you should be able to see it in Google Console:

GKE security playbooks in action!

To test these functions, you can create a random victim pod:

    $ kubectl run --generator=run-pod/v1 nginx-falco --image=nginx
    pod/nginx-falco created
    $ kubectl get pods
    NAME          READY   STATUS    RESTARTS   AGE
    nginx-falco   1/1     Running   0          5s

Now, we simulate a security incident by spawning an interactive shell in this container:

$ kubectl exec -it nginx-falco bash

root@nginx-falco:/# command terminated with exit code 137

As you can see, the bash process and the pod itself were terminated.

Let's check the logs produced by the Falco engine:

$ kubectl logs -l role=security -c falco | grep "nginx-falco"

{"output":"16:16:47.908565738: Notice A shell was spawned in a container with an attached terminal (user=root k8s.pod=nginx-falco container=0042056722bb shell=bash parent= cmdline=bash terminal=34816)","priority":"Notice","rule":"Terminal shell in container","time":"2019-04-01T16:16:47.908565738Z", "output_fields": {"container.id":"0042056722bb","evt.time":1554135407908565738,"k8s.pod.name":"nginx-falco","proc.cmdline":"bash ","proc.name":"bash","proc.pname":null,"proc.tty":34816,"user.name":"root"}}

Now, click on your function name (delete) in the Google Cloud Console and check the logs associated with the function execution.

Our GKE security pipeline is working and we have full logs for the entire operation!

GKE Hackers, welcome to Falco :)

To keep this post from getting too long, we have used only the default set of Falco rules and the simple "terminal shell in container" example. But there is so much more you can do with the Falco engine:

- Targeting specific CVEs shortly after they have been published, immediately protecting your infrastructure.

- Parsing the Kubernetes audit log to detect abnormal operations at the cluster level, performed by the Kubernetes users or a service account related to a software entity.

- Writing your own security playbooks, extending the playbooks library and functions available in the Falco repository, and deploying them as serverless functions.

If you have made any modifications to this GKE security stack or are just using it and would like to share some feedback, we would love to hear about your experience!