
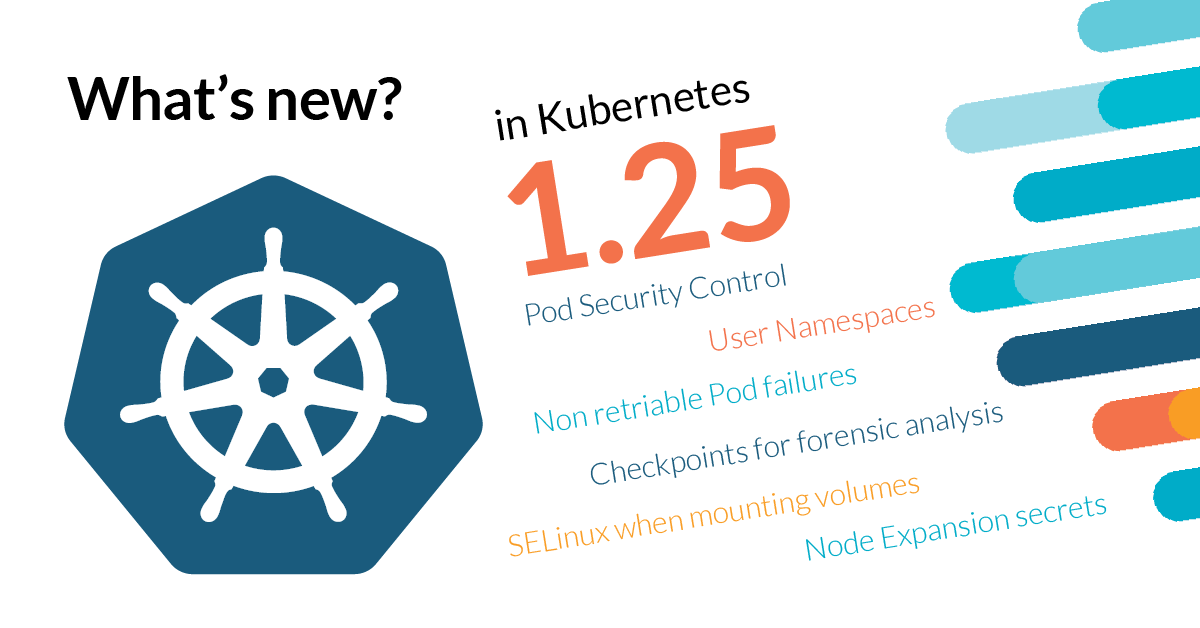

Kubernetes 1.25 is about to be released, and it comes packed with novelties! Where do we begin?

This release brings 40 enhancements, on par with the 46 in Kubernetes 1.24 and 56 in Kubernetes 1.22. Of those 40 enhancements, 8 are graduating to Stable, 21 are existing features that keep improving, and 11 are completely new.

Pod Security Policy (PSP) Removal

One of the most important changes in Kubernetes 1.25 is the removal of Pod Security Policy (PSP). PSP has been deprecated since Kubernetes 1.21 and has been the subject of many debates within the Kubernetes community.

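The replacement for PSP is Pod Security Admission (PSA), a built-in admission controller that enforces the Pod Security Standards (PSS). The standards define three levels (privileged, baseline, and restricted), and you choose a level per namespace by setting labels on it. This is far simpler than PSP: there are no policy objects to write and no RBAC bindings to decide which policy applies to which pod.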
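As a rough illustration of the label-based approach, the sketch below builds a Namespace manifest with Pod Security Admission labels. The `pod-security.kubernetes.io/<mode>` and `<mode>-version` label keys are the ones the built-in admission controller reads; the helper name `namespace_with_psa`, the namespace `demo-apps`, and the chosen levels are assumptions made up for this example.

```python
import json

# Label prefix read by the built-in Pod Security Admission controller.
PSA_PREFIX = "pod-security.kubernetes.io"
PSA_MODES = ("enforce", "audit", "warn")
PSS_LEVELS = ("privileged", "baseline", "restricted")


def namespace_with_psa(name, enforce="baseline", audit="restricted",
                       warn="restricted", version="v1.25"):
    """Build a Namespace manifest carrying Pod Security Admission labels.

    Hypothetical helper for illustration; the defaults are an assumption,
    not a recommendation from the article.
    """
    levels = {"enforce": enforce, "audit": audit, "warn": warn}
    labels = {}
    for mode in PSA_MODES:
        level = levels[mode]
        if level not in PSS_LEVELS:
            raise ValueError(f"unknown Pod Security Standards level: {level!r}")
        # One label selects the level, a second pins the policy version.
        labels[f"{PSA_PREFIX}/{mode}"] = level
        labels[f"{PSA_PREFIX}/{mode}-version"] = version
    return {
        "apiVersion": "v1",
        "kind": "Namespace",
        "metadata": {"name": name, "labels": labels},
    }


# "demo-apps" is a made-up namespace name.
manifest = namespace_with_psa("demo-apps")
print(json.dumps(manifest, indent=2))
```

Because kubectl accepts JSON as well as YAML, the printed manifest could be piped into `kubectl apply -f -`. Starting with `enforce` at baseline while `audit` and `warn` are set to restricted is one way to see what a stricter level would reject before enforcing it.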

The removal of PSP is a significant milestone in the Kubernetes project, as it finally replaces a complex and controversial feature with a simpler and more intuitive alternative.

CRI v1 Stable

The Container Runtime Interface (CRI) v1 is now stable in Kubernetes 1.25. The CRI defines how Kubernetes interacts with container runtimes. This is an important milestone, as it ensures that all container runtime implementations follow a common interface.

API Removals and Deprecations

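Kubernetes 1.25 removes several beta API versions that were already deprecated:

- The PodDisruptionBudget API policy/v1beta1 is removed. Migrate to policy/v1

- The HorizontalPodAutoscaler API autoscaling/v2beta1 is removed. Migrate to autoscaling/v2

- The CronJob API batch/v1beta1 is removed. Migrate to batch/v1

- The EndpointSlice API discovery.k8s.io/v1beta1 is removed. Migrate to discovery.k8s.io/v1

- The Event API events.k8s.io/v1beta1 is removed. Migrate to events.k8s.io/v1

- The RuntimeClass API node.k8s.io/v1beta1 is removed. Migrate to node.k8s.io/v1

The CustomResourceDefinition (apiextensions.k8s.io/v1beta1) and PriorityClass (scheduling.k8s.io/v1beta1) beta APIs were already removed in Kubernetes 1.22. If you still have manifests that use them, migrate to apiextensions.k8s.io/v1 and scheduling.k8s.io/v1.

Deprecated APIs that will be removed in Kubernetes 1.26:

- FlowSchema and PriorityLevelConfiguration v1beta1 (from flowcontrol.apiserver.k8s.io) - Migrate to v1beta2

- HorizontalPodAutoscaler v2beta2 (from autoscaling) - Migrate to v2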
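The migrations above lend themselves to a quick check of your manifests before upgrading. The following is a minimal sketch, not a complete tool: the `REPLACEMENTS` table is deliberately partial and should be extended from the official deprecation guide, the function name `find_outdated` and the sample manifest are invented for illustration, and the regex-based parsing assumes plain, unquoted top-level `apiVersion:` and `kind:` lines.

```python
import re

# Beta apiVersion/kind pairs mentioned in the text, mapped to the suggested
# replacement. Deliberately partial; extend it for your own cluster.
REPLACEMENTS = {
    ("policy/v1beta1", "PodDisruptionBudget"): "policy/v1",
    ("autoscaling/v2beta1", "HorizontalPodAutoscaler"): "autoscaling/v2",
    ("batch/v1beta1", "CronJob"): "batch/v1",
    ("events.k8s.io/v1beta1", "Event"): "events.k8s.io/v1",
    ("flowcontrol.apiserver.k8s.io/v1beta1", "FlowSchema"):
        "flowcontrol.apiserver.k8s.io/v1beta2",
}

# Only top-level keys start at column 0, so nested "kind:" fields are ignored.
API_VERSION_RE = re.compile(r"^apiVersion:[ \t]*(\S+)[ \t]*$", re.MULTILINE)
KIND_RE = re.compile(r"^kind:[ \t]*(\S+)[ \t]*$", re.MULTILINE)
DOC_SEPARATOR_RE = re.compile(r"^---[ \t]*$", re.MULTILINE)


def find_outdated(manifest_text):
    """Return (kind, old_api_version, new_api_version) for each outdated document."""
    findings = []
    for doc in DOC_SEPARATOR_RE.split(manifest_text):
        api = API_VERSION_RE.search(doc)
        kind = KIND_RE.search(doc)
        if not (api and kind):
            continue  # empty document or not a Kubernetes object
        key = (api.group(1), kind.group(1))
        if key in REPLACEMENTS:
            findings.append((kind.group(1), api.group(1), REPLACEMENTS[key]))
    return findings


# Made-up multi-document manifest used only to exercise the function.
SAMPLE = """\
apiVersion: batch/v1beta1
kind: CronJob
metadata:
  name: nightly-report
---
apiVersion: policy/v1
kind: PodDisruptionBudget
metadata:
  name: web-pdb
---
apiVersion: autoscaling/v2beta1
kind: HorizontalPodAutoscaler
metadata:
  name: web-hpa
"""

for kind, old, new in find_outdated(SAMPLE):
    print(f"{kind}: {old} -> {new}")
```

Note that changing the `apiVersion` string is not always enough: some stable versions also rename or restructure fields, so each flagged object still needs a review against the new schema.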

Image Registry and OCI Image Spec

Kubernetes 1.25 brings support for OCI image configuration. This means better interoperability with OCI-compliant image registries, as Kubernetes now recognizes OCI image metadata.

CSI Node Expansion Secret

The CSI (Container Storage Interface) node expansion secret feature lets CSI drivers receive secrets during the node-side step of a volume expansion. This is particularly useful when the CSI driver needs authentication credentials to complete the expansion operation, for example when resizing an encrypted volume.
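To make this more concrete, here is a hedged sketch of a StorageClass that points the driver at a secret for node-side expansion. The two `csi.storage.k8s.io/node-expand-secret-*` parameter keys are, to the best of my understanding, the ones this feature uses; the provisioner `csi.example.com`, the secret name and namespace, and the helper function are all hypothetical. In 1.25 the feature is also expected to sit behind a feature gate, so treat this as an outline rather than a ready-to-apply manifest.

```python
import json


def storage_class_with_node_expand_secret(name, provisioner,
                                          secret_name, secret_namespace):
    """Build a StorageClass whose parameters reference a node expansion secret.

    Hypothetical helper for illustration only.
    """
    return {
        "apiVersion": "storage.k8s.io/v1",
        "kind": "StorageClass",
        "metadata": {"name": name},
        "provisioner": provisioner,
        # Expansion has to be allowed for the secret to ever be used.
        "allowVolumeExpansion": True,
        "parameters": {
            "csi.storage.k8s.io/node-expand-secret-name": secret_name,
            "csi.storage.k8s.io/node-expand-secret-namespace": secret_namespace,
        },
    }


# All names below are made up for the example.
storage_class = storage_class_with_node_expand_secret(
    name="encrypted-block",
    provisioner="csi.example.com",
    secret_name="expand-credentials",
    secret_namespace="storage-system",
)
print(json.dumps(storage_class, indent=2))
```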

Kubelet Windows Service Support

Kubelet can now run as a Windows service. This simplifies the deployment and management of Kubernetes on Windows nodes.

Windows Pod Priority and Preemption

Pod Priority and Preemption support for Windows pods is now generally available. This allows Windows pods to specify their priority levels and allows the scheduler to preempt lower-priority pods if needed.

Device Plugin Improvements

Device plugins, such as for GPUs and other accelerators, can now be installed and managed more easily in Kubernetes 1.25.

AppArmor Support

Kubernetes 1.25 adds support for AppArmor on Linux nodes. AppArmor is a Linux security module that can be used to restrict the capabilities of containers.

etcd Improvements

etcd improvements in Kubernetes 1.25 include:

- etcd version 3.5 is now the minimum required version

- etcd now supports cluster-scoped APIServer identity (improved security)

Load Balancer Class

The loadBalancerClass field on Service objects (spec.loadBalancerClass) allows for more granular control over load balancer provisioning: it names the load balancer implementation that should handle a Service of type LoadBalancer. This is helpful in clusters where different load balancers are used for different services.
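A minimal sketch of what this looks like on a Service follows. `spec.loadBalancerClass` is the real field name; the class value `example.com/internal-vip`, the service name, the selector, and the helper function are assumptions chosen for the example.

```python
import json


def load_balancer_service(name, selector, port, target_port, lb_class):
    """Build a Service of type LoadBalancer that requests a specific class.

    Hypothetical helper for illustration only.
    """
    return {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {"name": name},
        "spec": {
            "type": "LoadBalancer",
            # Only the implementation that owns this class should act on it.
            "loadBalancerClass": lb_class,
            "selector": selector,
            "ports": [
                {"port": port, "targetPort": target_port, "protocol": "TCP"}
            ],
        },
    }


# "example.com/internal-vip" is a made-up class name.
service = load_balancer_service(
    name="billing-api",
    selector={"app": "billing-api"},
    port=443,
    target_port=8443,
    lb_class="example.com/internal-vip",
)
print(json.dumps(service, indent=2))
```

When the field is left unset, the cluster's default load balancer implementation handles the Service, so existing manifests keep working unchanged.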

Ingress IngressClass Parameters

The Ingress API now supports parameters on IngressClass, enabling more sophisticated configuration options for ingress controllers.
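As an illustration, the sketch below builds an IngressClass whose `spec.parameters` references a controller-specific configuration object. The `apiGroup`, `kind`, `name`, `scope`, and `namespace` fields belong to the IngressClass API; the controller name, the `IngressParameters` kind, its API group, and the helper function are hypothetical, since the parameters object is defined by whichever ingress controller you run.

```python
import json


def ingress_class(name, controller, api_group, params_kind, params_name,
                  scope="Cluster", namespace=None):
    """Build an IngressClass that references a parameters object.

    Hypothetical helper for illustration only.
    """
    if scope not in ("Cluster", "Namespace"):
        raise ValueError(f"scope must be 'Cluster' or 'Namespace', got {scope!r}")
    if scope == "Namespace" and not namespace:
        raise ValueError("namespace is required when scope is 'Namespace'")
    if scope == "Cluster" and namespace:
        raise ValueError("namespace must not be set when scope is 'Cluster'")

    parameters = {
        "apiGroup": api_group,
        "kind": params_kind,
        "name": params_name,
        "scope": scope,
    }
    if namespace:
        parameters["namespace"] = namespace

    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "IngressClass",
        "metadata": {"name": name},
        "spec": {"controller": controller, "parameters": parameters},
    }


# Every name below is made up for the example.
external = ingress_class(
    name="external-lb",
    controller="example.com/ingress-controller",
    api_group="k8s.example.com",
    params_kind="IngressParameters",
    params_name="external-config",
    scope="Namespace",
    namespace="ingress-system",
)
print(json.dumps(external, indent=2))
```

Namespace-scoped parameters let a team own the configuration object in its own namespace instead of needing a cluster-scoped resource.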

Kubernetes API Server Events

Kubernetes 1.25 introduces structured events for the API server. This provides better visibility into API server operations and can be useful for debugging and monitoring.

PersistentVolumeClaimResizeStatus

The PersistentVolumeClaimResizeStatus field provides more detailed status information about PersistentVolumeClaim resize operations.

What's the catch? Is there any reason to worry?

Yes, Kubernetes 1.25 has several important changes to be aware of:

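1- Pod Security Policy (PSP) removal - If your cluster uses PSP, you must switch to Pod Security Admission (which enforces the Pod Security Standards) or to a third-party admission controller before upgrading to Kubernetes 1.25.

2- Beta API removal - If your applications or operators use beta API versions that are being removed, you must migrate them to the stable versions (for example, policy/v1, batch/v1, or autoscaling/v2).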

3- Implicit kubelet config files - Implicit kubelet configuration files are no longer allowed, and you must use the kubelet config API.

Summary

Kubernetes 1.25 is a significant release with several important changes:

- Pod Security Policy (PSP) is removed (Pod Security Admission, which enforces the Pod Security Standards, is the replacement)

- CRI v1 is now stable

- Several deprecated beta API versions are removed

- OCI image specification support is added

- CSI Node expansion secret support is added

- Kubelet Windows service support is added

- Windows pod priority and preemption support is added

- AppArmor support is added

- etcd version 3.5 is now required

- New load balancer and ingress improvements

Kubernetes 1.25 requires careful planning for users who rely on Pod Security Policy or on the removed beta APIs. Plan your migration strategy accordingly and ensure your applications are compatible with the new APIs before upgrading.

Are you planning to upgrade to Kubernetes 1.25? Have you already transitioned from PSP to Pod Security Standards? Let us know in the comments section below!

For more insights about container and Kubernetes security, check out our Kubernetes security learning center.