24 Google Cloud Platform (GCP) security best practices

You’ve got a problem to solve, so you turned to Google Cloud Platform, intending to follow GCP security best practices as you build and host your solution. You create your account and are all set to brew some coffee and sit down at your workstation to architect, code, build, and deploy. Except… you aren’t.

There are many knobs you must tweak and practices to put into action if you want your solution to be operative, secure, reliable, performant, and cost effective. First things first, the best time to do that is now – right from the beginning, before you start to design and engineer.

What you'll learn

- Google Cloud Platform shared responsibility model

- Set up Google Cloud Platform security best practices

- GCP Security walkthrough

- Compliance Standards & Benchmarks

- Conclusion

Google Cloud Platform shared responsibility model

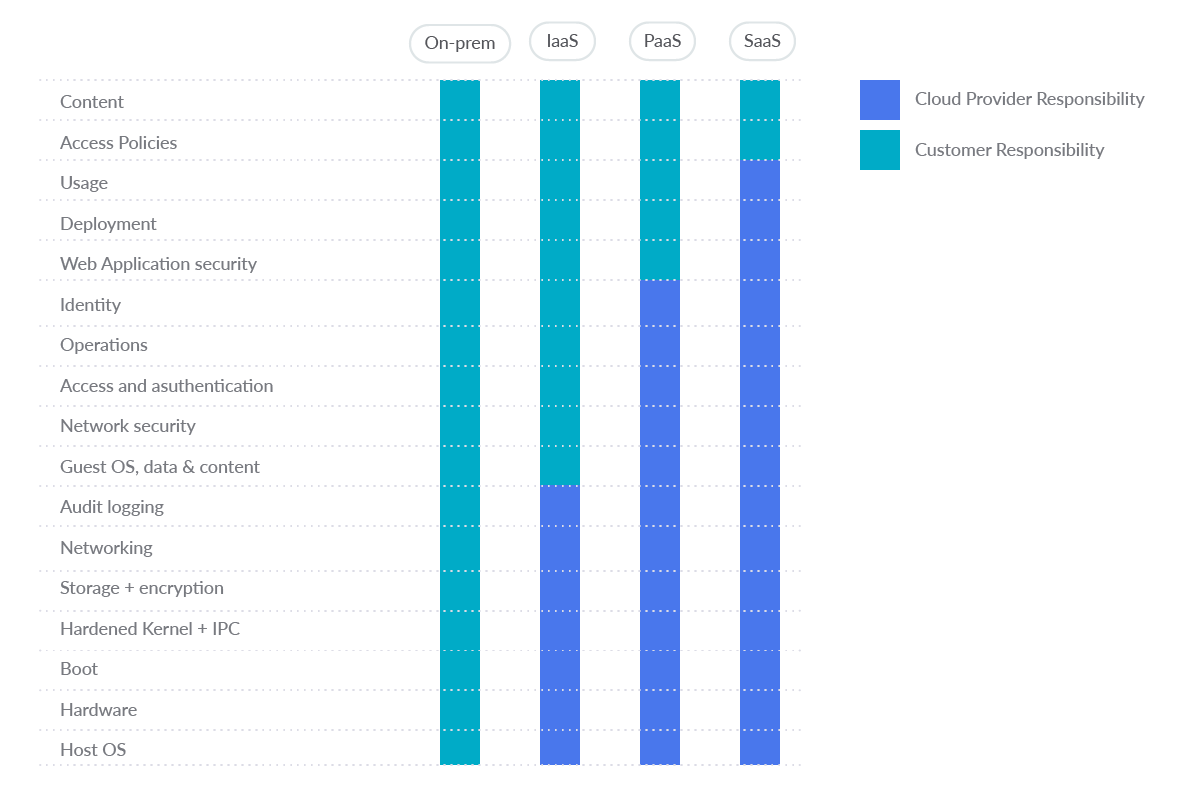

The scope of Google Cloud products and services ranges from conventional Infrastructure as a Service (IaaS) to Platform as a Service (PaaS) and Software as a Service (SaaS). As shown in the figure, the traditional boundaries of responsibility between users and cloud providers change based on the service they choose.

At the very least, as part of their share of the responsibility for security, public cloud providers need to give you a solid and secure foundation. Providers also need to empower you to understand and implement your own parts of the shared responsibility model.

Set up Google Cloud Platform security best practices

First, a word of caution: never use a non-corporate account.

Instead, use a fully managed corporate Google account to improve visibility, auditing, and control of access to Cloud Platform resources. Don't use email accounts outside of your organization, such as personal accounts, for business purposes.

Cloud Identity is a stand-alone Identity-as-a-Service (IDaaS) that gives Google Cloud users access to many of the identity management features provided by Google Workspace, Google's suite of secure cloud-native collaboration and productivity applications. Through the Cloud Identity management layer, you can enable or disable access to various Google solutions for members of your organization, including Google Cloud Platform (GCP).

Signing up for Cloud Identity also creates an organizational node for your domain. This helps you map your corporate structure and controls to Google Cloud resources through the Google Cloud resource hierarchy.

Now, activating Multi-Factor Authentication (MFA) is the most important thing you want to do. If you want a security-first mindset, do this for every user account you create in your system; it is especially crucial for administrators. MFA, along with strong passwords, is the most effective way to secure users' accounts against improper access.

Now that you are set, let's dig into the GCP security best practices.

GCP Security walkthrough

In this section, we will walk through the most common GCP services and provide two dozen (we like dozens here) best practices to adopt across them.

Achieving Google Cloud Platform security best practices with open source: Cloud Custodian is a Cloud Security Posture Management (CSPM) tool. CSPM tools evaluate your cloud configuration and identify common configuration mistakes. They also monitor cloud logs to detect threats and configuration changes.

Now let's walk through service by service.

Identity and Access Management (IAM)

GCP Identity and Access Management (IAM) helps enforce least privilege access control to your cloud resources. You can use IAM to restrict who is authenticated (signed in) and authorized (has permissions) to use resources.

A few GCP security best practices you want to implement for IAM:

1. Check your IAM policies for personal email accounts

For each Google Cloud Platform project, list the accounts that have been granted access to that project:

gcloud projects get-iam-policy PROJECT_ID

Also list the accounts added on each folder:

gcloud resource-manager folders get-iam-policy FOLDER_ID

And list your organization's IAM policy:

gcloud organizations get-iam-policy ORGANIZATION_ID

No email accounts outside the organization domain should be granted permissions in the IAM policies. This excludes Google owned service accounts.

By default, no email addresses outside the organization's domain have access to its Google Cloud deployments, but any user email account can be added to the IAM policy for Google Cloud Platform projects, folders, or organizations. To prevent this, enable Domain Restricted Sharing within the organization policy:

gcloud resource-manager org-policies allow --organization=ORGANIZATION_ID iam.allowedPolicyMemberDomains DOMAIN_ID

Here is a Cloud Custodian rule for detecting the use of personal accounts:

- name: personal-emails-used
  description: |
    Use corporate login credentials instead of personal accounts,
    such as Gmail accounts.
  resource: gcp.project
  filters:
    - type: iam-policy
      key: "bindings[*].members[]"
      op: contains-regex
      value: .+@(?!organization.com|.+gserviceaccount.com)(.+.com)*
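If you would rather inspect the policy yourself, the same idea can be sketched in a few lines of Python. This is only an illustration, assuming you saved the output of `gcloud projects get-iam-policy PROJECT_ID --format=json` and loaded it as a dictionary. The function name `external_members`, the sample policy, and the `example.com` domain are all hypothetical.

```python
def external_members(policy, org_domain):
    """Return user and group members whose email domain differs from org_domain."""
    flagged = set()
    for binding in policy.get("bindings", []):
        for member in binding.get("members", []):
            kind, _, email = member.partition(":")
            if kind not in ("user", "group"):
                continue  # service accounts, domain: entries, allUsers, etc.
            domain = email.rpartition("@")[2].lower()
            if domain != org_domain.lower():
                flagged.add(member)
    return sorted(flagged)


# Hypothetical policy, shaped like `get-iam-policy --format=json` output.
sample_policy = {
    "bindings": [
        {"role": "roles/editor",
         "members": ["user:alice@example.com", "user:bob@gmail.com"]},
        {"role": "roles/viewer",
         "members": ["serviceAccount:app@my-project.iam.gserviceaccount.com",
                     "group:devs@example.com"]},
    ]
}

print(external_members(sample_policy, "example.com"))
```

The sketch skips service accounts by member type rather than by domain, so it does not need the `gserviceaccount.com` exception used in the regex above.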

2. Ensure that MFA is enabled for all user accounts

Multi-factor authentication requires more than one mechanism to authenticate a user. This secures user logins from attackers exploiting stolen or weak credentials. By default, multi-factor authentication is not set.

Make sure that for each Google Cloud Platform project, folder, or organization, multi-factor authentication for each account is set and, if not, set it up.

3. Ensure Security Key enforcement for admin accounts

GCP users with Organization Administrator roles have the highest level of privilege in the organization.

These accounts should be protected with the strongest form of two-factor authentication: Security Key Enforcement. Ensure that admins use Security Keys to log in instead of weaker second factors, like SMS or one-time passwords (OTP). Security Keys are actual physical keys used to access Google Organization Administrator Accounts. They send an encrypted signature rather than a code, ensuring that logins cannot be phished.

Identify users with Organization Administrator privileges:

gcloud organizations get-iam-policy ORGANIZATION_ID

Look for members granted the role roles/resourcemanager.organizationAdmin and then manually verify that Security Key Enforcement has been enabled for each account. If not enabled, take it seriously and enable it immediately. By default, Security Key Enforcement is not enabled for Organization Administrators.

If an organization administrator loses access to their security key, the user may not be able to access their account. For this reason, it is important to configure backup security keys.

4. Prevent the use of user-managed service account keys

Anyone with access to the keys can access resources through the service account. GCP-managed keys are used by Cloud Platform services, such as App Engine and Compute Engine. These keys cannot be downloaded. Google holds the key and rotates it automatically almost every week.

On the other hand, user-managed keys are created, downloaded, and managed by the user and only expire 10 years after they are created.

User-managed keys can easily be compromised by common development practices, such as exposing them in source code, leaving them in the downloads directory, or accidentally showing them on support blogs or channels.

List all the service accounts:

gcloud iam service-accounts list

Identify user-managed service accounts, as such account emails end with iam.gserviceaccount.com.

For each user-managed service account, list the keys managed by the user:

gcloud iam service-accounts keys list --iam-account=SERVICE_ACCOUNT --managed-by=user

No keys should be listed. If any key shows up in the list, you should delete it:

gcloud iam service-accounts keys delete --iam-account=SERVICE_ACCOUNT KEY_ID

Please be aware that deleting user-managed service account keys may break communication with the applications using the corresponding keys.

As a preventive measure, you will also want to disable service account key creation.

Other GCP security IAM best practices include:

- Service accounts should not have Admin privileges.

- IAM users should not be assigned the Service Account User or Service Account Token Creator roles at project level.

- User-managed / external keys for service accounts (if allowed, see #4) should be rotated every 90 days or less.

- Separation of duties should be enforced while assigning service account related roles to users.

- Separation of duties should be enforced while assigning KMS related roles to users.

- API keys should not be created for a project.

- API keys should be restricted to use by only specified Hosts and Apps.

- API keys should be restricted to only APIs that the application needs access to.

- API keys should be rotated every 90 days or less.
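The 90-day rotation guideline for user-managed service account keys lends itself to a quick script. The following is a minimal sketch, assuming you exported the key list with `gcloud iam service-accounts keys list --iam-account=SERVICE_ACCOUNT --managed-by=user --format=json` and that each entry carries a `validAfterTime` timestamp in the `YYYY-MM-DDTHH:MM:SSZ` form. The function name `keys_older_than` and the sample key names are hypothetical.

```python
from datetime import datetime, timedelta, timezone


def keys_older_than(keys, max_age_days=90, now=None):
    """Return names of keys created more than max_age_days before `now`."""
    now = now or datetime.now(timezone.utc)
    limit = timedelta(days=max_age_days)
    stale = []
    for key in keys:
        # Assumption: timestamps have no fractional seconds.
        created = datetime.strptime(
            key["validAfterTime"], "%Y-%m-%dT%H:%M:%SZ"
        ).replace(tzinfo=timezone.utc)
        if now - created > limit:
            stale.append(key["name"])
    return stale


# Hypothetical sample data and a fixed "now" so the result is reproducible.
sample_keys = [
    {"name": "key-a", "validAfterTime": "2024-01-01T00:00:00Z"},
    {"name": "key-b", "validAfterTime": "2024-05-01T00:00:00Z"},
]
fixed_now = datetime(2024, 6, 1, tzinfo=timezone.utc)
print(keys_older_than(sample_keys, now=fixed_now))
```

Any key the function returns is a candidate for rotation or deletion, subject to the warning above about breaking applications that still use it.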

Key Management Service (KMS)

GCP Cloud Key Management Service (KMS) is a cloud-hosted key management service that allows you to manage symmetric and asymmetric encryption keys for your cloud services in the same way as you would on-premises. It lets you create, use, rotate, and destroy AES 256, RSA 2048, RSA 3072, RSA 4096, EC P256, and EC P384 encryption keys.

Some Google Cloud Platform security best practices you absolutely want to implement for KMS:

5. Check for anonymously or publicly accessible Cloud KMS keys

Granting permissions to allUsers or allAuthenticatedUsers lets anyone access the resource. Such access may not be desirable if sensitive data is protected by that key.

In this case, make sure that anonymous and/or public access to a Cloud KMS encryption key is not allowed. By default, Cloud KMS does not allow access to allUsers or allAuthenticatedUsers.

List all Cloud KMS keys:

gcloud kms keys list --keyring=KEY_RING_NAME --location=global --format=json | jq '.[].name'

Remove IAM policy binding for a KMS key to remove access to allUsers and allAuthenticatedUsers:

gcloud kms keys remove-iam-policy-binding KEY_NAME --keyring=KEY_RING_NAME --location=global --member=allUsers --role=ROLE

gcloud kms keys remove-iam-policy-binding KEY_NAME --keyring=KEY_RING_NAME --location=global --member=allAuthenticatedUsers --role=ROLE

The following is a Cloud Custodian rule for detecting the existence of anonymously or publicly accessible Cloud KMS keys:

- name: anonymously-or-publicly-accessible-cloud-kms-keys
  description: |
    It is recommended that the IAM policy on Cloud KMS cryptokeys should
    restrict anonymous and/or public access.
  resource: gcp.kms-cryptokey
  filters:
    - type: iam-policy
      key: "bindings[*].members[]"
      op: intersect
      value: ["allUsers", "allAuthenticatedUsers"]
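To find out which ROLE values to pass to the remove-iam-policy-binding commands, you can scan a key's IAM policy for the two public principals. Here is a small sketch, assuming the policy was exported with `gcloud kms keys get-iam-policy KEY_NAME --keyring=KEY_RING_NAME --location=global --format=json`. The function name `public_bindings` and the sample policy are hypothetical.

```python
PUBLIC_MEMBERS = {"allUsers", "allAuthenticatedUsers"}


def public_bindings(policy):
    """Return (role, member) pairs that grant access to public principals."""
    return [
        (binding["role"], member)
        for binding in policy.get("bindings", [])
        for member in binding.get("members", [])
        if member in PUBLIC_MEMBERS
    ]


# Hypothetical policy for illustration.
sample_policy = {
    "bindings": [
        {"role": "roles/cloudkms.cryptoKeyDecrypter",
         "members": ["allUsers", "user:alice@example.com"]},
        {"role": "roles/cloudkms.admin",
         "members": ["user:admin@example.com"]},
    ]
}

for role, member in public_bindings(sample_policy):
    print(f"remove {member} from {role}")
```

Each pair printed corresponds to one `--member` and `--role` combination to remove.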

6. Ensure that KMS encryption keys are rotated within a period of 90 days or less

Keys can be created with a specified rotation period. This is the time it takes for a new key version to be automatically generated. Since a key is used to protect some corpus of data, a collection of files can be encrypted using the same key, and users with decryption rights for that key can decrypt those files. Therefore, you need to make sure that the rotation period is set to a specific time.

A GCP security best practice is to establish this rotation period to 90 days or less:

gcloud kms keys update KEY_NAME --keyring=KEY_RING --location=LOCATION --rotation-period=90d --next-rotation-time=NEXT_ROTATION_TIME

By default, KMS encryption keys are rotated every 90 days. If you never modified this, you are good to go.
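To verify rather than assume, you can read the rotation period back from the key metadata. The sketch below assumes the JSON from `gcloud kms keys list --keyring=KEY_RING_NAME --location=LOCATION --format=json`, in which a key with automatic rotation has a `rotationPeriod` field expressed in seconds, such as `"7776000s"`. The function name `rotation_too_long` is hypothetical, and treating a missing field as a failure is a choice made for this example.

```python
MAX_ROTATION_SECONDS = 90 * 24 * 60 * 60  # 90 days = 7,776,000 seconds


def rotation_too_long(key, max_seconds=MAX_ROTATION_SECONDS):
    """Return True if the key rotates less often than every max_seconds."""
    period = key.get("rotationPeriod")
    if period is None:
        return True  # no automatic rotation configured at all
    seconds = float(period.rstrip("s"))
    return seconds > max_seconds


# Hypothetical sample entries.
print(rotation_too_long({"name": "k1", "rotationPeriod": "7776000s"}))
print(rotation_too_long({"name": "k2", "rotationPeriod": "31536000s"}))
print(rotation_too_long({"name": "k3"}))
```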

Cloud Storage

Google Cloud Storage lets you store any amount of data in namespaces called "buckets." These buckets are an appealing target for any attacker who wants to get hold of your data, so you must take great care in securing them.

These are a few of the GCP security best practices to implement:

7. Ensure that Cloud Storage buckets are not anonymously or publicly accessible

Allowing anonymous or public access gives everyone permission to access bucket content. Such access may not be desirable if you are storing sensitive data. Therefore, make sure that anonymous or public access to the bucket is not allowed.

List all buckets in a project:

gsutil ls

Check the IAM Policy for each bucket returned from the above command:

gsutil iam get gs://BUCKET_NAME

No role should contain allUsers or allAuthenticatedUsers as a member. If that's not the case, you'll want to remove them with:

gsutil iam ch -d allUsers gs://BUCKET_NAME

gsutil iam ch -d allAuthenticatedUsers gs://BUCKET_NAME

Also, you might want to prevent Storage buckets from becoming publicly accessible by setting up the Domain restricted sharing organization policy.

8. Ensure that Cloud Storage buckets have uniform bucket-level access enabled

Cloud Storage provides two systems for granting users permissions to access buckets and objects: Cloud Identity and Access Management (Cloud IAM) and Access Control Lists (ACLs). These systems work in parallel, so only one of them needs to grant a permission for the user to access the Cloud Storage resource.

Cloud IAM is used throughout Google Cloud and can grant different permissions at the bucket and project levels. ACLs are used only by Cloud Storage and have limited permission options, but you can grant permissions on a per-object basis (fine-grained).

Enabling the uniform bucket-level access feature disables ACLs on all Cloud Storage resources (buckets and objects), and allows exclusive access through Cloud IAM.

This feature is also used to consolidate and simplify the method of granting access to cloud storage resources. Enabling uniform bucket-level access guarantees that if a Storage bucket is not publicly accessible, no object in the bucket is publicly accessible either.

List all buckets in a project:

gsutil ls

Verify that uniform bucket-level access is enabled for each bucket returned from the above command:

gsutil uniformbucketlevelaccess get gs://BUCKET_NAME/

If uniform bucket-level access is enabled, the response looks like the following:

Uniform bucket-level access setting for gs://BUCKET_NAME/:
  Enabled: True
  LockedTime: LOCK_DATE

Should it not be enabled for a bucket, you can enable it with:

gsutil uniformbucketlevelaccess set on gs://BUCKET_NAME/

You can also set up an Organization Policy to enforce that any new bucket has uniform bucket-level access enabled.

This is a Cloud Custodian rule to check for buckets without uniform-access enabled:

- name: check-uniform-access-in-buckets
  description: |
    It is recommended that uniform bucket-level access is enabled on
    Cloud Storage buckets.
  resource: gcp.bucket
  filters:
    - not:
        - type: value
          key: "iamConfiguration.uniformBucketLevelAccess.enabled"
          value: true

Virtual Private Cloud (VPC)

Virtual Private Cloud provides networking for your cloud-based resources and services that is global, scalable, and flexible. It provides networking functionality to App Engine, Compute Engine, and Google Kubernetes Engine (GKE), so you must take great care in securing it.

This is one of the best GCP security practices to implement:

9. Enable VPC Flow Logs for VPC Subnets

By default, the VPC Flow Logs feature is disabled when a new VPC network subnet is created. When enabled, VPC Flow Logs begin collecting network traffic data to and from your Virtual Private Cloud (VPC) subnets for network usage, network traffic cost optimization, network forensics, and real-time security analysis.

To increase the visibility and security of your Google Cloud VPC network, it's strongly recommended that you enable Flow Logs for each business-critical or production VPC subnet.

gcloud compute networks subnets update SUBNET_NAME --region=REGION --enable-flow-logs
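Before updating subnets one by one, it helps to know which ones are missing Flow Logs. The following sketch assumes the JSON from `gcloud compute networks subnets list --format=json`, and that the setting appears either as a boolean `enableFlowLogs` field or as `logConfig.enable`. Which of the two is present can depend on the API version, so both are checked. The function name and sample data are hypothetical.

```python
def subnets_without_flow_logs(subnets):
    """Return (name, region) pairs for subnets that have Flow Logs disabled."""
    missing = []
    for subnet in subnets:
        enabled = (subnet.get("enableFlowLogs")
                   or subnet.get("logConfig", {}).get("enable"))
        if not enabled:
            # The region field is a full URL; keep only its last segment.
            region = subnet.get("region", "").rsplit("/", 1)[-1]
            missing.append((subnet["name"], region))
    return missing


# Hypothetical sample data.
base = "https://www.googleapis.com/compute/v1/projects/myproject/regions/"
sample_subnets = [
    {"name": "prod", "region": base + "us-central1", "enableFlowLogs": True},
    {"name": "staging", "region": base + "europe-west1"},
    {"name": "batch", "region": base + "us-east1", "logConfig": {"enable": True}},
]

print(subnets_without_flow_logs(sample_subnets))
```

Each pair returned gives the SUBNET_NAME and REGION to pass to the update command above.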

Compute Engine

Compute Engine provides a secure and customizable compute service that lets you create and run virtual machines on Google's infrastructure.

Several GCP security best practices to implement as soon as possible:

10. Ensure 'Block Project-wide SSH keys' is enabled for VM instances

You can use your project-wide SSH key to log in to all Google Cloud VM instances running within your GCP project. Using SSH keys for the entire project makes it easier to manage SSH keys, but if leaked, they become a security risk that can affect all VM instances in the project. So, it is highly recommended to use specific SSH keys instead, reducing the attack surface if they ever get compromised.

By default, the Block Project-Wide SSH Keys security feature is not enabled for your Google Compute Engine instances.

To Block Project-Wide SSH keys, set the metadata value to TRUE:

gcloud compute instances add-metadata INSTANCE_NAME --metadata block-project-ssh-keys=true

The following is a Cloud Custodian sample rule to check for instances without this block:

- name: instances-without-project-wide-ssh-keys-block
  description: |
    It is recommended to use Instance specific SSH key(s) instead
    of using common/shared project-wide SSH key(s) to access Instances.
  resource: gcp.instance
  filters:
    - not:
        - type: value
          key: name
          op: regex
          value: '(gke).+'
    - type: metadata
      key: '"block-project-ssh-keys"'
      value: "false"
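A similar check can be run against the output of `gcloud compute instances list --format=json`. The sketch below assumes each instance exposes its metadata as a list of `key`/`value` items under `metadata.items`. Unlike the rule above, it also flags instances where the key is absent, since the block is off by default. Like the rule, it skips instances whose names start with `gke`. The function names and sample data are hypothetical.

```python
def blocks_project_ssh_keys(instance):
    """Return True if block-project-ssh-keys is set to true on the instance."""
    for item in instance.get("metadata", {}).get("items", []):
        if item.get("key") == "block-project-ssh-keys":
            return str(item.get("value", "")).lower() == "true"
    return False  # key absent: project-wide keys are not blocked


def unprotected_instances(instances):
    """Return names of non-GKE instances that accept project-wide SSH keys."""
    return [
        inst["name"]
        for inst in instances
        if not inst["name"].startswith("gke")
        and not blocks_project_ssh_keys(inst)
    ]


# Hypothetical sample data.
sample_instances = [
    {"name": "web-1", "metadata": {"items": [
        {"key": "block-project-ssh-keys", "value": "true"}]}},
    {"name": "web-2", "metadata": {"items": [
        {"key": "block-project-ssh-keys", "value": "false"}]}},
    {"name": "db-1", "metadata": {}},
    {"name": "gke-cluster-pool-abc", "metadata": {}},
]

print(unprotected_instances(sample_instances))
```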

11. Ensure 'Enable connecting to serial ports' is not enabled for VM Instance

A Google Cloud virtual machine (VM) instance has four virtual serial ports. Interacting with a serial port is similar to using a terminal window in that the inputs and outputs are completely in text mode, and there is no graphical interface or mouse support. The instance's operating system, BIOS, and other system-level entities can often write output to the serial port and accept input such as commands and responses to prompts.

These system-level entities typically use the first serial port (Port 1), which is often referred to as the interactive serial console.

The interactive serial console does not support IP-based access restrictions, such as IP whitelists. When you enable the interactive serial console on an instance, clients can try to connect to it from any IP address. This allows anyone who knows the correct SSH key, username, project ID, zone, and instance name to connect to that instance. Therefore, to adhere to Google Cloud Platform security best practices, you should disable support for the interactive serial console.

gcloud compute instances add-metadata INSTANCE_NAME --zone=ZONE --metadata serial-port-enable=false

Also, you can prevent VMs from having interactive serial port access enabled by means of the "Disable VM serial port access" organization policy.

12. Ensure VM disks for critical VMs are encrypted with Customer-Supplied Encryption Keys (CSEK)

By default, the Compute Engine service encrypts all data at rest.

Cloud services manage this type of encryption without any additional action from users or applications. However, if you want full control over instance disk encryption, you can provide your own encryption key.

These custom keys, also known as Customer-Supplied Encryption Keys (CSEKs), are used by Google Compute Engine to protect the Google-generated keys used to encrypt and decrypt instance data. The Compute Engine service does not store CSEK on the server and cannot access protected data unless you specify the required key.

At the very least, business critical VMs should have VM disks encrypted with CSEK.

By default, VM disks are encrypted with Google-managed keys. They are not encrypted with Customer-Supplied Encryption Keys.

Currently, there is no way to update the encryption of an existing disk, so you should create a new disk with Encryption set to Customer supplied. A word of caution is necessary here:

If you lose your encryption key, you will not be able to recover the data.

In the gcloud compute tool, encrypt a disk using the --csek-key-file flag during instance creation. If you are using an RSA-wrapped key, use the gcloud beta component:

gcloud beta compute instances create INSTANCE_NAME --csek-key-file=key-file.json

To encrypt a standalone persistent disk use:

gcloud beta compute disks create DISK_NAME --csek-key-file=key-file.json

It is your duty to generate and manage your key. You must provide a key that is a 256-bit string encoded in RFC 4648 standard base64 to the Compute Engine. A sample key-file.json looks like this:

[
  {
    "uri": "https://www.googleapis.com/compute/v1/projects/myproject/zones/us-central1-a/disks/example-disk",
    "key": "acXTX3rxrKAFTF0tYVLvydU1riRZTvUNC4g5I11NY-c=",
    "key-type": "raw"
  },
  {
    "uri": "https://www.googleapis.com/compute/v1/projects/myproject/global/snapshots/my-private-snapshot",
    "key": "ieCx/NcW06PcT7Ep1X6LUTc/hLvUDYyzSZPPVCVPTVEohpeHASqC8uw5TzyO9U+Fka9JFHz0mBibXUInrC/jEk014kCK/NPjYgEMOyssZ4ZINPKxlUh2zn1bV+MCaTICrdmuSBTWlUUiFoDD6PYznLwh8ZNdaheCeZ8ewEXgFQ8V+sDroLaN3Xs3MDTXQEMMoNUXMCZEIpg9Vtp9x2oeQ5lAbtt7bYAAHf5l+gJWw3sUfs0/Glw5fpdjT8Uggrr+RMZezGrltJEF293rvTIjWOEB3z5OHyHwQkvdrPDFcTqsLfh+8Hr8g+mf+7zVPEC8nEbqpdl3GPv3A7AwpFp7MA==",
    "key-type": "rsa-encrypted"
  }
]
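Generating a raw key of the right shape takes only a few lines. The sketch below produces 32 random bytes (256 bits), base64-encodes them, and wraps the result in an entry shaped like the "raw" example above. The function names `generate_csek` and `key_file_entry` are hypothetical, and the disk URI is simply the one from the sample file. Remember that you are responsible for storing the key safely.

```python
import base64
import json
import secrets


def generate_csek():
    """Return a random 256-bit key, base64-encoded per RFC 4648."""
    return base64.b64encode(secrets.token_bytes(32)).decode("ascii")


def key_file_entry(resource_uri, key):
    """Build one key-file.json entry for a raw (not RSA-wrapped) key."""
    return {"uri": resource_uri, "key": key, "key-type": "raw"}


key = generate_csek()
entry = key_file_entry(
    "https://www.googleapis.com/compute/v1/projects/myproject"
    "/zones/us-central1-a/disks/example-disk",
    key,
)
print(json.dumps([entry], indent=2))
```

The `secrets` module is used instead of `random` because it draws from the operating system's cryptographically secure random source.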

Other GCP security best practices for Compute Engine include:

- Ensure that instances are not configured to use the default service account.

- Ensure that instances are not configured to use the default service account with full access to all Cloud APIs.

- Ensure oslogin is enabled for a Project.

- Ensure that IP forwarding is not enabled on Instances.

- Ensure Compute instances are launched with Shielded VM enabled.

- Ensure that Compute instances do not have public IP addresses.

- Ensure that App Engine applications enforce HTTPS connections.

Google Kubernetes Engine Service (GKE)

The Google Kubernetes Engine (GKE) provides a managed environment for deploying, managing, and scaling containerized applications using the Google infrastructure. A GKE environment consists of multiple machines (specifically, Compute Engine instances) grouped together to form a cluster. The GCP security best practices continue with GKE.

13. Enable application-layer secrets encryption for GKE clusters

Application-layer secret encryption provides an additional layer of security for sensitive data, such as Kubernetes secrets stored on etcd. This feature allows you to use Cloud KMS managed encryption keys to encrypt data at the application layer and protect it from attackers accessing offline copies of etcd. Enabling application-layer secret encryption in a GKE cluster is considered a security best practice for applications that store sensitive data.

Create a key ring to store the CMK:

gcloud kms keyrings create KEY_RING_NAME --location=REGION --project=PROJECT_NAME --format="table(name)"

Now, create a new Cloud KMS Customer-Managed Key (CMK) within the KMS key ring created at the previous step:

gcloud kms keys create KEY_NAME --location=REGION --keyring=KEY_RING_NAME --purpose=encryption --protection-level=software --rotation-period=90d --format="table(name)"

And lastly, assign the Cloud KMS "CryptoKey Encrypter/Decrypter" role to the appropriate service account:

gcloud projects add-iam-policy-binding PROJECT_ID --member=serviceAccount:service-PROJECT_NUMBER@container-engine-robot.iam.gserviceaccount.com --role=roles/cloudkms.cryptoKeyEncrypterDecrypter

The final step is to enable application-layer secrets encryption for the selected cluster, using the Cloud KMS Customer-Managed Key (CMK) created in the previous steps:

gcloud container clusters update CLUSTER --region=REGION --project=PROJECT_NAME --database-encryption-key=projects/PROJECT_NAME/locations/REGION/keyRings/KEY_RING_NAME/cryptoKeys/KEY_NAME

14. Enable GKE cluster node encryption with customer-managed keys

To give you more control over the GKE data encryption / decryption process, make sure your Google Kubernetes Engine (GKE) cluster node is encrypted with a customer-managed key (CMK). You can use the Cloud Key Management Service (Cloud KMS) to create and manage your own customer-managed keys (CMKs). Cloud KMS provides secure and efficient cryptographic key management, controlled key rotation, and revocation mechanisms.

At this point, you should already have a key ring where you store the CMKs, as well as customer-managed keys. You will use them here too.

To enable GKE cluster node encryption, you will need to re-create the node pool. First, describe the node pool that you want to re-create, passing its name as the identifier, to capture its current configuration:

gcloud container node-pools describe NODE_POOL --cluster=CLUSTER_NAME --region=REGION --format=json

Now, using the information returned in the previous step, create a new Google Cloud GKE cluster node pool, encrypted with your customer-managed key (CMK):

gcloud beta container node-pools create NODE_POOL --cluster=CLUSTER_NAME --region=REGION --disk-type=pd-standard --disk-size=150 --boot-disk-kms-key=projects/PROJECT/locations/REGION/keyRings/KEY_RING_NAME/cryptoKeys/KEY_NAME

Once your new cluster node pool is working properly, you can delete the original node pool so that it stops incurring charges on your Google Cloud account.

Take good care to delete the old pool and not the new one!

gcloud container node-pools delete NODE_POOL --cluster=CLUSTER_NAME --region=REGION

15. Restrict network access to GKE clusters

To limit your exposure to the Internet, make sure your Google Kubernetes Engine (GKE) cluster is configured with a master authorized network. Master authorized networks allow you to whitelist specific IP addresses and/or IP address ranges to access cluster master endpoints using HTTPS.

Adding a master authorized network can provide network-level protection and additional security benefits to your GKE cluster. Authorized networks allow access to a particular set of trusted IP addresses, such as those originating from a secure network. This helps protect access to the GKE cluster if the cluster's authentication or authorization mechanism is vulnerable.

Add authorized networks to the selected GKE cluster to grant access to the cluster master from the trusted IP addresses / IP ranges that you define:

gcloud container clusters update CLUSTER_NAME --region=REGION --enable-master-authorized-networks --master-authorized-networks=CIDR_1,CIDR_2,...

In the previous command, you can specify multiple CIDRs (up to 50) separated by a comma.
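Since a typo in a CIDR can either lock you out or open the cluster wider than intended, it can be worth validating the list before passing it to gcloud. This is a minimal sketch using Python's standard `ipaddress` module. The function name `authorized_networks_arg` is hypothetical, the limit of 50 is the figure quoted above, and refusing a /0 range is a choice made for this example.

```python
import ipaddress

MAX_AUTHORIZED_NETWORKS = 50  # limit quoted in the text above


def authorized_networks_arg(cidrs):
    """Validate CIDRs and join them into a comma-separated flag value."""
    if len(cidrs) > MAX_AUTHORIZED_NETWORKS:
        raise ValueError(f"at most {MAX_AUTHORIZED_NETWORKS} CIDRs are allowed")
    normalized = []
    for cidr in cidrs:
        # strict=True rejects ranges with host bits set, e.g. 203.0.113.5/24.
        network = ipaddress.ip_network(cidr, strict=True)
        if network.prefixlen == 0:
            raise ValueError(f"{cidr} would authorize the whole Internet")
        normalized.append(str(network))
    return ",".join(normalized)


# Documentation-reserved example ranges.
print(authorized_networks_arg(["203.0.113.0/24", "198.51.100.7/32"]))
```

The returned string is what you would place after `--master-authorized-networks=`.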

The above are the most important best practices for GKE, since not adhering to them poses a high risk, but there are other security best practices you might want to adhere to:

- Enable auto-repair for GKE cluster nodes.

- Enable auto-upgrade for GKE cluster nodes.

- Enable integrity monitoring for GKE cluster nodes.

- Enable secure boot for GKE cluster nodes.

- Use shielded GKE cluster nodes.

Cloud Logging

Cloud Logging is a fully managed service that allows you to store, search, analyze, monitor, and alert on log data and events from Google Cloud and Amazon Web Services. You can collect log data from over 150 popular application components, on-premises systems, and hybrid cloud systems.

More GCP security best practices focus on Cloud Logging:

16. Ensure that Cloud Audit Logging is configured properly across all services and all users from a project

Cloud Audit Logging maintains two audit logs for each project, folder, and organization: Admin Activity and Data Access. Admin Activity logs contain log entries for API calls or other administrative actions that modify the configuration or metadata of resources. These are enabled for all services and cannot be configured. On the other hand, Data Access audit logs record API calls that create, modify, or read user-provided data. These are disabled by default and should be enabled.

It is recommended to configure an effective default audit config that tracks user activity as well as changes (tampering) to user data. Logs should be captured for all users.

For this, you will need to edit the project's policy. First, download it as a yaml file:

gcloud projects get-iam-policy PROJECT_ID > /tmp/project_policy.yaml

Now, edit /tmp/project_policy.yaml adding or changing only the audit logs configuration to the following:

auditConfigs:
- auditLogConfigs:
  - logType: DATA_WRITE
  - logType: DATA_READ
  service: allServices

Please note that exemptedMembers is not set as audit logging should be enabled for all the users. Last, update the policy with the new changes:

gcloud projects set-iam-policy PROJECT_ID /tmp/project_policy.yaml

Enabling the Data Access audit logs might result in your project being charged for the additional logs usage.
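To confirm that a project already matches the configuration shown above, you can inspect its policy programmatically. The sketch below assumes the policy was exported with `gcloud projects get-iam-policy PROJECT_ID --format=json` and loaded as a dictionary. The function name `audit_config_gaps` and the wording of the messages are hypothetical.

```python
REQUIRED_LOG_TYPES = {"DATA_READ", "DATA_WRITE"}


def audit_config_gaps(policy):
    """Return a list of problems; an empty list means the config matches."""
    for config in policy.get("auditConfigs", []):
        if config.get("service") != "allServices":
            continue
        problems = []
        entries = config.get("auditLogConfigs", [])
        enabled = {entry.get("logType") for entry in entries}
        for missing in sorted(REQUIRED_LOG_TYPES - enabled):
            problems.append(f"{missing} not enabled for allServices")
        for entry in entries:
            if entry.get("exemptedMembers"):
                problems.append(f"{entry.get('logType')} has exempted members")
        return problems
    return ["no auditConfigs entry for allServices"]


# Hypothetical policies for illustration.
good = {"auditConfigs": [{"service": "allServices", "auditLogConfigs": [
    {"logType": "DATA_WRITE"}, {"logType": "DATA_READ"}]}]}
partial = {"auditConfigs": [{"service": "allServices", "auditLogConfigs": [
    {"logType": "DATA_WRITE", "exemptedMembers": ["user:bob@example.com"]}]}]}

print(audit_config_gaps(good))
print(audit_config_gaps(partial))
```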

17. Ensure that sinks are configured for all log entries

You will also want to create a sink that exports a copy of all log entries. This way, you can aggregate logs from multiple projects and export them to a Security Information and Event Management (SIEM).

Exporting involves creating a filter to select the log entries to export and selecting the destination in Cloud Storage, BigQuery, or Cloud Pub/Sub. Filters and destinations are kept in an object called a sink. To ensure that all log entries are exported to the sink, make sure the filter is not configured.

To create a sink to export all log entries into a Google Cloud Storage bucket, run the following command:

gcloud logging sinks create SINK_NAME storage.googleapis.com/BUCKET_NAME

This will export events to a bucket, but you might want to use Cloud Pub/Sub or BigQuery instead.

This is an example of a Cloud Custodian rule to check that the sinks are configured with no filter:

- name: check-no-filters-in-sinks
  description: |
    It is recommended to create a sink that will export copies of
    all the log entries. This can help aggregate logs from multiple
    projects and export them to a Security Information and Event
    Management (SIEM).
  resource: gcp.log-project-sink
  filters:
    - type: value
      key: filter
      value: empty

18. Ensure that retention policies on log buckets are configured using Bucket Lock

You can enable retention policies on log buckets to prevent logs stored in cloud storage buckets from being overwritten or accidentally deleted. It is recommended that you set up retention policies and configure bucket locks on all storage buckets that are used as log sinks, per the previous best practice.

To list all sinks destined to storage buckets:

gcloud logging sinks list --project=PROJECT_ID

For each storage bucket listed above, set a retention policy and lock it:

gsutil retention set TIME_DURATION gs://BUCKET_NAME

gsutil retention lock gs://BUCKET_NAME

Bucket locking is an irreversible action. Once you lock a bucket, you cannot remove the retention policy from it or shorten the retention period.

19. Enable logs router encryption with customer-managed keys

Make sure your Google Cloud Logs Router data is encrypted with a customer-managed key (CMK) to give you complete control over the data encryption and decryption process, as well as to meet your compliance requirements.

You will want to add a policy binding to the IAM policy of the CMK that assigns the Cloud KMS "CryptoKey Encrypter/Decrypter" role to the necessary service account. Here, you'll use the keyring and the CMK already created in #13.

gcloud kms keys add-iam-policy-binding KEY_ID --keyring=KEY_RING_NAME --location=global --member=serviceAccount:PROJECT_NUMBER@gcp-sa-logging.iam.gserviceaccount.com --role=roles/cloudkms.cryptoKeyEncrypterDecrypter

Cloud SQL

Cloud SQL is a fully managed relational database service for MySQL, PostgreSQL, and SQL Server. Run the same relational databases you know with their rich extension collections, configuration flags, and developer ecosystem, but without the hassle of self-management.

GCP security best practices focused on Cloud SQL:

20. Ensure that the Cloud SQL database instance requires all incoming connections to use SSL

If intercepted, for example through a man-in-the-middle (MITM) attack, a SQL database connection may reveal sensitive data such as credentials, database queries, and query output. For security reasons, it's recommended that you always use SSL encryption when connecting to your PostgreSQL, MySQL generation 1, and MySQL generation 2 instances.

To enforce SSL encryption for an instance, run the command:

gcloud sql instances patch INSTANCE_NAME --require-ssl

Additionally, MySQL generation 1 instances must be restarted for this configuration to take effect.

This Cloud Custodian rule can check for instances without SSL enforcement:

- name: cloud-sql-instances-without-ssl-required
  description: |
    It is recommended to enforce all incoming connections to
    SQL database instance to use SSL.
  resource: gcp.sql-instance
  filters:
    - not:
        - type: value
          key: "settings.ipConfiguration.requireSsl"
          value: true
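For a quick audit outside Cloud Custodian, the same condition can be evaluated against a saved instance listing. This is a minimal sketch that assumes the instances were exported as JSON (for example with `gcloud sql instances list --format=json`) and that the setting lives at `settings.ipConfiguration.requireSsl`, the key the rule above uses. The function name and instance names are hypothetical.

```python
def instances_without_ssl(instances):
    """Return names of instances where requireSsl is missing or not true."""
    names = []
    for inst in instances:
        ip_config = inst.get("settings", {}).get("ipConfiguration", {})
        if ip_config.get("requireSsl") is not True:
            names.append(inst["name"])
    return names


# Hypothetical listing: one compliant instance, two that need patching.
instances = [
    {"name": "orders-db", "settings": {"ipConfiguration": {"requireSsl": True}}},
    {"name": "legacy-db", "settings": {"ipConfiguration": {"requireSsl": False}}},
    {"name": "scratch-db", "settings": {"ipConfiguration": {}}},
]

print(instances_without_ssl(instances))
```

A missing field is treated as non-compliant here, which mirrors the `not: value: true` logic of the rule.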

21. Ensure that Cloud SQL database instances are not open to the world

Only trusted / known required IPs should be whitelisted to connect in order to minimize the attack surface of the database server instance. The allowed networks must not have an IP / network configured to 0.0.0.0/0 that allows access to the instance from anywhere in the world. Note that allowed networks apply only to instances with public IPs.

gcloud sql instances patch INSTANCE_NAME --authorized-networks=IP_ADDR1,IP_ADDR2...

To prevent new SQL instances from being configured to accept incoming connections from any IP addresses, set up a Restrict Authorized Networks on Cloud SQL instances Organization Policy.
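To find existing instances that already allow traffic from anywhere, you could scan their authorized networks for a zero-length prefix. The sketch below assumes a JSON listing in which each instance exposes `settings.ipConfiguration.authorizedNetworks` as a list of entries with a `value` field holding the CIDR range. The function and instance names are illustrative.

```python
import ipaddress


def open_to_world(instances):
    """Return names of instances with an authorized network that covers every address."""
    flagged = []
    for inst in instances:
        ip_config = inst.get("settings", {}).get("ipConfiguration", {})
        for net in ip_config.get("authorizedNetworks", []):
            # A prefix length of 0 (0.0.0.0/0 or ::/0) matches any source address.
            if ipaddress.ip_network(net["value"], strict=False).prefixlen == 0:
                flagged.append(inst["name"])
                break
    return flagged


# Hypothetical listing for illustration.
instances = [
    {"name": "orders-db", "settings": {"ipConfiguration": {
        "authorizedNetworks": [{"value": "203.0.113.0/24"}]}}},
    {"name": "legacy-db", "settings": {"ipConfiguration": {
        "authorizedNetworks": [{"value": "198.51.100.7"}, {"value": "0.0.0.0/0"}]}}},
]

print(open_to_world(instances))
```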

22. Ensure that Cloud SQL database instances do not have public IPs

To lower the organization's attack surface, Cloud SQL databases should not have public IPs. Private IPs provide improved network security and lower latency for your application.

For every instance, remove its public IP and assign a private IP instead:

gcloud beta sql instances patch INSTANCE_NAME --network=VPC_NETWORK_NAME --no-assign-ip

To prevent new SQL instances from getting configured with public IP addresses, set up a Restrict Public IP access on Cloud SQL instances Organization policy.
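Before patching, you may want a list of the instances that currently expose a public address. This sketch assumes a JSON listing where each instance has an `ipAddresses` array and where an entry of type `PRIMARY` denotes the public address while `PRIVATE` denotes the VPC address; verify that assumption against your own output before relying on it. All names below are hypothetical.

```python
def instances_with_public_ip(instances):
    """Return names of instances that list an address of type PRIMARY."""
    return [
        inst["name"]
        for inst in instances
        if any(addr.get("type") == "PRIMARY" for addr in inst.get("ipAddresses", []))
    ]


# Hypothetical listing: one private-only instance, one with a public address.
instances = [
    {"name": "orders-db", "ipAddresses": [{"type": "PRIVATE", "ipAddress": "10.0.0.5"}]},
    {"name": "legacy-db", "ipAddresses": [
        {"type": "PRIMARY", "ipAddress": "203.0.113.10"},
        {"type": "PRIVATE", "ipAddress": "10.0.0.6"},
    ]},
]

print(instances_with_public_ip(instances))
```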

23. Ensure that Cloud SQL database instances are configured with automated backups

Backups provide a way to restore a Cloud SQL instance to retrieve lost data or recover from problems with that instance. Automatic backups should be set up for all instances that contain data needing to be protected from loss or damage. This recommendation applies to instances of SQL Server, PostgreSQL, MySQL generation 1, and MySQL generation 2.

List all Cloud SQL database instances using the following command:

gcloud sql instances list

Enable Automated backups for every Cloud SQL database instance:

gcloud sql instances patch INSTANCE_NAME --backup-start-time [HH:MM]

The backup-start-time parameter is specified in 24-hour time, in the UTC±00 time zone, and specifies the start of a 4-hour backup window. Backups can start any time during this backup window.

By default, automated backups are not configured for Cloud SQL instances. Data backup is not possible on any Cloud SQL instance unless Automated Backup is configured.
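As a rough illustration of the two points above, the sketch below flags instances without automated backups and computes the 4-hour window implied by a given start time. It assumes a JSON instance listing where the flag is stored at `settings.backupConfiguration.enabled`. The function names and sample data are hypothetical.

```python
from datetime import datetime, timedelta


def instances_without_backups(instances):
    """Return names of instances whose automated backups are not enabled."""
    return [
        inst["name"]
        for inst in instances
        if not inst.get("settings", {}).get("backupConfiguration", {}).get("enabled")
    ]


def backup_window(start_time):
    """Return (start, end) of the 4-hour backup window as HH:MM strings in UTC."""
    start = datetime.strptime(start_time, "%H:%M")
    end = start + timedelta(hours=4)  # the window may wrap past midnight
    return start.strftime("%H:%M"), end.strftime("%H:%M")


# Hypothetical listing for illustration.
instances = [
    {"name": "orders-db", "settings": {"backupConfiguration": {"enabled": True}}},
    {"name": "scratch-db", "settings": {}},
]

print(instances_without_backups(instances))
print(backup_window("23:00"))
```

With a start time of 23:00, the window runs until 03:00 UTC the next day, which is worth keeping in mind when choosing a time away from peak load.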

There are other Cloud SQL best practices to take into account that are specific for MySQL, PostgreSQL, or SQL Server, but the aforementioned four are arguably the most important.

BigQuery

BigQuery is a serverless, highly scalable, and cost-effective cloud data warehouse with an in-memory BI Engine and machine learning built in. As in the other sections, here is a GCP security best practice focused on BigQuery:

24. Ensure that BigQuery datasets are not anonymously or publicly accessible

You don't want to allow anonymous or public access in your BigQuery dataset's IAM policies. Granting permissions to allUsers or allAuthenticatedUsers lets anyone access the dataset. Such access may not be desirable if sensitive data is stored in the dataset. Therefore, make sure that anonymous and/or public access to the dataset is not allowed.

To do this, you will need to edit the dataset information. First, retrieve that information into your local filesystem:

bq show --format=prettyjson PROJECT_ID:DATASET_NAME > dataset_info.json

Now, in the access section of dataset_info.json, update the dataset information to remove all roles containing allUsers or allAuthenticatedUsers.

Finally, update the dataset:

bq update --source=dataset_info.json PROJECT_ID:DATASET_NAME
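The manual edit of the access section can also be scripted. The following is a minimal sketch, assuming the exported JSON has a top-level `access` list and that public principals appear as string values such as `allUsers` or `allAuthenticatedUsers` inside an entry. The exact field names (`specialGroup`, `iamMember`) in the sample are assumptions about the export format, and the function name is hypothetical.

```python
PUBLIC_PRINCIPALS = {"allUsers", "allAuthenticatedUsers"}


def remove_public_access(dataset_info):
    """Return a copy of the dataset info without public or anonymous access entries."""
    cleaned = dict(dataset_info)
    cleaned["access"] = [
        entry
        for entry in dataset_info.get("access", [])
        # Drop the entry if any of its string values names a public principal.
        if not any(
            value in PUBLIC_PRINCIPALS
            for value in entry.values()
            if isinstance(value, str)
        )
    ]
    return cleaned


# Hypothetical dataset info, shaped like the exported dataset_info.json.
dataset_info = {
    "datasetReference": {"projectId": "my-project", "datasetId": "sales"},
    "access": [
        {"role": "OWNER", "specialGroup": "projectOwners"},
        {"role": "READER", "specialGroup": "allAuthenticatedUsers"},
        {"role": "READER", "iamMember": "allUsers"},
    ],
}

print(remove_public_access(dataset_info)["access"])
```

To apply it to the real file, you could load `dataset_info.json` with `json.load`, pass the result through the function, and write it back with `json.dump` before running the update command.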

You can prevent BigQuery datasets from becoming publicly accessible by setting up the Domain restricted sharing organization policy.

Compliance Standards & Benchmarks

Setting up all the detection rules and maintaining your GCP environment to keep it secure is an ongoing effort that can take a big chunk of your time, even more so if you don't have some kind of roadmap to guide you during this continuous work.

You will be better off following the compliance standard(s) relevant to your industry, since they provide all the requirements needed to effectively secure your cloud environment.

Because securing your infrastructure and complying with a security standard are ongoing tasks, you might also want to regularly run benchmarks, such as the CIS Google Cloud Platform Foundation Benchmark, which will audit your system and report any nonconformity it finds.

Conclusion

Jumping to the cloud opens a new world of possibilities, but it also requires learning a new set of Google Cloud Platform security best practices.

Each new cloud service you leverage has its own set of potential dangers you need to be aware of.

Luckily, cloud native security tools like Falco and Cloud Custodian can guide you through these Google Cloud Platform security best practices, and help you meet your compliance requirements.
