
Etcd is the backend store for all Kubernetes cluster data, which makes it a key component of the Kubernetes infrastructure. Monitoring etcd properly is vital: if you don't observe the Kubernetes etcd, you probably won't be able to prevent issues either, and you can get into some serious trouble.

If the etcd quorum is lost and the etcd cluster consequently fails, you won’t be able to make changes to the current state of Kubernetes: no new Pods will be scheduled, among many other problems. High latency between the etcd nodes, disk performance issues, and high throughput are some of the most common root causes of etcd availability problems.

How to monitor etcd is a hot topic for companies on their Kubernetes and cloud native journey. Fortunately, the Kubernetes etcd is instrumented to expose its metrics out of the box. From either a DIY Prometheus instance or a managed Prometheus service, you can scrape the etcd metrics and take control of one of the most critical components in your Kubernetes cluster.

If you want to learn how to monitor etcd, which metrics you should check and what they mean, and how to prevent issues with the Kubernetes etcd…

You are in the right place 👌. Keep reading!

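This article will cover the following topics:

- What is etcd?

- How to monitor etcd

- How to configure Prometheus to scrape etcd metrics

- Monitoring etcd: Which metrics should you check?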

What is etcd?

Etcd is an open source, distributed key-value database that provides a reliable way to store data that needs to be accessed by distributed systems or clusters of machines. In Kubernetes, etcd is the cornerstone of the cluster. Kubernetes stores its REST API objects in etcd under the /registry key prefix: Pods, Services, Ingresses, and everything else are tracked and stored in etcd as key-value pairs.

So, what would happen if the Kubernetes etcd goes down?

If etcd loses quorum and a new leader can’t be elected, the Pods and workloads that are already running keep running in your Kubernetes cluster. However, no new changes can be made; not even new Pods can be scheduled.

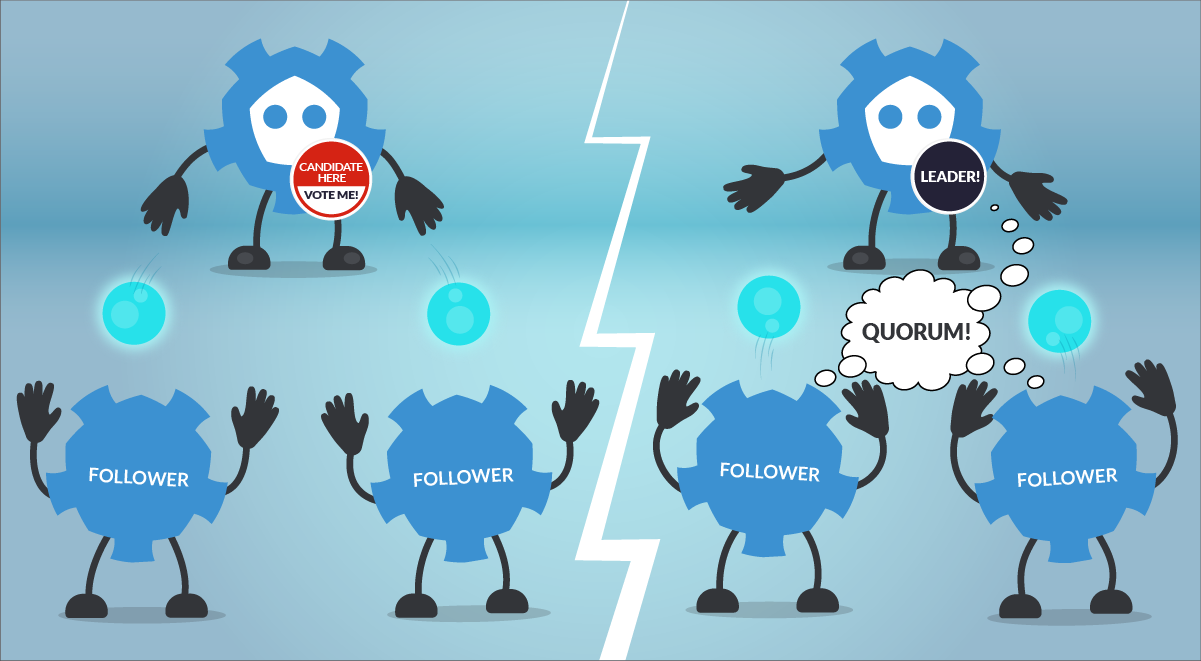

For leader election and consensus, etcd uses the Raft algorithm, a method for reaching agreement across a distributed, fault-tolerant set of cluster nodes. Basically, this is what you need to know about how Raft elects a leader in etcd:

Node status can be one of the following:

- Follower

- Candidate

- Leader

How does the election process work?

- If a Follower stops hearing from the current Leader, it becomes a Candidate.

- A Candidate that receives votes from a majority of the nodes becomes the new Leader.

- Updates to registry values (commits) always go through the Leader. However, clients don’t know which node is the Leader: if a Follower receives a request that needs consensus, it automatically forwards the request to the Leader.

- Once the Leader has received acknowledgments from enough Followers to form a majority of the cluster, the new value is considered “committed”.

- The cluster survives as long as a majority of the nodes remain alive.

Perhaps the most remarkable features of etcd are:

- The straightforward way of accessing the service using REST-like HTTP calls.

- Its master-master protocol, which automatically elects the cluster Leader and provides a fallback mechanism to switch this role if needed.

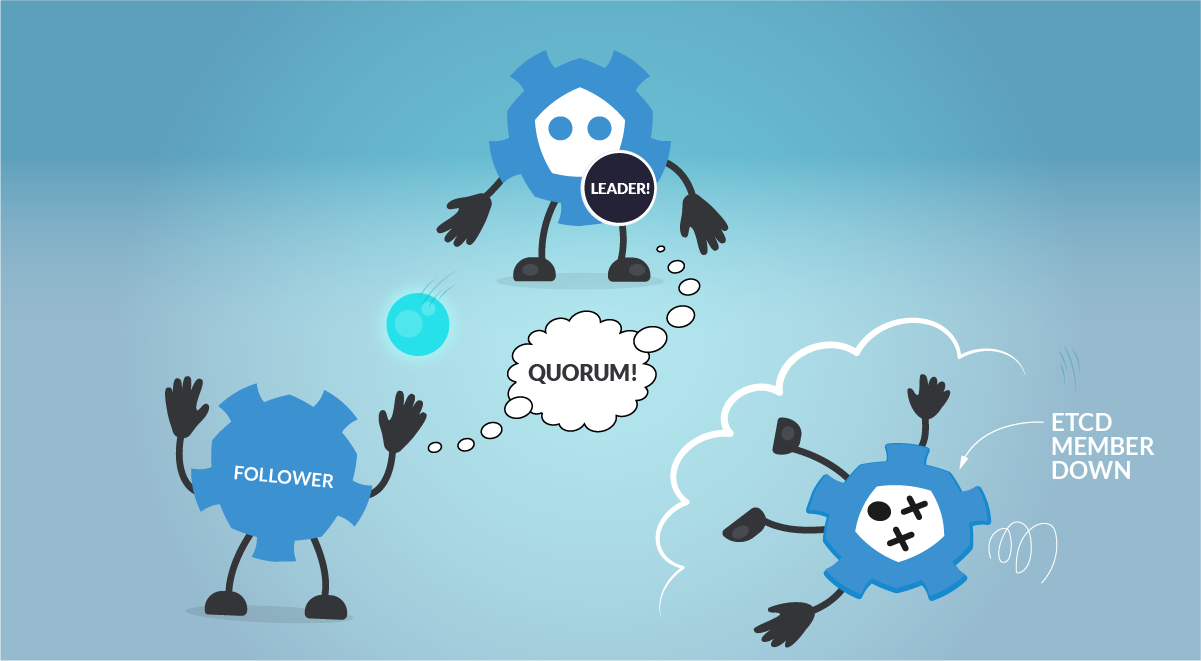

When running etcd to store data for your distributed systems or Kubernetes clusters, it is recommended to use an odd number of nodes. Quorum requires a majority of the nodes in the cluster to agree on updates to the cluster state. For a cluster of n nodes, quorum is floor(n/2) + 1 nodes, that is, half the nodes rounded down, plus one. For example:

- For a 3-node cluster, quorum is 2 nodes (failure tolerance: 1 node).

- For a 4-node cluster, quorum is 3 nodes (failure tolerance: 1 node).

- For a 5-node cluster, quorum is 3 nodes (failure tolerance: 2 nodes).
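The quorum arithmetic above can be checked with a few lines of Python. This is a minimal sketch of the majority formula using integer division, not code taken from etcd; the function names are illustrative.

```python
def quorum(n: int) -> int:
    """Smallest majority of an n-member cluster: floor(n/2) + 1."""
    if n < 1:
        raise ValueError("a cluster needs at least one member")
    return n // 2 + 1


def failure_tolerance(n: int) -> int:
    """How many members can fail while a majority is still alive."""
    return n - quorum(n)


if __name__ == "__main__":
    for size in range(1, 8):
        print(f"{size} members: quorum={quorum(size)}, "
              f"tolerates {failure_tolerance(size)} failure(s)")
```

Running it shows that growing from 3 to 4 members, or from 5 to 6, raises the quorum without raising the failure tolerance, which is why odd sizes are preferred.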

An odd-size cluster tolerates the same number of failures as the next even size up, but with one node fewer. In addition, if a network partition splits the cluster in two, an odd number of nodes guarantees that one side always keeps a majority, avoiding a stalemate where neither half can reach quorum. This way, the etcd cluster can keep operating and remain the source of truth when the network partition is resolved.

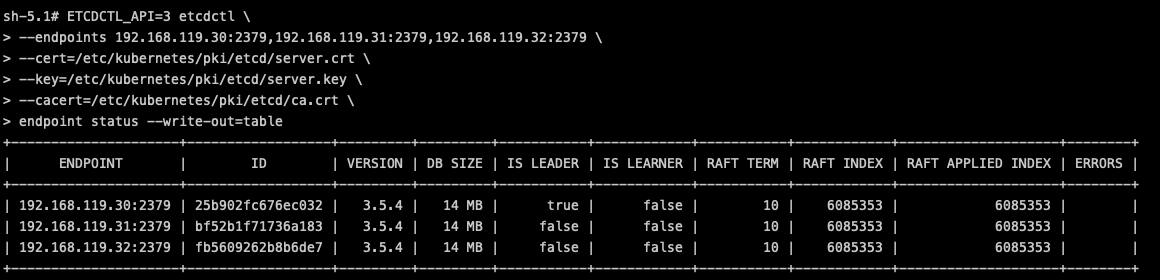

Lastly, let’s see how to operate etcd with the etcdctl CLI tool. It is useful, for example, to check the health of the etcd members with etcdctl endpoint health.

You can even browse the data stored in the etcd backend directly, for example by listing the keys under the /registry prefix.
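The two etcdctl operations just described can be scripted. The sketch below only assembles etcdctl command lines and runs them with subprocess; the certificate paths are assumptions modeled on a kubeadm control plane node, and the endpoint assumes you run it on that node. The subcommands and flags (endpoint health, member list, get --prefix --keys-only, --cacert, --cert, --key) are standard etcdctl v3 options.

```python
import os
import subprocess

# Assumed kubeadm-style locations; adjust to where your etcd certificates live.
CA = "/etc/kubernetes/pki/etcd/ca.crt"
CERT = "/etc/kubernetes/pki/etcd/server.crt"
KEY = "/etc/kubernetes/pki/etcd/server.key"
ENDPOINT = "https://127.0.0.1:2379"  # assumes etcd listens on localhost on this node


def etcdctl_args(*subcommand: str) -> list[str]:
    """Build an etcdctl command line that authenticates with TLS client certificates."""
    return [
        "etcdctl",
        f"--endpoints={ENDPOINT}",
        f"--cacert={CA}",
        f"--cert={CERT}",
        f"--key={KEY}",
        *subcommand,
    ]


# Health of every member, a member table, and a keys-only listing of the registry.
HEALTH = etcdctl_args("endpoint", "health", "--cluster")
MEMBERS = etcdctl_args("member", "list", "--write-out=table")
REGISTRY_KEYS = etcdctl_args("get", "/registry", "--prefix", "--keys-only")


def run(args: list[str]) -> str:
    """Run etcdctl with the v3 API selected and return its standard output."""
    env = dict(os.environ, ETCDCTL_API="3")
    result = subprocess.run(args, env=env, capture_output=True, text=True, check=True)
    return result.stdout
```

On a control plane node, run(HEALTH) returns etcdctl's health report and run(REGISTRY_KEYS) lists the keys stored under /registry. Listing keys only avoids dumping object values, which may contain sensitive data.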

How to monitor etcd

As mentioned earlier, etcd is instrumented and exposes a metrics endpoint that is accessible on every master host. You can scrape metrics from this endpoint without scripts or additional exporters: just curl the /metrics endpoint to get all of the Kubernetes etcd metrics data.

This endpoint is secured, though. The official port for etcd client requests, the same one that you need to get access to the /metrics endpoint, is 2379. So, if you want to scrape metrics from the etcd /metrics endpoint, you need to have access to the Kubernetes etcd client port and possess the etcd client certificates.

Let’s check the YAML definition of one of the Kubernetes etcd Pods, specifically the endpoint ports that etcd uses.

Here, you can see the --listen-client-urls parameter, which identifies the endpoint you should use to reach /metrics, and the --cert-file and --key-file parameters, which point to the certificate and key you need to access this secured endpoint.
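If you prefer to read these values programmatically, the sketch below parses the etcd container's command list, for example as loaded from the Pod spec with a YAML parser. The sample command is hypothetical, modeled on a kubeadm static Pod, and only flags written in --flag=value form are handled.

```python
# Hypothetical command list, shaped like the one in a kubeadm etcd static Pod.
SAMPLE_COMMAND = [
    "etcd",
    "--advertise-client-urls=https://10.0.0.10:2379",
    "--cert-file=/etc/kubernetes/pki/etcd/server.crt",
    "--key-file=/etc/kubernetes/pki/etcd/server.key",
    "--listen-client-urls=https://127.0.0.1:2379,https://10.0.0.10:2379",
    "--trusted-ca-file=/etc/kubernetes/pki/etcd/ca.crt",
]


def etcd_flags(command: list[str]) -> dict[str, str]:
    """Turn ["etcd", "--name=x"] into {"name": "x"}; space-separated flags are ignored."""
    flags = {}
    for arg in command:
        if arg.startswith("--") and "=" in arg:
            key, value = arg[2:].split("=", 1)
            flags[key] = value
    return flags


def metrics_access(command: list[str]) -> dict[str, object]:
    """Derive the HTTPS /metrics URLs and the TLS files from the etcd flags."""
    flags = etcd_flags(command)
    urls = flags.get("listen-client-urls", "").split(",")
    return {
        "metrics_urls": [u.rstrip("/") + "/metrics" for u in urls if u.startswith("https://")],
        "cert_file": flags.get("cert-file"),
        "key_file": flags.get("key-file"),
        "trusted_ca_file": flags.get("trusted-ca-file"),
    }
```

For the sample above, metrics_access returns https://127.0.0.1:2379/metrics and https://10.0.0.10:2379/metrics together with the certificate, key, and CA paths.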

Getting access to the endpoint manually

If you try to access the /metrics endpoint without these certificates, you’ll soon realize that it is not possible to reach the endpoint.

What do you need to get your metrics from etcd then?

It’s simple: grab the etcd certificates from your master node and curl the Kubernetes etcd endpoint with them.

Note: For the server certificate to be trusted, you have to pass the etcd CA certificate (ca.crt) to curl with --cacert, or use -k to skip the validation.
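The same manual check can be done from Python's standard library. This is a sketch, not an official client: the ssl context plays the role of curl's --cacert, --cert, and --key, and the parser is deliberately minimal, assuming one "series value" pair per line with no timestamps (which is how etcd exposes its metrics). File paths and the URL are whatever you found in the etcd Pod definition.

```python
import ssl
import urllib.request


def fetch_metrics(url: str, ca: str, cert: str, key: str, timeout: float = 10.0) -> str:
    """GET the etcd /metrics endpoint using mutual TLS."""
    context = ssl.create_default_context(cafile=ca)      # trust the etcd CA, like curl --cacert
    context.load_cert_chain(certfile=cert, keyfile=key)  # present a client cert, like --cert/--key
    with urllib.request.urlopen(url, context=context, timeout=timeout) as resp:
        return resp.read().decode("utf-8")


def parse_metrics(text: str) -> dict[str, float]:
    """Map each 'name{labels}' series to its value; HELP/TYPE comments are skipped."""
    samples = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        series, _, value = line.rpartition(" ")  # assumes no trailing timestamp
        samples[series] = float(value)           # float() also accepts "+Inf" and "NaN"
    return samples
```

A typical call is parse_metrics(fetch_metrics("https://127.0.0.1:2379/metrics", ca, cert, key))["etcd_server_has_leader"], run on a master node.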

How to configure Prometheus to scrape etcd metrics

Once you have verified that the Kubernetes etcd metrics endpoint is accessible and that you can get the etcd metrics manually, it’s time to configure your Prometheus instance, or your managed Prometheus service, to scrape the etcd metrics.

First, create a new secret in the monitoring namespace. This secret holds the etcd certificates (the same ones used in the previous section) that you’ll need to scrape the etcd metrics endpoint. Then, patch the prometheus-server Deployment to mount the secret you just created.
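Both objects can be generated as plain dictionaries and serialized to JSON, which kubectl accepts. In this sketch the Secret name etcd-certs, the mount path /etc/prometheus/etcd, and the container name prometheus-server are assumptions; use the names from your own deployment. The Secret is equivalent to what kubectl create secret generic ... --from-file would produce.

```python
import base64


def etcd_certs_secret(files: dict[str, bytes], name: str = "etcd-certs",
                      namespace: str = "monitoring") -> dict:
    """Opaque Secret; Kubernetes expects base64-encoded values under 'data'."""
    return {
        "apiVersion": "v1",
        "kind": "Secret",
        "metadata": {"name": name, "namespace": namespace},
        "type": "Opaque",
        "data": {key: base64.b64encode(content).decode("ascii")
                 for key, content in files.items()},
    }


def mount_patch(container: str, secret: str = "etcd-certs",
                path: str = "/etc/prometheus/etcd") -> dict:
    """Strategic merge patch that mounts the Secret read-only into one container."""
    return {
        "spec": {
            "template": {
                "spec": {
                    "volumes": [{"name": "etcd-certs", "secret": {"secretName": secret}}],
                    "containers": [{
                        "name": container,  # containers are merged by name
                        "volumeMounts": [
                            {"name": "etcd-certs", "mountPath": path, "readOnly": True}
                        ],
                    }],
                }
            }
        }
    }
```

Serialize the patch with json.dumps and apply it with kubectl patch deployment prometheus-server -n monitoring -p '<json>'. Keep the generated Secret out of version control, since it carries etcd credentials.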

Disclaimer: etcd is the core of any Kubernetes cluster. If you don’t handle the certificates carefully, you can expose the entire cluster and make it a target.

Assuming you already have a DIY Prometheus instance or a managed Prometheus service, get the current prometheus-server ConfigMap and add a new job for the etcd metrics under its scrape_configs section.
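The etcd job boils down to an HTTPS scrape with client certificates. The sketch below builds it as a Python dictionary using standard Prometheus scrape_config fields (job_name, scheme, tls_config with ca_file, cert_file, key_file, and static_configs). The mount directory, the file names inside it, and the target addresses are hypothetical; static targets are used here only for simplicity.

```python
import json

CERT_DIR = "/etc/prometheus/etcd"  # assumed path where the etcd certificates are mounted


def etcd_scrape_job(targets: list[str]) -> dict:
    """One entry for the scrape_configs list of the Prometheus configuration."""
    return {
        "job_name": "etcd",
        "scheme": "https",
        "metrics_path": "/metrics",
        "tls_config": {
            "ca_file": f"{CERT_DIR}/ca.crt",
            "cert_file": f"{CERT_DIR}/server.crt",
            "key_file": f"{CERT_DIR}/server.key",
        },
        "static_configs": [{"targets": targets}],
    }


if __name__ == "__main__":
    # Hypothetical master node addresses; 2379 is the etcd client port.
    job = etcd_scrape_job(["10.0.0.10:2379", "10.0.0.11:2379", "10.0.0.12:2379"])
    print(json.dumps({"scrape_configs": [job]}, indent=2))
```

Convert the printed structure to YAML and merge it under scrape_configs in the ConfigMap. Naming the job etcd also makes queries such as up{job="etcd"} predictable.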

Replace the ConfigMap with the new one that includes the etcd endpoint and recreate the Prometheus pod to apply this new configuration.

Now, you can check and query any of the Kubernetes etcd metrics scraped from the Prometheus server.
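Besides the Prometheus UI, the metrics can be queried over the Prometheus HTTP API, whose /api/v1/query endpoint returns instant vectors as JSON. This sketch keys each result by its instance label; the Prometheus address in the usage note is hypothetical.

```python
import json
import urllib.parse
import urllib.request


def query_url(prometheus: str, promql: str) -> str:
    """URL for an instant query against the Prometheus HTTP API."""
    return f"{prometheus.rstrip('/')}/api/v1/query?" + urllib.parse.urlencode({"query": promql})


def vector_values(response: dict) -> dict[str, float]:
    """Map each series' instance label to its value in an instant-vector response."""
    if response.get("status") != "success":
        raise RuntimeError(response.get("error", "query failed"))
    return {
        item["metric"].get("instance", ""): float(item["value"][1])  # value is [timestamp, "string"]
        for item in response["data"]["result"]
    }


def instant_query(prometheus: str, promql: str) -> dict[str, float]:
    with urllib.request.urlopen(query_url(prometheus, promql), timeout=10) as resp:
        return vector_values(json.load(resp))
```

For example, instant_query("http://prometheus:9090", "etcd_server_has_leader") returns one value per etcd member.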

Monitoring etcd: Which metrics should you check?

Let’s go with the most important etcd metrics you should monitor in your Kubernetes environment.

Disclaimer: etcd metrics might differ between Kubernetes versions. Here, we used Kubernetes 1.25 and etcd 3.5. You can check the latest metrics available for etcd in the official etcd documentation.

Etcd server metrics

Below is a summary of the key etcd server metrics. These give you visibility into the availability of the etcd cluster.

etcd node availability: In a cluster, a node may go down suddenly for any reason, so it is always a good idea to monitor the availability of the nodes that make up the cluster. A PromQL query over the up metric of your etcd scrape job counts how many nodes are active in your cluster.
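A minimal sketch of that count, assuming the scrape job is named etcd and every member is configured as a target. Note that up reflects whether Prometheus could scrape a target, which is a proxy for member availability rather than a full health check. The function also compares the count with the quorum formula from earlier in this article.

```python
# PromQL counterpart, assuming the scrape job is named "etcd".
AVAILABILITY_QUERY = 'sum(up{job="etcd"})'


def availability(up_by_instance: dict[str, float]) -> tuple[int, int, bool]:
    """Return (members up, members scraped, whether the members up still form a quorum).

    up_by_instance maps each scrape target to its "up" value:
    1 means the last scrape succeeded, 0 means it failed.
    """
    total = len(up_by_instance)
    alive = sum(1 for value in up_by_instance.values() if value == 1)
    return alive, total, alive >= total // 2 + 1
```

With three members and one failed scrape, availability returns (2, 3, True); with two failed scrapes, the remaining member no longer forms a quorum.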

etcd_server_has_leader: This metric indicates whether the etcd nodes have a leader. If the value is 1, there is a leader in the cluster. If the value is 0, no leader has been elected in the cluster, and etcd is not operational.


etcd_server_leader_changes_seen_total: In an etcd cluster, the leader can change automatically thanks to the Raft algorithm. But frequent leader changes are not good for the normal operation of the etcd cluster: if this metric grows continuously over time, it could indicate a performance or network problem in the cluster.

You can check how many times the etcd leader changed within the last hour. If this number keeps growing over time, it points to the same kind of performance or network problems.
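In PromQL, this check is typically written as increase(etcd_server_leader_changes_seen_total[1h]). The Python sketch below shows the core idea behind increase() on raw (timestamp, value) samples: sum the deltas and treat any drop as a counter reset, for example after an etcd restart. Unlike PromQL, it does not extrapolate to the edges of the window, so results can differ slightly.

```python
def counter_increase(samples: list[tuple[float, float]]) -> float:
    """Increase of a monotonic counter across time-ordered (timestamp, value) samples.

    A value lower than the previous one is treated as a reset to zero,
    so the new value itself counts as the increase since the reset.
    """
    total = 0.0
    for (_, prev), (_, cur) in zip(samples, samples[1:]):
        total += cur - prev if cur >= prev else cur
    return total
```

For samples 5, 6, 6, 1, 3 (with a restart between 6 and 1), the increase is 4. The same function applies to etcd_server_proposals_failed_total when you want to count failed proposals within the last hour.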

etcd_server_proposals_committed_total: A consensus proposal is a request, such as a write request to add a new configuration or track a new state; for example, a change to a ConfigMap or any other Kubernetes object. This metric should increase over time, as it indicates that the cluster is healthy and committing changes. Different nodes may report different values; this is expected and happens for various reasons, such as having to recover from peers after a restart, or being the leader and therefore having the most commits. It is important to monitor this metric across all the etcd members, since a large, consistent lag between a member and its leader may indicate that the node is unhealthy or having performance issues.

etcd_server_proposals_applied_total: This metric records the total number of proposals applied. This number may differ from etcd_server_proposals_committed_total, since etcd applies every commit asynchronously. The difference should be small. If it isn’t, or if it grows over time, the etcd server may be overloaded.
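Both comparisons described for these two metrics can be computed from one snapshot of the counters per member. This sketch takes the values as plain dictionaries keyed by a member name (the names are hypothetical) and needs to know which member is the leader. In PromQL, the per-member apply gap is simply etcd_server_proposals_committed_total - etcd_server_proposals_applied_total.

```python
def proposal_gaps(committed: dict[str, float], applied: dict[str, float],
                  leader: str) -> dict[str, tuple[float, float]]:
    """Per member: (commits behind the leader, committed-but-not-yet-applied proposals)."""
    leader_commits = committed[leader]
    return {
        member: (leader_commits - commits, commits - applied.get(member, 0.0))
        for member, commits in committed.items()
    }
```

A member whose first number stays large across snapshots shows the consistent lag behind the leader described above; a second number that keeps growing points to a member that cannot apply commits fast enough.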

etcd_server_proposals_pending: It indicates how many requests are queued to commit. A high number or a value growing over time may indicate there is a high load or that the etcd member cannot commit changes.

etcd_server_proposals_failed_total: The total number of failed proposals. Proposals usually fail because of a leader election process (while there is no leader, changes can’t be committed) or because of downtime caused by a loss of quorum.

You can monitor how many proposals failed within the last hour.

Etcd disk metrics

Etcd is a database that stores the status and definitions of Kubernetes objects, configurations, etc. As a persistent database, it needs to write this data to storage. Monitoring the etcd disk metrics is key to understanding how etcd is performing and to anticipating any problems that may come up.

High latencies in either of the two metrics below may indicate disk issues, and can cause high latency on etcd requests or even make the cluster unstable and/or unavailable.

etcd_disk_wal_fsync_duration_seconds_bucket: The latency of persisting log entries to the write-ahead log (WAL) on disk with an fsync operation before applying them.

etcd_disk_backend_commit_duration_seconds_bucket: The latency of committing an incremental snapshot of etcd’s most recent changes to disk.

To find out whether the backend commit latency is good enough, you can visualize it as a histogram. A PromQL query that applies histogram_quantile with 0.99 to this metric’s buckets returns the latency within which 99% of requests complete.
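A common form of that query is histogram_quantile(0.99, sum(rate(etcd_disk_backend_commit_duration_seconds_bucket[5m])) by (instance, le)); the 5m window is an assumption you can tune. To show what histogram_quantile computes, here is a simplified Python version of its estimate: find the cumulative bucket that contains the requested rank and interpolate linearly inside it. It assumes sorted buckets with positive upper bounds ending in +Inf, and the sample bucket counts are made up.

```python
import math


def histogram_quantile(q: float, buckets: list[tuple[float, float]]) -> float:
    """Estimate the q-quantile from cumulative (le, count) buckets, PromQL-style."""
    if not 0 < q <= 1:
        raise ValueError("q must be in (0, 1]")
    total = buckets[-1][1]  # the +Inf bucket holds the total number of observations
    if total == 0:
        return math.nan
    rank = q * total
    prev_le, prev_count = 0.0, 0.0
    for le, count in buckets:
        if count >= rank:
            if math.isinf(le):
                return prev_le  # rank falls in the +Inf bucket: report the highest finite bound
            # Assume observations are spread evenly inside the bucket.
            return prev_le + (le - prev_le) * (rank - prev_count) / (count - prev_count)
        prev_le, prev_count = le, count
    return math.nan


# Made-up cumulative counts for buckets up to 1, 2, 4, 8, and 16 ms.
SAMPLE = [(0.001, 0), (0.002, 40), (0.004, 80), (0.008, 95), (0.016, 100), (math.inf, 100)]
```

For SAMPLE, the estimated p99 is about 0.0144 seconds (14.4 ms) and the median is 2.5 ms. The estimate is only as precise as the bucket boundaries allow.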

Etcd network metrics

This section describes the most useful metrics for monitoring the network status and activity of your etcd cluster.

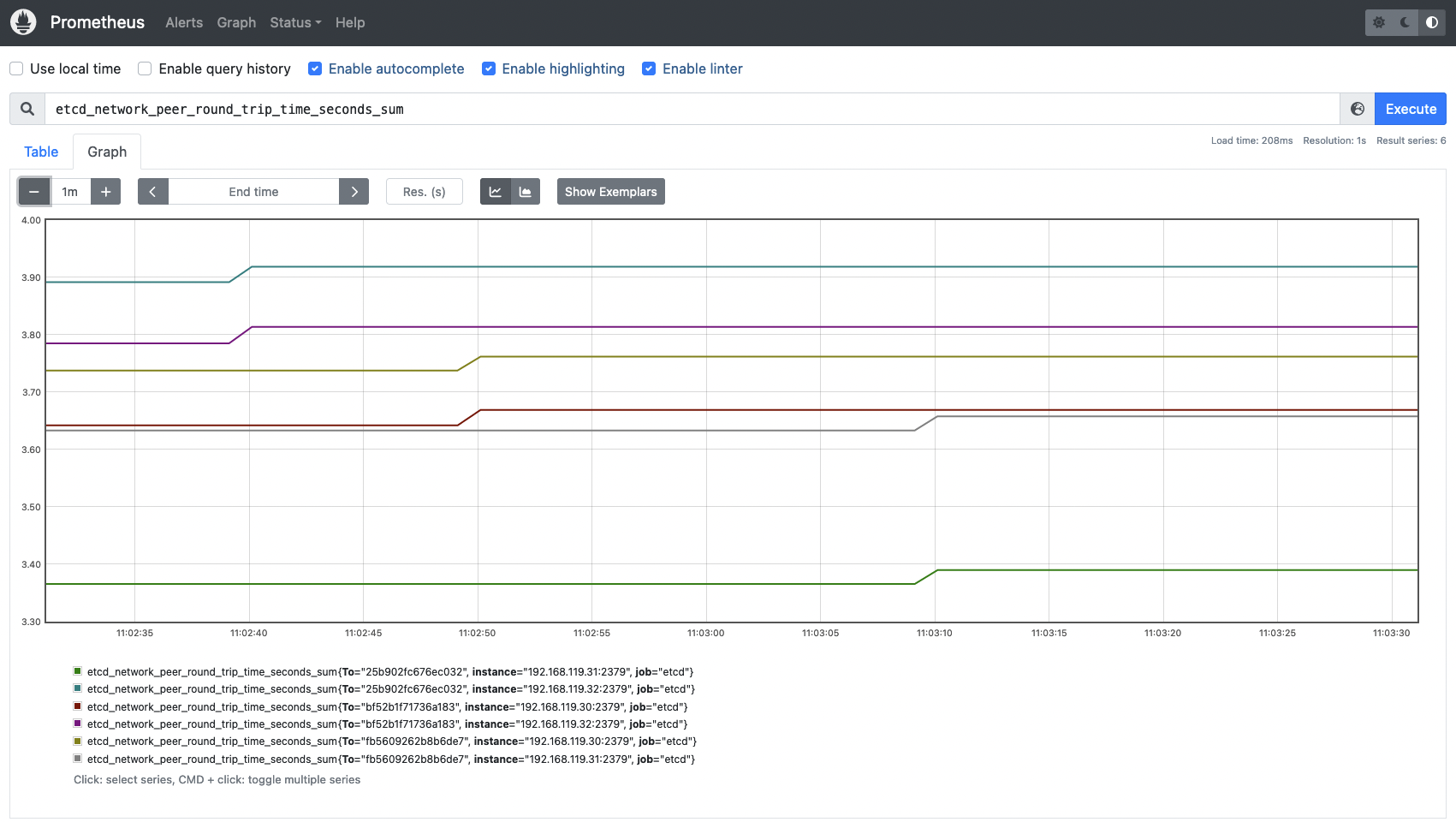

etcd_network_peer_round_trip_time_seconds_bucket: This metric is key to measuring how your network and etcd nodes are performing. It records the RTT (round trip time) latency for etcd to replicate a request between etcd members. A high latency, or latency that grows over time, may indicate network issues, which can cause serious trouble with etcd requests and even a loss of quorum. There is one histogram per peer-to-peer connection. This value should not exceed 50ms (0.050s).

To check whether the RTT latencies between etcd nodes are good enough, run a histogram_quantile query over this metric and visualize the data as a histogram.
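Once you have a p99 RTT per peer link, for example from histogram_quantile(0.99, sum(rate(etcd_network_peer_round_trip_time_seconds_bucket[5m])) by (instance, To, le)), the 50ms guideline becomes a simple filter. The label names instance and To are assumptions here, so check them against your own /metrics output; the member names in the usage note are hypothetical.

```python
RTT_THRESHOLD_SECONDS = 0.050  # the 50 ms guideline from the text


def slow_peer_links(p99_rtt: dict[tuple[str, str], float],
                    threshold: float = RTT_THRESHOLD_SECONDS) -> list[tuple[str, str, float]]:
    """Return (from, to, seconds) for peer links whose p99 RTT exceeds the threshold, worst first."""
    slow = [(src, dst, rtt) for (src, dst), rtt in p99_rtt.items() if rtt > threshold]
    return sorted(slow, key=lambda item: item[2], reverse=True)
```

If every slow link involves the same member, as in ("etcd-0", "etcd-2") and ("etcd-1", "etcd-2"), the problem is more likely on that node or its network path than on the cluster network as a whole.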

etcd_network_peer_sent_failures_total: This metric provides the total number of failures when sending to peers (the other etcd members). It can help you understand whether a specific node is facing performance or network issues.

etcd_network_peer_received_failures_total: Like the previous metric, but this time it measures the total number of failures when receiving from peers.

Conclusion

Etcd is a key component of Kubernetes. Without etcd up and running, your Kubernetes cluster will not persist any change, and no new Pods will be scheduled.

Monitoring etcd is a complex topic. While etcd exposes its metrics endpoint out of the box, there is not much documentation or public knowledge about which etcd metrics matter most, or which values to expect in a healthy environment.

In this article, you have learned how to monitor your etcd cluster and how to scrape its metrics from a Prometheus instance. In terms of etcd metrics, you have learned more about what you should look for, and how to measure the availability and performance of your etcd cluster.

Monitor Kubernetes and troubleshoot issues up to 10x faster

Sysdig can help you monitor and troubleshoot your Kubernetes cluster with the out-of-the-box dashboards included in Sysdig Monitor. Advisor, a tool integrated in Sysdig Monitor, accelerates troubleshooting of your Kubernetes clusters and their workloads by up to 10x.

Sign up for a 30-day trial account and try it yourself!