What Is CNAPP? [The Definitive Guide]

CNAPP (cloud-native application protection platform) is a unified security platform that protects cloud-native applications across their full lifecycle, from development through production.

Traditional security tools address individual problems in isolation. CNAPP consolidates those functions into a single integrated platform, providing continuous visibility across code, infrastructure, workloads, and runtime.

What do people really mean by “CNAPP” today?

When vendors say "CNAPP," they don't all mean the same thing.

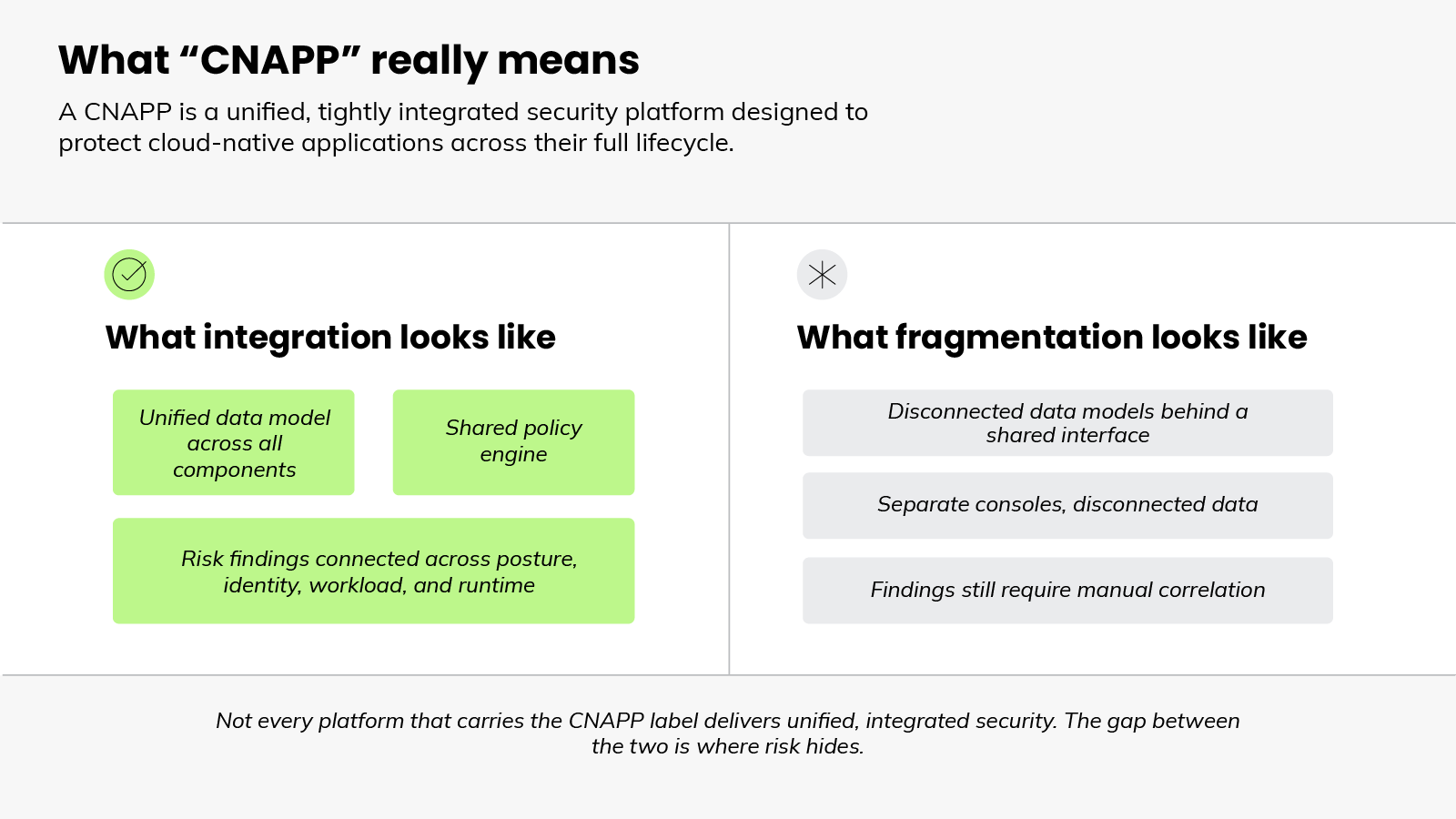

But the term has a precise definition. A true CNAPP is a unified, tightly integrated platform that connects posture, identity, workload, and runtime into a single, coherent view of risk. Not a collection of tools that happen to share a dashboard. And that distinction matters more than it might seem.

Here's why.

As the category grew over time, more vendors adopted the label without fully meeting the standard.

Some platforms are strong on posture management but thin on runtime protection. Others have added capabilities over time, but their underlying data models remain disconnected and their policy engines don't talk to each other. Teams are left connecting the dots themselves.

In other words: The name tells you less than you'd expect.

Not every platform that carries the CNAPP label connects posture, identity, vulnerability, and runtime findings into a unified risk picture. How well those capabilities are integrated varies significantly from vendor to vendor.

That's the gap worth understanding before evaluating any platform in this category.

The CNAPP label is a starting point. Not a guarantee.

What created the need for CNAPP?

CNAPP emerged because securing cloud-native environments with separate, disconnected tools stopped working.

Early cloud security focused on two distinct problems:

The first was configuration.

As organizations moved infrastructure to the cloud, misconfigurations became a leading cause of exposure. Open storage buckets, excessive permissions, and non-compliant settings were common sources of risk. Cloud security posture management (CSPM) tools emerged specifically to find and fix these issues.

The second problem was workload protection.

Containers, virtual machines, and serverless functions needed runtime monitoring and threat detection. Cloud workload protection platforms (CWPP) addressed that.

Two problems. Two tools. And on the surface, that seemed reasonable.

However: Each tool operated in its own silo. CSPM had visibility into configuration. CWPP had visibility into workloads.

Neither had full sight of both. And neither could connect what it saw to what the other was seeing.

Identity management, vulnerability scanning, and pipeline security added further categories. Each with its own tool, its own console, and its own incomplete view of risk.

That gap created real risk.

For example: An attacker exploiting a misconfigured Kubernetes cluster doesn't stay neatly within the boundaries of a posture tool. They move laterally, across services, through identities, into workloads.

A security team with disconnected tools sees fragments of that activity. Not the full picture.

Put simply: The fragmentation wasn't just an operational inconvenience. It was a structural blind spot.

And as cloud environments grew more complex — more containers, more services, more ephemeral workloads, more attack surface — the cost of that blind spot increased.

That's what created the need for CNAPP. Not convenience. Necessity.

What are the core components of a CNAPP?

A CNAPP is defined by its components, but it's only as useful as the integration between them.

Most platforms in this category include some combination of the same core capabilities. Knowing what each one does is useful. How they work together is what actually matters.

First, there's the posture domain, which is focused on what's misconfigured.

- Cloud security posture management (CSPM) continuously monitors cloud infrastructure for misconfigurations, compliance violations, and risky settings.

- Kubernetes security posture management (KSPM) does the same specifically for container orchestration environments. It’s focused on auditing cluster configurations, detecting drift, and flagging policy violations.

- Infrastructure as code (IaC) scanning extends that posture visibility earlier, into the development pipeline itself, catching misconfigurations before they ever reach a running environment.
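To make the posture idea concrete, here is a minimal sketch of rule-based misconfiguration checks, assuming resources have already been collected into plain dictionaries. The rule IDs, resource types, and field names are hypothetical, not any cloud provider's or vendor's schema. Because the rules only read configuration data, the same checks could in principle run against IaC definitions before deployment or against live resources afterward.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical resource configs; field names are illustrative only,
# not a real provider or Kubernetes schema.
Resource = dict


@dataclass
class PostureRule:
    rule_id: str
    description: str
    applies_to: str                          # resource type the rule checks
    is_violation: Callable[[Resource], bool]  # True when the config is risky


RULES = [
    PostureRule("STORAGE-PUBLIC", "Bucket allows public read access",
                "storage_bucket", lambda r: r.get("public_read", False)),
    PostureRule("STORAGE-UNENCRYPTED", "Bucket has encryption at rest disabled",
                "storage_bucket", lambda r: not r.get("encrypted", False)),
    PostureRule("K8S-PRIVILEGED", "Pod spec requests a privileged container",
                "k8s_pod",
                lambda r: any(c.get("privileged", False)
                              for c in r.get("containers", []))),
]


def scan(resources: list[Resource]) -> list[tuple[str, str]]:
    """Return (resource_id, rule_id) pairs for every violated rule."""
    findings = []
    for res in resources:
        for rule in RULES:
            if rule.applies_to == res["type"] and rule.is_violation(res):
                findings.append((res["id"], rule.rule_id))
    return findings


resources = [
    {"id": "bucket-logs", "type": "storage_bucket",
     "public_read": True, "encrypted": True},
    {"id": "pod-api", "type": "k8s_pod",
     "containers": [{"name": "api", "privileged": False}]},
]
print(scan(resources))  # [('bucket-logs', 'STORAGE-PUBLIC')]
```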

Then there are the identity and data tools, which find what's overpermissioned or exposed.

- Cloud infrastructure entitlement management (CIEM) addresses the identity function: who and what has access to which resources, whether those permissions are excessive, and where privilege creep has accumulated over time.

- Data security posture management (DSPM) extends visibility into where sensitive data lives, how it's being accessed, and whether it's adequately protected.

- As AI workloads become more prevalent, AI security posture management (AI-SPM) is emerging as an additional function. It’s centered on securing the models, pipelines, and infrastructure that underpin AI-driven applications.
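For the identity side, one common way to surface privilege creep is to compare the permissions an identity has been granted with the ones it has actually used over an observation window. The sketch below assumes both sets are already available (for example, derived from audit logs); the identities and action names are hypothetical.

```python
# Hypothetical inputs: permissions granted to each identity, and the
# permissions actually exercised during an observation window.
granted: dict[str, set[str]] = {
    "ci-deployer": {"storage:Read", "storage:Write", "iam:PassRole", "compute:Delete"},
    "reporting-job": {"storage:Read"},
}
used: dict[str, set[str]] = {
    "ci-deployer": {"storage:Read", "storage:Write"},
    "reporting-job": {"storage:Read"},
}


def excess_permissions(granted: dict[str, set[str]],
                       used: dict[str, set[str]]) -> dict[str, list[str]]:
    """Map each identity to the permissions it holds but never exercised.

    Identities with no unused permissions are omitted from the result.
    """
    result = {}
    for identity, perms in granted.items():
        unused = perms - used.get(identity, set())
        if unused:
            result[identity] = sorted(unused)
    return result


print(excess_permissions(granted, used))
# {'ci-deployer': ['compute:Delete', 'iam:PassRole']}
```

A diff like this is only a starting point: CIEM tools typically also weigh resource scope and how identities can assume one another, so an empty result is not proof of least privilege.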

Next, there's the workload domain, which surfaces what's vulnerable or actively compromised.

- Cloud workload protection platforms (CWPP) secure running workloads like virtual machines, containers, and serverless functions. This is where vulnerability scanning, threat detection, and behavioral monitoring operate against live production environments.

Finally, the detection domain covers what's actively under attack.

- Cloud detection and response (CDR) is the operational function that ties everything together. CDR monitors for active threats across cloud environments in real time. It correlates signals from workloads, identities, and infrastructure to detect attacks in progress and support rapid response.

The takeaway: The grouping matters because each of these capabilities addresses a different dimension of cloud risk. Alone, each produces a partial view. Together — when genuinely integrated — they produce a complete one.
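A minimal sketch of what integration buys, assuming each domain's findings carry a shared resource identifier: grouping by resource lets a platform notice that one asset is flagged by posture, identity, and workload checks at the same time. The finding records are hypothetical, and counting distinct domains is a deliberately crude stand-in for real correlation logic.

```python
from collections import defaultdict

# Hypothetical findings as separate tools might emit them. In an integrated
# platform they share a resource ID, which is what makes correlation possible.
findings = [
    {"source": "CSPM", "resource": "vm-web-01", "issue": "security group open to 0.0.0.0/0"},
    {"source": "CIEM", "resource": "vm-web-01", "issue": "attached role has admin rights"},
    {"source": "CWPP", "resource": "vm-web-01", "issue": "critical CVE in running package"},
    {"source": "CSPM", "resource": "bucket-archive", "issue": "versioning disabled"},
]


def by_resource(findings):
    """Group findings from every domain under the resource they affect."""
    grouped = defaultdict(list)
    for f in findings:
        grouped[f["resource"]].append(f)
    return grouped


def multi_domain_resources(findings, min_domains=2):
    """Return resources flagged by at least `min_domains` distinct sources."""
    return sorted(
        res for res, items in by_resource(findings).items()
        if len({f["source"] for f in items}) >= min_domains
    )


print(multi_domain_resources(findings))  # ['vm-web-01']
```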

What posture scanning misses (and why runtime fills the gap)

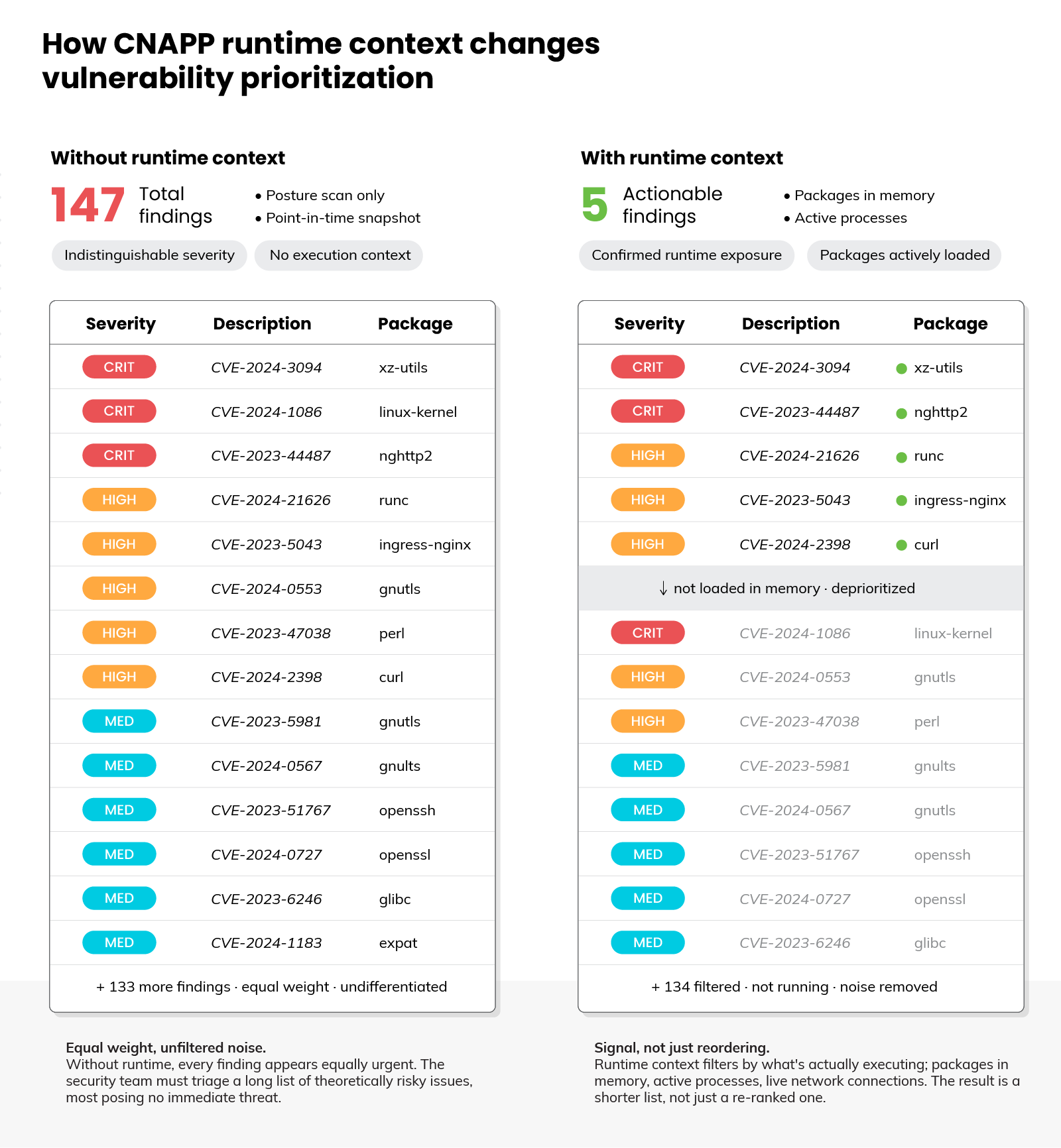

Most security teams running a CNAPP aren't short on findings. They're short on clarity about which ones actually matter.

That's largely a limitation of posture scanning.

Posture scanning is point-in-time. It checks configurations, flags misconfigurations, and measures compliance against known benchmarks.

Useful, but it produces a snapshot. And a snapshot can't tell you what's actively running, what's actively exploited, or what's actively under attack right now.

Here's why that matters.

Vulnerabilities can turn up in hundreds of packages across an environment. But only a fraction of those packages are actually loaded into memory and running. Without runtime visibility, every finding looks equally urgent. Which means security teams end up triaging a long list of theoretically risky issues, most of which pose no immediate threat.

Runtime context changes that.

When a platform can see what's actually executing (packages in use, running processes, active connections), it can filter findings through that lens. The result isn't just a reordered list. It's a shorter one. Runtime doesn't add more signal. It removes noise.
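The filtering step can be sketched as a join between scanner findings and what a runtime sensor reports as loaded, assuming both are keyed by workload and package name. The workloads, packages, and CVE labels below are placeholders, not real advisories. Filtering here means deprioritizing, not deleting: a package that isn't loaded today could be loaded tomorrow, and sensor coverage is never perfect.

```python
# Placeholder scanner output: every vulnerable package found on disk or in images.
vuln_findings = [
    {"workload": "checkout", "package": "openssl", "cve": "CVE-A", "severity": "critical"},
    {"workload": "checkout", "package": "imagemagick", "cve": "CVE-B", "severity": "critical"},
    {"workload": "search", "package": "log4j-core", "cve": "CVE-C", "severity": "high"},
]

# Placeholder runtime sensor output: packages observed loaded per workload.
loaded_packages = {
    "checkout": {"openssl", "libc"},
    "search": set(),  # sensor saw no vulnerable package in use here
}


def in_use(findings, loaded):
    """Keep only findings whose package was observed loaded in its workload."""
    return [f for f in findings
            if f["package"] in loaded.get(f["workload"], set())]


actionable = in_use(vuln_findings, loaded_packages)
print(len(vuln_findings), "->", len(actionable))  # 3 -> 1
```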

How much runtime visibility a platform delivers depends on how it's deployed.

- Agent-based instrumentation provides continuous, in-process visibility into file access, network activity, and active processes in real time.

- Agentless approaches deploy faster and provide broad coverage with little operational overhead. But they work from snapshots, not live streams.

- In practice, neither is sufficient alone. The most effective implementations use both: agentless for breadth and speed, agents for the depth that makes real-time detection reliable.

Runtime visibility is powerful, but it works best as part of a layered approach.

Shift-left security catches issues before they reach production. Runtime catches what gets through. The two approaches work together.

Not every threat originates in code, though. Some emerge from how infrastructure evolves, how permissions accumulate, and how attackers move once they're inside. That's the ground runtime covers.

How attack path analysis connects risk across your environment

Earlier we established that the value of a CNAPP comes from integration, not the component list. Attack path analysis is where that integration becomes concrete.

A CNAPP that consolidates findings without connecting them hasn't fully solved the fragmentation problem. The findings are still isolated. Just in one place instead of five.

That's where attack path analysis comes in.

Without it, a CNAPP still surfaces findings the same way disconnected tools do. Individually scored and queued, with no visibility into how they relate. Taken individually, most findings don't look critical. And that's exactly the problem.

Attackers don't operate within the boundaries of individual findings. They chain them together.

For example: A misconfiguration that seems low priority in isolation becomes critical when it's connected to an identity with excessive permissions — and that identity has access to a workload exposed to the internet. That's not three separate medium-severity findings. It’s an exploitable path to a serious breach.

A CNAPP with attack path analysis sees that chain. One without it doesn't.

The underlying mechanism is a graph model.

Cloud resources (workloads, identities, network configurations, data stores) are nodes. The relationships between them are edges.

Here's what that looks like in practice: An IAM role attached to a container → that container exposed to a public endpoint → that endpoint running a vulnerable package. Each connection is an edge. The full chain is only visible when those edges are mapped.

That chain changes the priority of everything in it. Individually, the IAM role, exposed endpoint, and vulnerable package look unremarkable. Together they form a critical path. Remove one link and the risk profile changes entirely.

The result:

Attack path analysis shifts the question from "what's wrong?" to "what's exploitable?" That's the distinction that determines where remediation effort actually has an impact.
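The graph model can be sketched with an adjacency list and a breadth-first search, assuming each directed edge means "an attacker who controls this node can reach or act through the next one." The node names are hypothetical: they roughly follow the IAM role, container, endpoint, and vulnerable package chain above, ordered from the attacker's entry point and extended to a data store. Removing a single edge shows why fixing one link can collapse the whole path.

```python
from collections import deque

# Hypothetical environment graph: nodes are cloud resources, directed edges
# mean an attacker controlling the source node can reach the target node.
edges = {
    "internet": ["public-endpoint"],
    "public-endpoint": ["vulnerable-package"],
    "vulnerable-package": ["container"],
    "container": ["iam-role"],
    "iam-role": ["customer-db"],
}


def find_path(graph, start, target):
    """Breadth-first search; return one shortest path as a list, or None."""
    parents = {start: None}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        if node == target:
            # Walk parent pointers back to the start, then reverse.
            path = []
            while node is not None:
                path.append(node)
                node = parents[node]
            return path[::-1]
        for nxt in graph.get(node, []):
            if nxt not in parents:
                parents[nxt] = node
                queue.append(nxt)
    return None


print(" -> ".join(find_path(edges, "internet", "customer-db")))

# Remediate one link (e.g. detach the over-permissioned role) and re-check.
fixed = {**edges, "container": []}
print(find_path(fixed, "internet", "customer-db"))  # None
```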

What separates a CNAPP that works from one that doesn't?

Not every CNAPP delivers equally. A few patterns tend to separate the ones that work from the ones that don't.

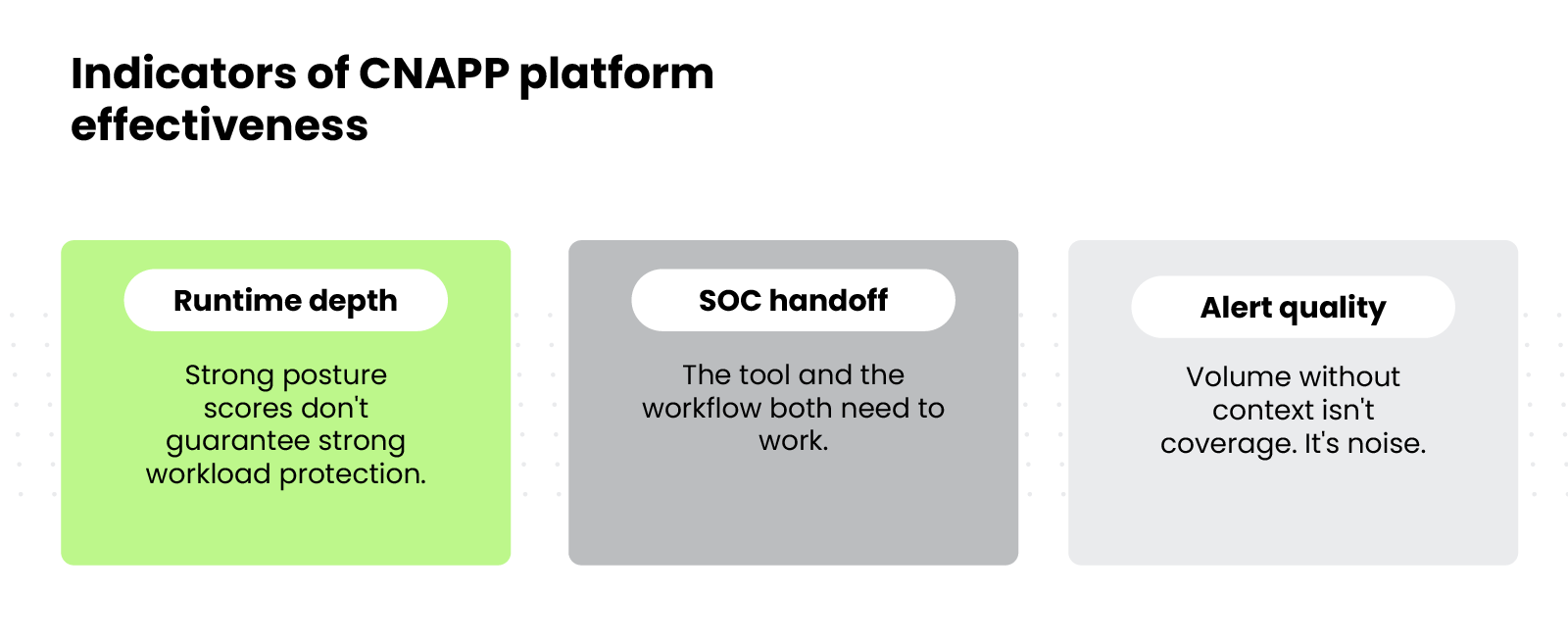

- Runtime depth

Most platforms perform reasonably well on posture. Misconfiguration detection, compliance reporting, and configuration drift are relatively mature capabilities.

Where platforms diverge is on workload security and runtime depth. Practitioner data reflects consistent dissatisfaction with workload protection capabilities even among teams satisfied with their posture tooling.

A strong CSPM score doesn't mean strong CNAPP performance.

- Alert quality

A platform that produces hundreds of daily alerts without runtime context to prioritize them isn't protecting the environment. It's creating work.

Without the context to distinguish what's actively exploitable from what's theoretically misconfigured, teams end up triaging noise rather than responding to risk.

- The SOC handoff

In most organizations, the cloud security team owns the CNAPP. The security operations center responds to runtime alerts.

If the platform doesn't connect posture findings to runtime events with shared context and clear ownership, alerts arrive in the SOC without the background needed to act on them. The tool works. The workflow doesn't.
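One way to picture the handoff problem: a runtime alert is only actionable if it arrives with the posture findings and owner for the affected resource. The sketch below assumes posture findings and an ownership map are keyed by the same resource ID the alert uses; every record and field name is hypothetical.

```python
# Hypothetical posture findings and ownership, keyed by resource ID.
posture_findings = {
    "pod-payments-7f": [
        "container runs as root",
        "service account can read all secrets in namespace",
    ],
}
owners = {"pod-payments-7f": "payments-platform-team"}


def enrich(alert, posture, owner_map):
    """Return a copy of the alert with posture context and an owner attached."""
    resource = alert["resource"]
    return {
        **alert,
        "posture_context": posture.get(resource, []),
        "owner": owner_map.get(resource, "unassigned"),
    }


alert = {"resource": "pod-payments-7f", "event": "unexpected shell spawned"}
enriched = enrich(alert, posture_findings, owners)
print(enriched["owner"], len(enriched["posture_context"]))  # payments-platform-team 2
```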

The practical question isn't whether a platform does everything well. It's whether the capabilities that matter most are genuinely integrated and whether the output reduces response time rather than extends it.

How is CNAPP evolving?

CNAPP started as a consolidation story. One platform to replace the fragmented tooling that came before it. That part hasn't changed.

What's changing is everything around it.

The teams using CNAPP are diverging. Cloud security, application security, DevOps, and security operations each carry partial responsibility for cloud-native security. And each brings different priorities to the table.

A platform that works for one team may create friction for another. That tension is reshaping how CNAPP is built, bought, and deployed.

The scope is expanding too. Application security is increasingly part of the same platform conversation. What was once a separate procurement decision is becoming part of the core CNAPP evaluation.

Plus, AI is introducing an entirely new dimension (more on that below). Models, inference pipelines, and AI-connected services are expanding the attack surface in ways that existing controls weren't designed to address.

What does a strong CNAPP program look like in practice?

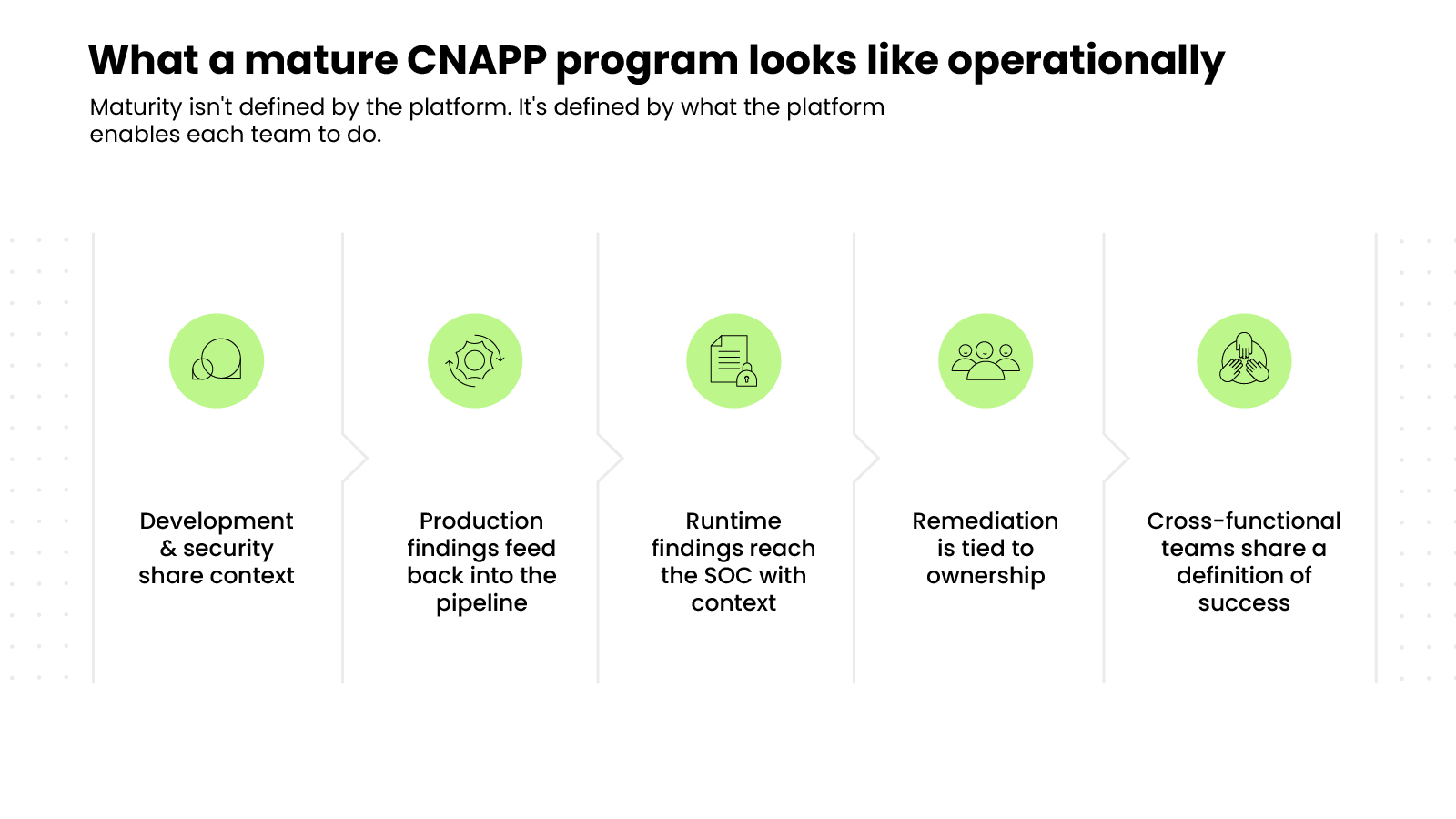

A mature CNAPP program isn't defined by which platform a team uses. It's defined by what that platform enables the team to actually do.

The clearest signal of maturity is prioritization quality.

A program that's working produces a short, high-confidence list of risks that require immediate attention. One that isn't working produces a long backlog that grows faster than it shrinks.

The volume of findings isn't the measure. The ratio of actionable findings to noise is.

Here's what that looks like operationally:

Ultimately, the platform is the foundation. The program is what's built on top of it.

How is AI impacting CNAPP?

AI is affecting CNAPP from two directions at once: the attack surface and the platform itself.

First, it's expanding the attack surface.

As organizations deploy AI models, build inference pipelines, and connect AI-powered services to production systems, those assets introduce security risks that existing controls weren't designed to address.

AI posture management has emerged as one of the most requested capabilities among cloud security practitioners, reflecting how quickly AI workloads have become a meaningful part of the cloud attack surface.

Second, AI is being embedded into CNAPP platforms themselves.

AI capabilities now power threat triage, investigation, and remediation guidance inside the platform. The most advanced implementations don't just surface findings. They correlate signals across the environment, identify likely attack paths, and suggest the fastest route to resolution.
