Extortion can be defined simply as "the practice of obtaining benefit through coercion." Data and cloud extortion schemes occur when valuable data or access is stolen by an attacker who promises to restore it in exchange for payment or other demands.

In this article, we'll cover some common and less common extortion schemes, and highlight ways to detect them and avoid falling prey to demands.

Foothold

First, an attacker needs to gain a foothold in an environment. This can be done in a variety of ways.

- Vulnerabilities, such as Log4Shell or Spring4Shell, are often exploited to gain access.

- Access keys and other tokens stored in source code can be harvested and reused.

- Social engineering can trick users into granting access or disclosing credentials and keys.

- Malware that has infected a workstation can find stored credentials.

Simple user access within the environment may be sufficient to launch the attack. In other cases, the attacker may need to escalate privileges further.

Amazon Web Services (AWS)

In AWS environments, Amazon Simple Storage Service (S3) buckets can have file encryption on a per-bucket or per-file basis. For encryption, you can use the AWS-provided key, your own custom key, or a key from another account. Generally, encryption can be changed at any time.

An attacker with access to a compromised account can abuse this by encrypting the victim's files with a key from another account, locking the victim out of their own data.

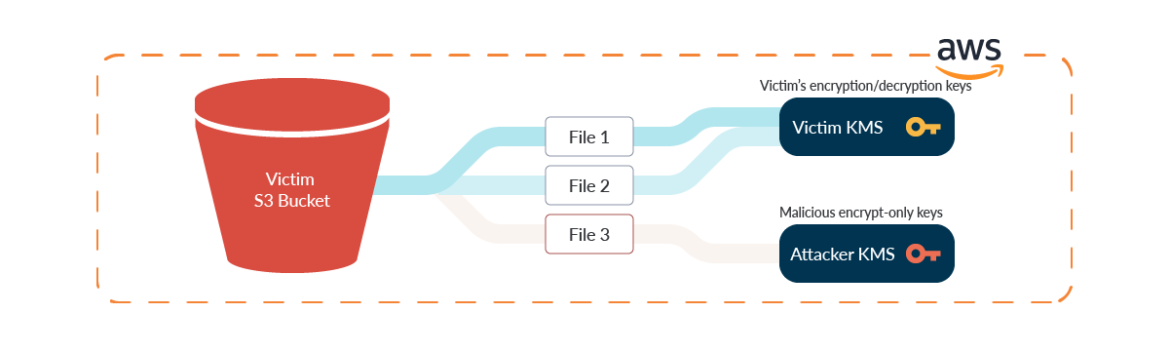

First, the attacker creates a custom key in an AWS account they control. This may be a disposable account created for this sole purpose, or another compromised account used to launch attacks.

The attacker then specifies which victim accounts may use the malicious key. When writing the key policy, the attacker omits kms:Decrypt and kms:DescribeKey. This prevents the victim from using the key to access their files, getting information about the key, or removing the attacker's key from their files.

An attacker's key policy might look like:

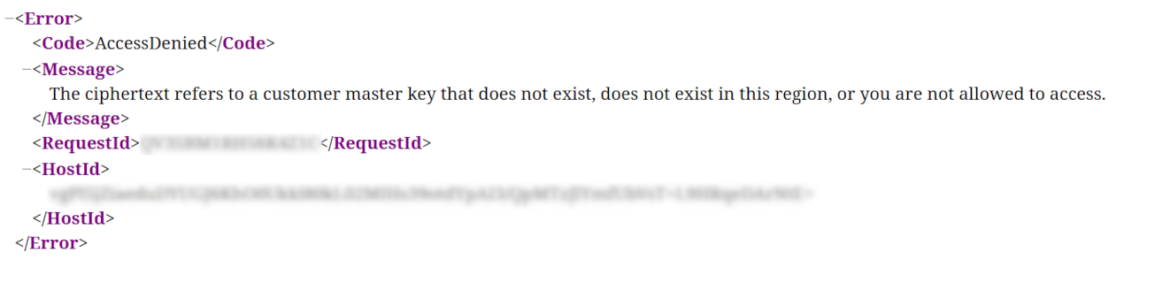
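To make the effect of such a policy concrete, here is a minimal Python sketch that inspects a key policy document and reports which of the two actions named above are not granted to a given principal. The policy contents, the statement ID, the account ID, and the helper name `missing_actions` are all hypothetical and chosen for illustration; they are not taken from the attacker policy shown in the image. The check also ignores Deny statements and conditions.

```python
from fnmatch import fnmatchcase

# The two actions the text says the attacker leaves out of the key policy.
REQUIRED_ACTIONS = {"kms:Decrypt", "kms:DescribeKey"}


def _as_list(value):
    """Policy fields may hold a single string or a list of strings."""
    return value if isinstance(value, list) else [value]


def missing_actions(key_policy: dict, principal_arn: str) -> set:
    """Return the REQUIRED_ACTIONS that no Allow statement grants to principal_arn.

    Simplified on purpose: Deny statements and Condition blocks are not evaluated.
    """
    granted = set()
    for stmt in _as_list(key_policy.get("Statement", [])):
        if stmt.get("Effect") != "Allow":
            continue
        principal = stmt.get("Principal", {})
        aws = principal if isinstance(principal, str) else principal.get("AWS", [])
        principals = _as_list(aws)
        if principal_arn not in principals and "*" not in principals:
            continue
        granted.update(_as_list(stmt.get("Action", [])))
    # Action names are case-insensitive and may contain wildcards such as "kms:*".
    return {
        action
        for action in REQUIRED_ACTIONS
        if not any(fnmatchcase(action.lower(), pattern.lower()) for pattern in granted)
    }


# Hypothetical policy: the victim account may encrypt with the key but not decrypt.
example_policy = {
    "Version": "2012-10-17",
    "Statement": [
        {
            "Sid": "HypotheticalEncryptOnly",
            "Effect": "Allow",
            "Principal": {"AWS": "arn:aws:iam::111122223333:root"},
            "Action": ["kms:Encrypt", "kms:GenerateDataKey", "kms:ReEncrypt*"],
            "Resource": "*",
        }
    ],
}
```

Calling `missing_actions(example_policy, "arn:aws:iam::111122223333:root")` returns both actions, which is the lockout condition described above.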

Victims attempting to access their files will receive an "Access Denied" message when access has been locked out:

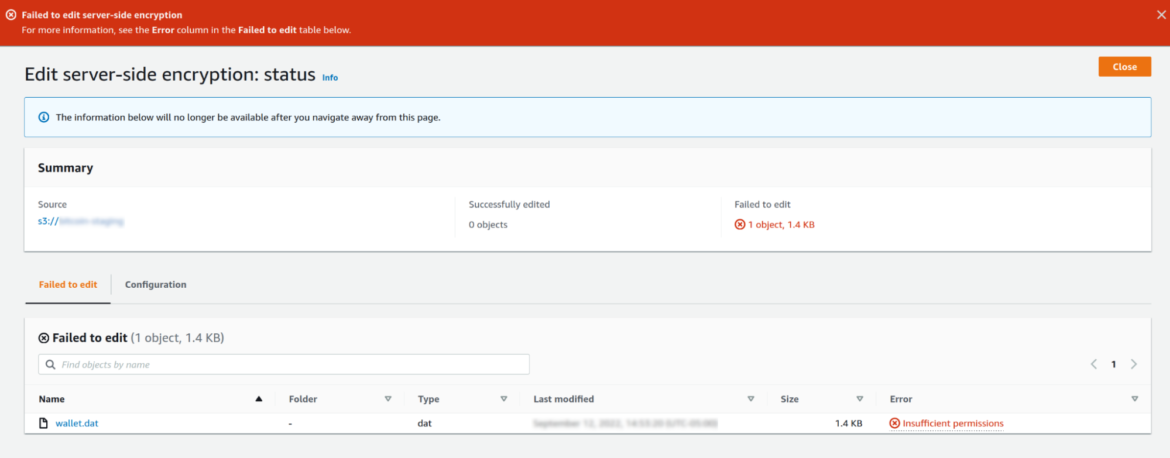

Attempts to remove foreign keys from locked files can result in errors:

Bucket versioning can help in this situation. Versioning is a feature of cloud storage to retain older copies of files and allow for rollback. By rolling back to a previous version of a file, account owners may be able to recover access to their files.
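As a rough illustration of the rollback idea, the Python sketch below picks the newest version of a file that was written before the lockout. It assumes version metadata has already been collected as a list of dictionaries with `VersionId` and `LastModified` fields (the shape boto3 returns from `list_object_versions`); the helper name `last_good_version`, the cutoff time, and the sample data are hypothetical.

```python
from datetime import datetime, timezone


def last_good_version(versions, locked_at):
    """Return the VersionId of the newest version written before locked_at.

    Returns None when no version predates the lockout.
    """
    candidates = [v for v in versions if v["LastModified"] < locked_at]
    if not candidates:
        return None
    return max(candidates, key=lambda v: v["LastModified"])["VersionId"]


# Hypothetical version history for one object.
sample_versions = [
    {"VersionId": "v1", "LastModified": datetime(2024, 1, 1, tzinfo=timezone.utc)},
    {"VersionId": "v2", "LastModified": datetime(2024, 1, 5, tzinfo=timezone.utc)},
    {"VersionId": "v3", "LastModified": datetime(2024, 1, 9, tzinfo=timezone.utc)},
]
```

With a lockout time of January 7, the function selects `v2`, the last copy written before the attacker re-encrypted the file.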

Detect extortion in AWS Storage

A smart attacker will want to disable bucket versioning and delete old copies of files. The Falco rule "AWS S3 Versioning Disabled" alerts account owners to attempts to disable bucket versioning, and such alerts should not be treated lightly.
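The kind of check involved can be sketched in a few lines of Python. This is not the Falco rule itself. It assumes a CloudTrail record already parsed into a dictionary, and that a versioning change appears as a `PutBucketVersioning` event whose request parameters carry a `VersioningConfiguration.Status` of `Suspended`; treat those field paths as assumptions to verify against your own logs.

```python
def is_versioning_disabled(event: dict) -> bool:
    """Return True if a CloudTrail record looks like an attempt to suspend S3 versioning."""
    if event.get("eventName") != "PutBucketVersioning":
        return False
    params = event.get("requestParameters") or {}
    config = params.get("VersioningConfiguration") or {}
    # "Suspended" is the state S3 uses once versioning has been turned off.
    return config.get("Status") == "Suspended"


# Hypothetical, heavily trimmed CloudTrail record.
sample_event = {
    "eventSource": "s3.amazonaws.com",
    "eventName": "PutBucketVersioning",
    "requestParameters": {
        "bucketName": "example-bucket",
        "VersioningConfiguration": {"Status": "Suspended"},
    },
}
```

Failed attempts are deliberately not filtered out here, since an attempt is worth an alert even when it does not succeed.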

To permanently cut off access through the victim's original keys, the attacker can schedule those keys for deletion. Keys cannot be deleted immediately. Rather, there is a minimum seven-day waiting period before a key is finally destroyed.

Account owners using Falco can be alerted by the rule "Schedule Key Deletion" when keys are scheduled for deletion, and can take immediate action to remedy the situation.
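Because the waiting period sets a hard deadline for cancelling the deletion, it can help to compute that deadline from the log record. The sketch below is a minimal Python example, assuming a parsed CloudTrail record for a `ScheduleKeyDeletion` call with `keyId` and `pendingWindowInDays` in its request parameters; the helper name and sample values are hypothetical.

```python
from datetime import datetime, timedelta, timezone


def key_deletion_deadline(event: dict):
    """Return (key_id, deadline) for a ScheduleKeyDeletion record, else None."""
    if event.get("eventSource") != "kms.amazonaws.com":
        return None
    if event.get("eventName") != "ScheduleKeyDeletion":
        return None
    params = event.get("requestParameters") or {}
    # KMS applies a 30-day window when the caller does not specify one.
    days = int(params.get("pendingWindowInDays", 30))
    scheduled_at = datetime.strptime(
        event["eventTime"], "%Y-%m-%dT%H:%M:%SZ"
    ).replace(tzinfo=timezone.utc)
    return params.get("keyId"), scheduled_at + timedelta(days=days)


# Hypothetical, heavily trimmed CloudTrail record.
sample_event = {
    "eventSource": "kms.amazonaws.com",
    "eventName": "ScheduleKeyDeletion",
    "eventTime": "2024-01-01T12:00:00Z",
    "requestParameters": {"keyId": "example-key-id", "pendingWindowInDays": 7},
}
```

For the sample record, the deadline falls seven days after the event time, which is the shortest window an attacker can request.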

Once the encryption has been changed, rollback copies are deleted, and original keys are destroyed, the victim is subject to demands from an attacker to restore access to their data.

If you want to know more, check out the 26 AWS security best practices to adopt in production.

Google Cloud Platform (GCP)

Unlike AWS, GCP is not susceptible to the same encryption key switching technique. Encryption is mandatory at the time of bucket creation and can be changed later, but it cannot be switched to an unencrypted state or to a key from another account.

GCP users can use a Google-managed key or supply their own key. Keys apply uniformly to all files in the bucket, so there is no risk of keys being changed on individual files.

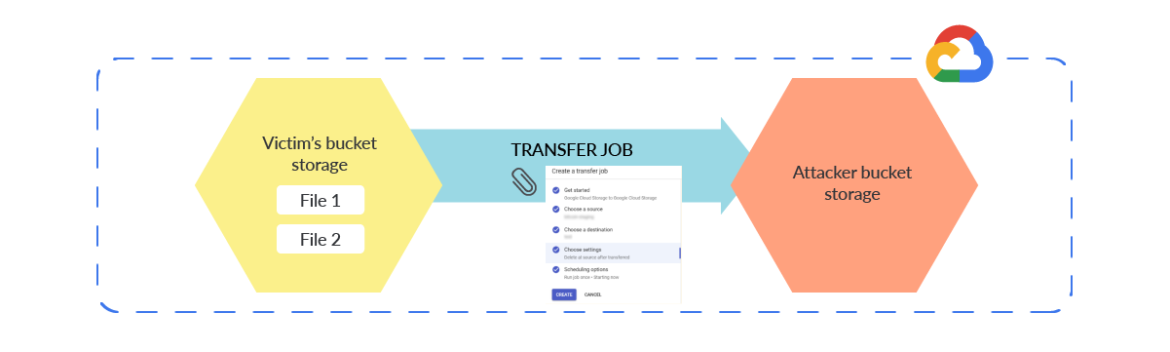

Rather than using the key switching scheme described for AWS, attackers could use a slower method to lock out access. They need to move the files out of the bucket, delete old copies, and optionally upload encrypted versions of the files as placeholders. Attackers can manually download the files or create a Transfer Job to do the work for them.

A Transfer Job is a function in GCP to copy data from one bucket to another, while optionally deleting the source files in the process. This can be used as a fast method for the attacker to steal data away from a victim. Once the files are transferred out and no longer available, the victim is subject to the whims of an attacker's demands to get the data back.

Detect extortion in GCP Storage

Unfortunately for GCP users, the only audit log message that indicates a Transfer Job has been initiated is a storage.setIamPermissions method call that grants the storage-transfer-service.iam.gserviceaccount.com service account the roles/storage.objectAdmin role so it can perform the transfer. The message is vague and easily missed, even by watchful eyes.

The Falco rule "GCP Set Bucket IAM Policy" can detect this and similar policy changes to buckets.
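To show what to look for in that audit log message, here is a small Python sketch, separate from the Falco rule, that scans a parsed audit log entry for a binding granted to the transfer service account. The nesting under `protoPayload.serviceData.policyDelta.bindingDeltas` is an assumption about the audit log layout and should be checked against real entries; the helper name, project number, and sample entry are hypothetical.

```python
# Domain of the service account named in the text.
TRANSFER_SA_DOMAIN = "storage-transfer-service.iam.gserviceaccount.com"


def transfer_service_grants(entry: dict) -> list:
    """Return (role, member) pairs added for the transfer service account."""
    payload = entry.get("protoPayload") or {}
    if payload.get("methodName") != "storage.setIamPermissions":
        return []
    service_data = payload.get("serviceData") or {}
    deltas = (service_data.get("policyDelta") or {}).get("bindingDeltas") or []
    return [
        (delta.get("role"), delta.get("member"))
        for delta in deltas
        if delta.get("action") == "ADD"
        and str(delta.get("member", "")).endswith(TRANSFER_SA_DOMAIN)
    ]


# Hypothetical, heavily trimmed audit log entry.
sample_entry = {
    "protoPayload": {
        "methodName": "storage.setIamPermissions",
        "serviceData": {
            "policyDelta": {
                "bindingDeltas": [
                    {
                        "action": "ADD",
                        "role": "roles/storage.objectAdmin",
                        "member": "serviceAccount:project-123@" + TRANSFER_SA_DOMAIN,
                    }
                ]
            }
        },
    }
}
```

An empty result means the entry is either a different method call or a policy change that does not involve the transfer service account.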

Similar to AWS, GCP also implements bucket versioning for the entire bucket. If bucket versioning is disabled, files will retain prior versions but will not create new ones.

Unfortunately for account owners, when Object Versioning is disabled or file versions are deleted, GCP's logs record only an "unspecified bucket change." This makes it difficult to be notified of potentially hazardous changes. If a file is deleted by an attacker, all previous versions of the file would also be deleted after some time.

Find more tips in 24 Google Cloud Platform (GCP) security best practices using open source code.

Microsoft Azure

Azure offers "Storage Accounts" for file storage needs. A Storage Account (bucket) has blobs (files) of data that are stored in containers (directories). The terminology is a bit different, but it operates much the same as AWS and GCP.

Azure enables blob encryption by default with a key provided by Microsoft. Like GCP, Azure does not allow the use of keys from foreign accounts.

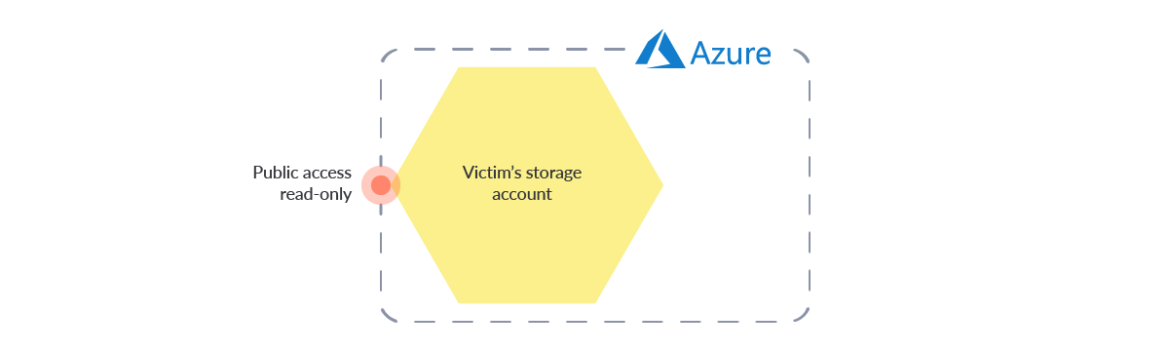

Features like soft delete and file versioning can help safeguard against the attacks described for AWS and GCP. However, one Azure default works against customers: storage accounts are publicly accessible with read access by default. This can expose Azure customers' data without their knowledge.

Publicly accessible blobs can leak credentials, sensitive information like Personally Identifiable Information (PII), or other data that should remain private. Leaked information is valuable to attackers, who can extort the original owners by threatening to leak it further. Tools like MicroBurst by NetSPI can be used to scan and search for storage accounts open to anonymous access.

Detect extortion in Azure Storage

Falco users can use the rule "Azure Access Level for Blob Container Set to Public" to alert on changes to storage account permissions.

Public read access is enabled by default and should be disabled with the web interface or with the Azure command line interface (CLI). Here is how to use the Azure CLI to disable public access:
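A minimal sketch of that step, written as a small Python helper so it can be scripted across several accounts. It only builds the `az storage account update` invocation with `--allow-blob-public-access false`; the helper name and the account and resource group names are placeholders, and running the command requires an installed and authenticated Azure CLI.

```python
def disable_public_access_cmd(account: str, resource_group: str) -> list:
    """Build the Azure CLI call that turns off anonymous blob access for an account."""
    return [
        "az", "storage", "account", "update",
        "--name", account,
        "--resource-group", resource_group,
        "--allow-blob-public-access", "false",
    ]


# Placeholder names; substitute your own storage account and resource group.
example_cmd = disable_public_access_cmd("examplestorage", "example-rg")

# To apply the change, pass the list to subprocess.run(example_cmd, check=True).
```

Building the argument list separately from executing it makes it easy to review the exact command before it touches a production account.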

Summary

Understanding your cloud environment's features is a key component in applying an effective security policy.

Extortion of cloud storage can easily cost more than implementing a proper security posture. Keeping a watchful eye on your environment allows you to respond effectively to security events before they spiral out of control.

Combining Cloud Security Posture Management (CSPM) compliance with real-time detection, so that no time is wasted reacting, prepares you for a wide range of scenarios.

Sysdig can help sift through long cloud logs to alert on potential security events as soon as possible, without the need to store costly data lakes.

In addition, Sysdig unifies cloud security posture management (CSPM) and cloud threat detection.