
While auditing the Kubernetes source code, I recently discovered an issue (CVE-2020-8566) in Kubernetes that may cause sensitive data leakage.

You are affected by CVE-2020-8566 if your Kubernetes cluster uses a ceph cluster as a storage class and the logging level of kube-controller-manager is set to four or above. In that case, your ceph user credentials are leaked in the kube-controller-manager’s log. Anyone with access to this log can read them and impersonate your ceph user, compromising your stored data.

In this article, you’ll learn how this issue works, which parts of Kubernetes are affected, and how to mitigate it.

Preliminary

Let’s start by listing the Kubernetes components that either cause, or are impacted by the issue.

- Kube-controller-manager: The Kubernetes controller manager bundles the core controllers that watch for state updates and change the cluster accordingly. The controllers that currently ship with Kubernetes include:

  - Replication controller: Maintains the correct number of pods for every replication controller object in the system.

  - Node controller: Monitors changes to the nodes.

  - Endpoints controller: Populates the endpoints object, which joins service objects and pod objects.

  - Service accounts and token controller: Manages service accounts and tokens for namespaces.

- Secret: Kubernetes Secrets let you store and manage sensitive information, such as passwords, OAuth tokens, and ssh keys. Storing confidential information in a Secret is safer and more flexible than putting it verbatim in a Pod definition or in a container image.

- StorageClass: A StorageClass provides a way for administrators to describe the “classes” of storage they offer. Different classes might map to quality-of-service levels, backup policies, or to arbitrary policies determined by the cluster administrators.

- PersistentVolumeClaim: A PersistentVolumeClaim (PVC) is a request for storage by a user. It is similar to a Pod. Pods consume node resources and PVCs consume PV resources. Pods can request specific levels of resources (CPU and Memory). Claims can request specific size and access modes.

The CVE-2020-8566 issue

The issue with CVE-2020-8566 is as follows: If you have a Kubernetes cluster using a ceph cluster as storage and the kube-controller-manager logging level is set to four or above, then when a PVC is created referencing the ceph storage class, the ceph credentials will be logged by kube-controller-manager.

The recording below demonstrates how to obtain the leaked ceph cluster admin credentials:

The impact of CVE-2020-8566

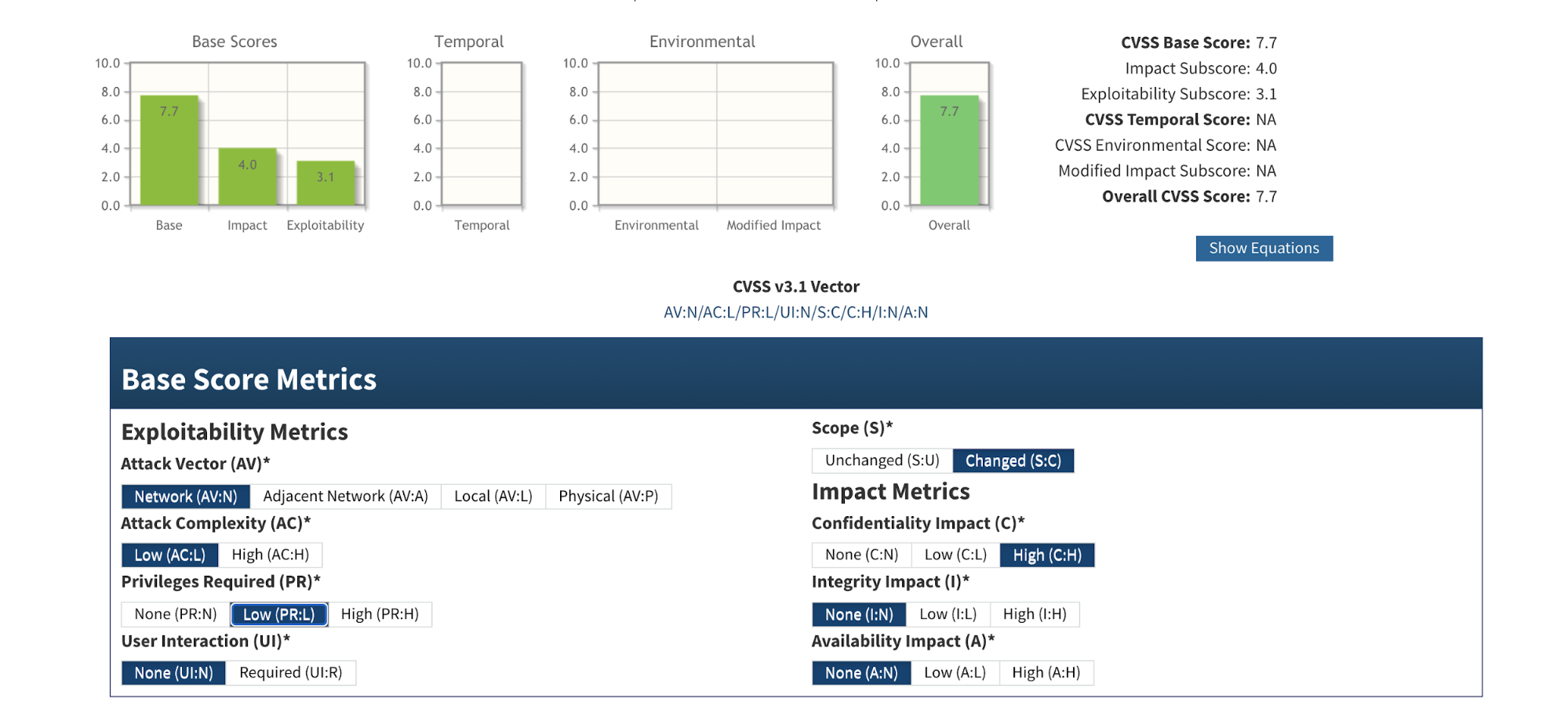

Under the CVSS system, this issue scores 5.3, which is medium severity.

This takes into account that, in order to make the secret leak happen, the attacker needs to find a way to set the log level of kube-controller-manager to four or above. As the exploit requires privileged access to the node where the component resides, the severity is only medium.

However, what if the log level of kube-controller-manager was already set to four or above? For example, perhaps someone increased log verbosity (via kubeadm) to help with troubleshooting.

Then, the CVSS score of this CVE increases to 7.7, which is high severity.

In this case, there’s no need for special access. Everyone with access to the kube-controller-manager’s log is able to view the secrets.

To summarize, the impacted Kubernetes clusters have the following configurations:

- Kubernetes clusters use a ceph cluster for storage.

- The logging level of kube-controller-manager is set to four or above.

- At least one PVC has been created referencing the storage class from the ceph cluster.
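The three conditions above can be checked mechanically. The following is a minimal Python sketch, not an official detection tool. It assumes the StorageClass and PVC objects have been loaded as dictionaries shaped like the items returned by `kubectl get storageclass -o json` and `kubectl get pvc -A -o json`, and that the ceph storage class uses the in-tree `kubernetes.io/rbd` provisioner. The function name `exposed_claims` and the `RBD_PROVISIONER` constant are hypothetical names chosen for illustration.

```python
from typing import Any, Dict, Iterable, List

# Assumption: the ceph storage class is backed by the in-tree RBD provisioner.
RBD_PROVISIONER = "kubernetes.io/rbd"


def exposed_claims(
    storage_classes: Iterable[Dict[str, Any]],
    claims: Iterable[Dict[str, Any]],
    log_level: int,
    threshold: int = 4,
) -> List[str]:
    """Return "namespace/name" for each PVC that meets all three conditions."""
    # Condition 2: kube-controller-manager must log at level four or above.
    if log_level < threshold:
        return []

    # Condition 1: collect the names of storage classes backed by ceph.
    ceph_classes = {
        sc["metadata"]["name"]
        for sc in storage_classes
        if sc.get("provisioner") == RBD_PROVISIONER
    }

    # Condition 3: keep the PVCs that reference one of those storage classes.
    return [
        f'{pvc["metadata"].get("namespace", "default")}/{pvc["metadata"]["name"]}'
        for pvc in claims
        if pvc.get("spec", {}).get("storageClassName") in ceph_classes
    ]
```

An empty result means that, under these assumptions, no PVC currently matches all three conditions. It does not prove that credentials were never logged in the past.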

Overall, a secret stored in clear text in the log archive is dangerous, as it could be exposed to any user or application with access to the log archives. Anyone who obtains the admin credentials can act on behalf of the ceph cluster admin user.

Mitigating CVE-2020-8566

If you’re impacted by this CVE, you should update your ceph admin password immediately. Once the credentials are rotated, the leaked ones can no longer be used to access your ceph storage cluster.

Even if you believe the log level of kube-controller-manager is at its default value, you should check whether Kubernetes components like kube-apiserver and kube-controller-manager are being started with verbose logging.
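As a rough illustration of that check, the Python sketch below parses a component’s command line and reports whether its verbosity flag is at four or above. It assumes the verbosity is passed through the `-v` or `--v` flag that Kubernetes components accept, in either `--v=4` or `--v 4` form. The helper names `klog_verbosity` and `is_verbose` are hypothetical, and how you obtain the command line (for example, from a static pod manifest or a process listing) is left out.

```python
import shlex
from typing import List, Optional


def klog_verbosity(args: List[str]) -> Optional[int]:
    """Return the value of -v/--v in an argument list, or None if it is absent."""
    level = None
    i = 0
    while i < len(args):
        name, sep, value = args[i].partition("=")
        if name in ("-v", "--v"):
            # Handle the space-separated form: "--v 4".
            if not sep and i + 1 < len(args):
                value = args[i + 1]
                i += 1
            if value.isdigit():
                level = int(value)  # if the flag repeats, keep the last value
        i += 1
    return level


def is_verbose(command_line: str, threshold: int = 4) -> bool:
    """True if the command line sets a log verbosity at or above the threshold."""
    level = klog_verbosity(shlex.split(command_line))
    return level is not None and level >= threshold
```

For instance, `is_verbose("kube-controller-manager --leader-elect=true --v=4")` evaluates to `True`, while the same command line with `--v=2` or with no verbosity flag evaluates to `False`.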

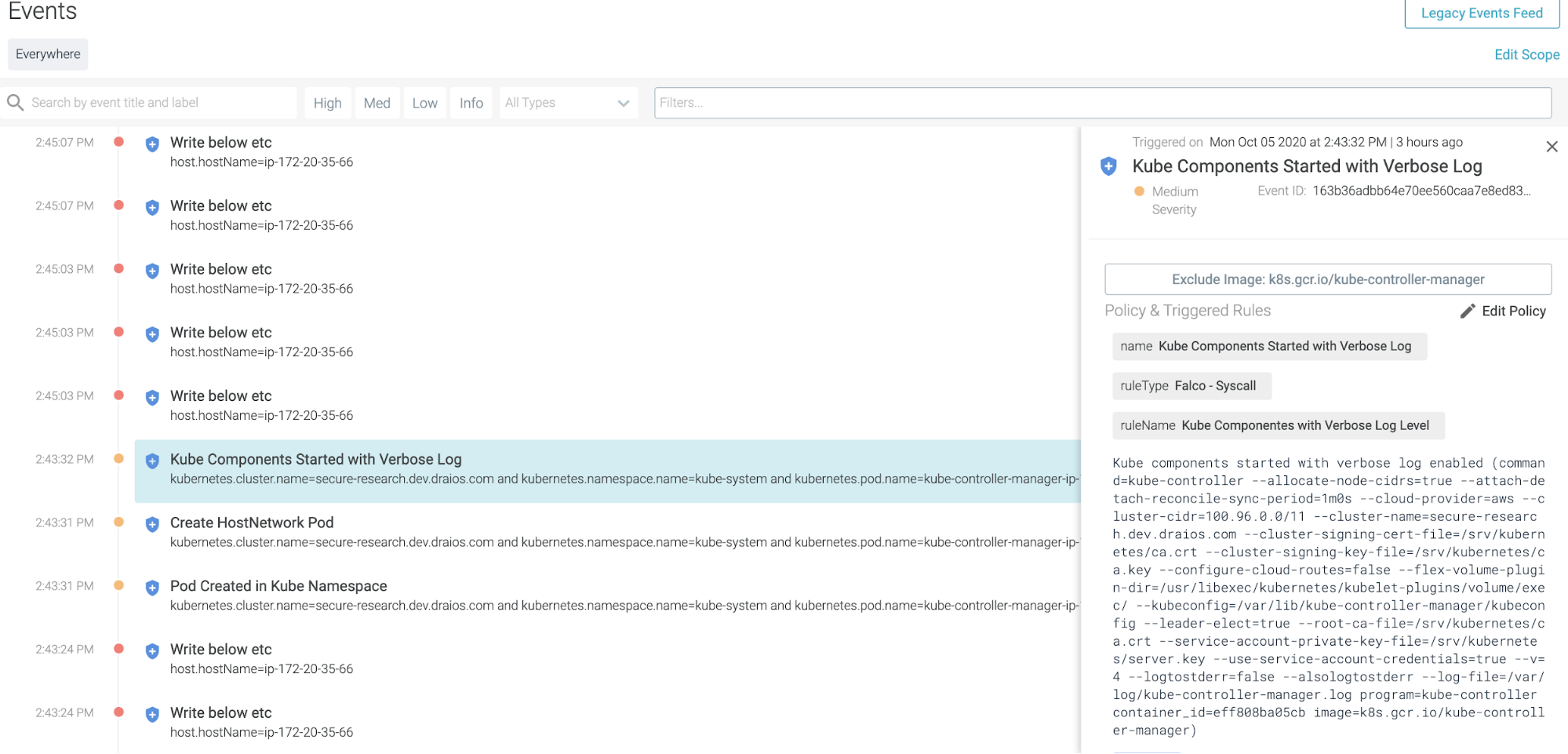

Falco, a CNCF incubating project, can help detect anomalous activities in cloud native environments. The following Falco rule can help you detect if you are impacted by CVE-2020-8566:

This rule will detect Kubernetes components starting with a verbose log.

The security event output will look like this in Sysdig Secure (which uses Falco underneath):

Conclusion

You might be following the best practices to harden the kube-apiserver, as it is the brain of the Kubernetes cluster, and also etcd, as it stores all of the critical information about the Kubernetes cluster. However, if there is one thing CVE-2020-8566 teaches us, it’s that we shouldn’t stop there as we need to secure every Kubernetes component.

Sysdig Secure can help you benchmark your Kubernetes cluster and check whether it’s compliant with security standards like PCI or NIST. Try it today!