
Infrastructure as Code (IaC) is a powerful mechanism to manage your infrastructure, but with great power comes great responsibility. If your IaC files have security problems (for example, a misconfigured permission caused by a typo), those problems propagate along your CI/CD pipeline until they are, hopefully, discovered at runtime, which is where most security issues are scanned for and found. What if you could fix potential security issues in your infrastructure at the source?

What is Infrastructure as Code?

IaC is a methodology of treating the building blocks of your infrastructure (virtual machines, networking, containers, etc.) as code using different techniques and tools. Instead of manually creating VMs, containers, networks, or storage via your favorite infrastructure provider's web interface, you define them as code, and the tools you choose (Terraform, Crossplane, Pulumi, etc.) create, update, and manage them.

The benefits are huge. You can manage your infrastructure as if it were code (it _is_ code now) and apply your development best practices (automation, testing, traceability, version control, etc.) to your infrastructure assets. There is a wealth of information on this topic, and the following resource is a good starting point.

Why is securing your Infrastructure as Code assets important as an additional security layer?

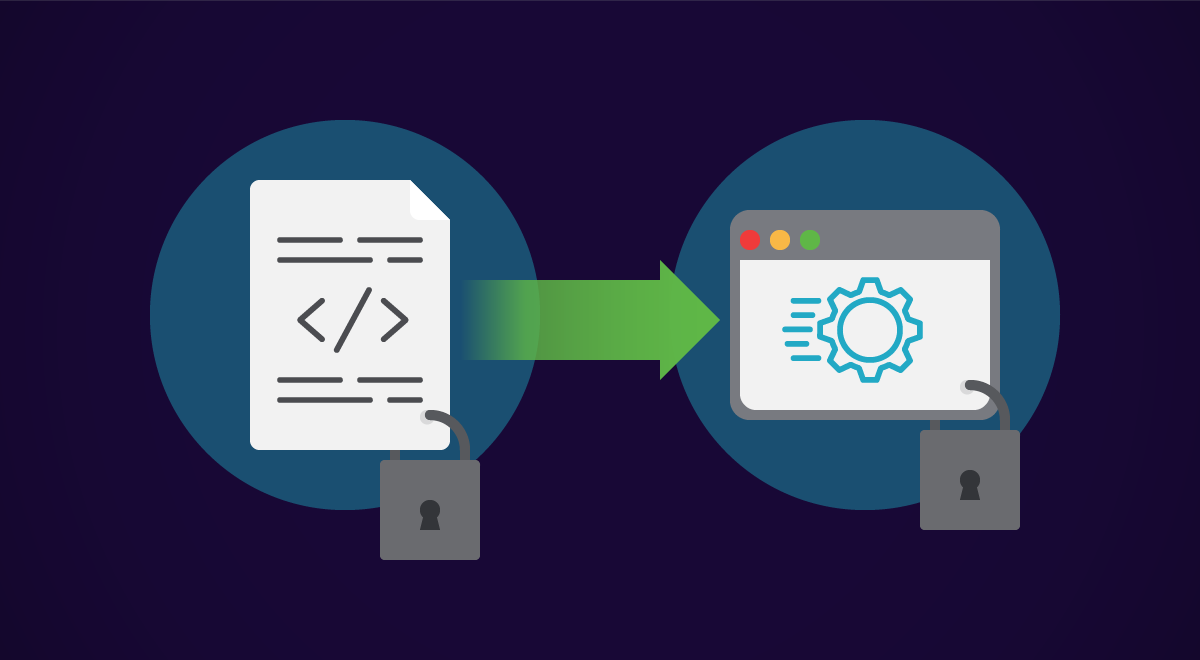

Most security tools detect potential vulnerabilities and issues at runtime, which is too late. In order to fix them, either a reactive manual process needs to be performed (for example, directly modifying a parameter in your k8s object with kubectl edit) or ideally, the fix will happen at source and then it will be propagated all along your supply chain. This is what is called "Shift Security Left." Move from fixing the problem when it is too late to fixing it before it happens. This principle is at the core of the Cloud Native Application Protection Platform (CNAPP) concept.

According to Red Hat's "2022 state of Kubernetes security report," 57% of respondents worry the most about the runtime phase of the container life cycle. But wouldn't it be better if those potential issues could be discovered directly in the code definition instead?

Introducing Sysdig Git Infrastructure as Code Scanning

Based on the current "CIS Kubernetes" and "Sysdig K8s Best Practices" benchmarks, Sysdig Secure scans your Infrastructure as Code manifests at the source. Currently, it supports scanning YAML, Kustomize, Helm, or Terraform files representing Kubernetes workloads (stay tuned for future releases), and it integrates seamlessly with your development workflow by showing potential issues directly in the pull requests on repositories hosted in GitHub, GitLab, Bitbucket, or Azure DevOps. See more information in the official documentation.

As a proof of concept, let's see it in action in a small EKS cluster using the example guestbook application as our "Infrastructure as Code," where we will also apply GitOps practices to manage our application lifecycle with ArgoCD.

This is a proof of concept of a GitOps integration with Sysdig IaC scanning. The versions used in this PoC are ArgoCD 2.4.0, Sysdig Agent 12.8.0, and Sysdig Charts v1.0.3.

NOTE: Want to know more about GitOps? See How to apply security at the source using GitOps.

Preparations

This is what our EKS cluster (created with "eksctl create cluster -n edu --region eu-central-1 --node-type m4.xlarge --nodes 2") looks like:

❯ kubectl get nodes
NAME                                          STATUS   ROLES    AGE    VERSION
ip-10-0-2-210.eu-central-1.compute.internal   Ready    <none>   108s   v1.20.15-eks-99076b2
ip-10-0-3-124.eu-central-1.compute.internal   Ready    <none>   2m4s   v1.20.15-eks-99076b2

Installing Argo CD is as easy as following the instructions in the official documentation:

❯ kubectl create namespace argocd

❯ kubectl apply -n argocd -f https://raw.githubusercontent.com/argoproj/argo-cd/v2.4.0/manifests/install.yaml

With Argo in place, let's create our example application. We will leverage the example guestbook Argo CD application already available at https://github.com/argoproj/argocd-example-apps.git by creating our own fork directly on GitHub:

Or, using the GitHub CLI tool:

❯ cd ~/git

❯ gh repo fork https://github.com/argoproj/argocd-example-apps.git --clone

✓ Created fork e-minguez/argocd-example-apps

Cloning into 'argocd-example-apps'...

...

From github.com:argoproj/argocd-example-apps

* [new branch] master -> upstream/master

✓ Cloned fork

Now, configure Argo CD to deploy our application in our k8s cluster via the web interface. To access the Argo CD web interface, we need the admin password (it is randomized at installation time) and a way to reach the server from outside the cluster. In this example, a port-forward is used:

❯ kubectl -n argocd get secret argocd-initial-admin-secret -o jsonpath="{.data.password}" | base64 -d; echo

s8ZzBlGRSnPbzmtr

❯ kubectl port-forward svc/argocd-server -n argocd 8080:443 &

Then, we can access the Argo CD UI by pointing a web browser to https://localhost:8080 (the server uses a self-signed certificate by default, so expect a browser warning):

Or, using Kubernetes objects directly:

❯ cat << EOF | kubectl apply -f -
apiVersion: argoproj.io/v1alpha1
kind: Application
metadata:
  name: my-example-app
  namespace: argocd
spec:
  destination:
    namespace: my-example-app
    server: https://kubernetes.default.svc
  project: default
  source:
    path: guestbook/
    repoURL: https://github.com/e-minguez/argocd-example-apps.git
    targetRevision: HEAD
  syncPolicy:
    automated: {}
    syncOptions:
    - CreateNamespace=true
EOF

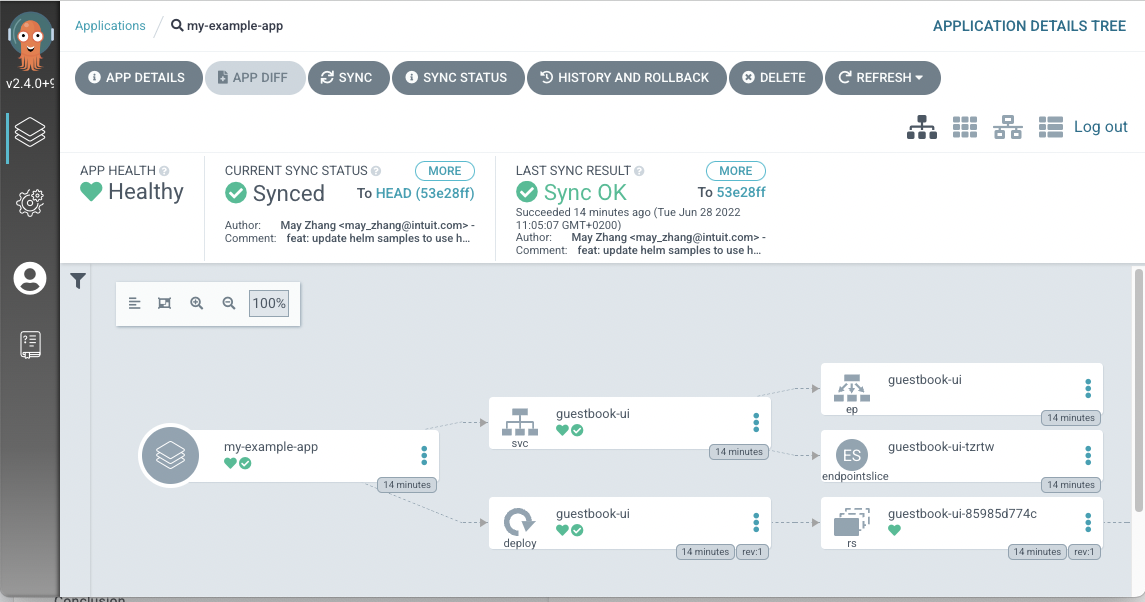

After a few moments, Argo will deploy your application from your git repository, including all the objects:

❯ kubectl get all -n my-example-app
NAME                                READY   STATUS    RESTARTS   AGE
pod/guestbook-ui-85985d774c-n7dzw   1/1     Running   0          14m

NAME                   TYPE        CLUSTER-IP      EXTERNAL-IP   PORT(S)   AGE
service/guestbook-ui   ClusterIP   172.20.217.82   <none>        80/TCP    14m

NAME                           READY   UP-TO-DATE   AVAILABLE   AGE
deployment.apps/guestbook-ui   1/1     1            1           14m

NAME                                      DESIRED   CURRENT   READY   AGE
replicaset.apps/guestbook-ui-85985d774c   1         1         1       14m
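Besides listing the workload objects, you can ask Argo CD itself whether the application is in sync. The following is a minimal sketch that is not part of the original walkthrough: "argo_app_status" is a hypothetical helper name, and it assumes Argo CD lives in the "argocd" namespace as installed above. It reads the "status.sync.status" and "status.health.status" fields of the Application resource, which typically report "Synced" and "Healthy" once reconciliation has finished.

```shell
#!/bin/sh
# Hypothetical helper (not from the original article): print
# "<sync status> <health status>" for an Argo CD Application.
# Assumes Argo CD is installed in the "argocd" namespace.
argo_app_status() {
  # Use the fully qualified resource name so the lookup cannot be
  # confused with another CRD that is also called "application".
  kubectl -n argocd get applications.argoproj.io "$1" \
    -o jsonpath='{.status.sync.status} {.status.health.status}'
}

# Example usage (requires access to the cluster):
#   argo_app_status my-example-app
```

Because the helper only prints what the API server returns, it can be polled in a loop or used as a simple gate in a pipeline before running further checks.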

Success!

The application defined as code is already running in the Kubernetes cluster, and the deployment has been automated using GitOps practices. However, we didn't take any security aspect into consideration. Is the definition of our application secure enough? Did we miss anything? Let's see what we can find out.

Configuring Sysdig Secure to scan our new shiny repository is as easy as adding a new git repository integration:

Pull request policy evaluation

Now, let's see it in action. Create a pull request with some code changes, for example to increase the number of replicas from 1 to 2:

Or, by using the CLI:

❯ git switch -c my-first-pr

Switched to a new branch 'my-first-pr'

❯ sed -i -e 's/ replicas: 1/ replicas: 2/g' guestbook/guestbook-ui-deployment.yaml

❯ git add guestbook/guestbook-ui-deployment.yaml

❯ git commit -m 'Added more replicas'

[my-first-pr c67695e] Added more replicas

1 file changed, 1 insertion(+), 1 deletion(-)

❯ git push

Enumerating objects: 7, done.

…
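The "sed" one-liner above depends on the exact whitespace and on the current value being 1. As a hedged alternative, here is a small sketch of a more tolerant substitution. "set_replicas" is a hypothetical helper name, and the approach is still a plain text edit, not a YAML-aware one, so it changes every "replicas:" line in the file.

```shell
#!/bin/sh
# Hypothetical helper (not from the original article): print a manifest
# with every "replicas: <n>" line rewritten to the requested count.
# Usage: set_replicas <file> <new-count>
set_replicas() {
  # Reject anything that is not a non-negative integer.
  case "$2" in
    ''|*[!0-9]*)
      echo "replica count must be a non-negative integer" >&2
      return 1
      ;;
  esac
  # Match "replicas:" at any indentation followed by any integer, so the
  # edit does not depend on exact spacing or on the previous value.
  sed -E "s/^([[:space:]]*replicas:)[[:space:]]*[0-9]+[[:space:]]*\$/\\1 $2/" "$1"
}

# Example usage: write to a temporary file first, because redirecting the
# output straight back to the input file would truncate it.
#   set_replicas guestbook/guestbook-ui-deployment.yaml 2 > /tmp/deployment.yaml
#   mv /tmp/deployment.yaml guestbook/guestbook-ui-deployment.yaml
```

Printing to standard output instead of editing in place keeps the helper portable between GNU and BSD "sed", whose "-i" options behave differently.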

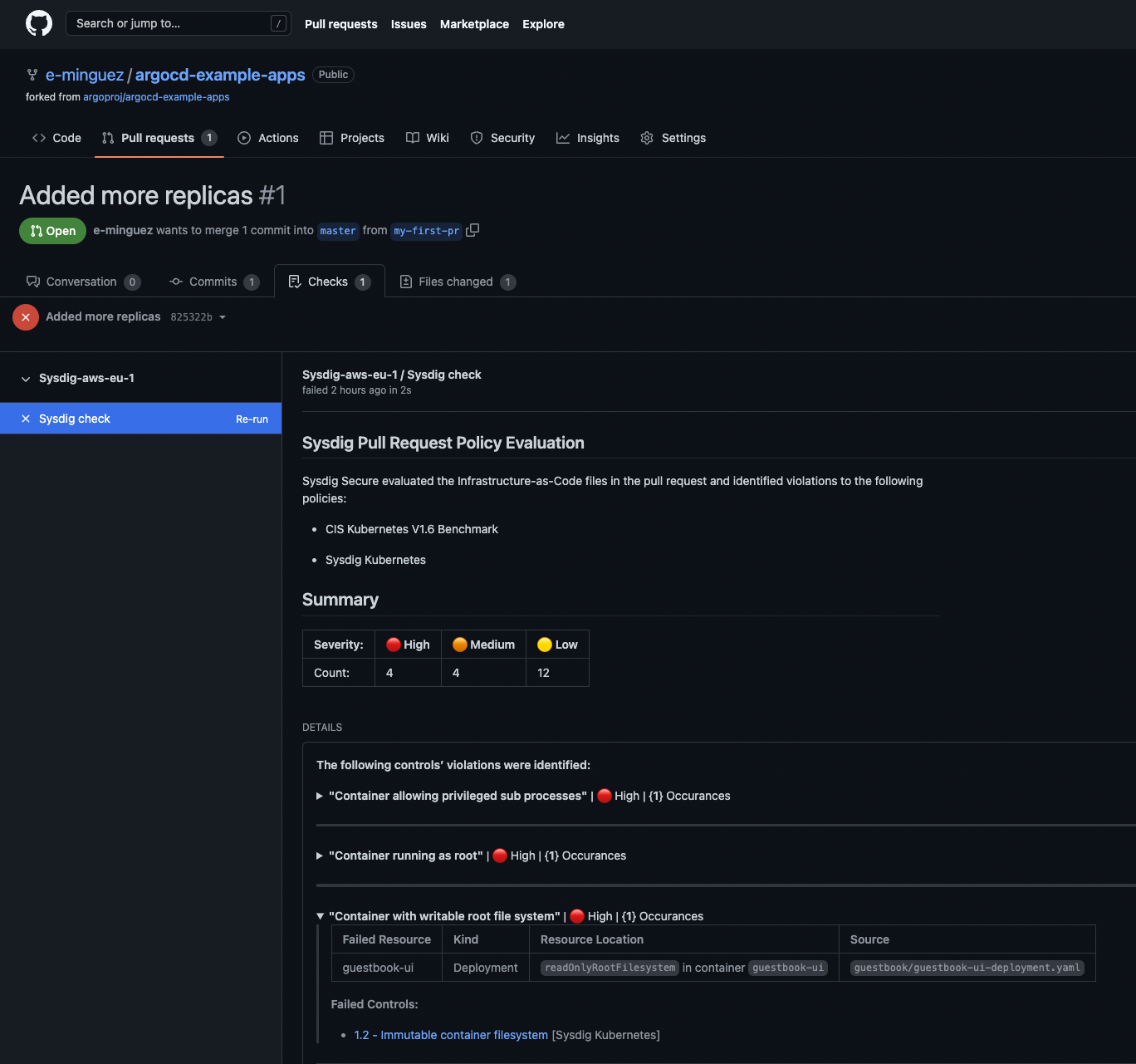

Almost immediately, Sysdig Secure will scan the repository folder and report potential issues:

Here, you can see some potential issues, ordered by severity, based on the CIS Kubernetes V1.6 benchmark as well as the Sysdig Kubernetes best practices. One example is "Container with writable root file system," found in the deployment file of our example application.
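To make that finding concrete: in Kubernetes, a container's root file system is read-only when its "securityContext" sets "readOnlyRootFilesystem: true". The sketch below is a deliberately naive text check, not how Sysdig Secure evaluates the control. "has_readonly_rootfs" is a hypothetical helper name, and a plain "grep" cannot tell which container in a multi-container pod carries the setting.

```shell
#!/bin/sh
# Hypothetical helper (not from the original article): succeed only if
# the manifest contains a line setting readOnlyRootFilesystem to true.
# This is a text match, so it does not verify that *every* container
# in the manifest has the setting.
has_readonly_rootfs() {
  grep -Eq '^[[:space:]]*readOnlyRootFilesystem:[[:space:]]*true[[:space:]]*$' "$1"
}

# Example usage as a rough local check before opening a pull request:
#   if ! has_readonly_rootfs guestbook/guestbook-ui-deployment.yaml; then
#     echo "container root file system is writable" >&2
#   fi
```

A real scanner parses the manifest and evaluates each container separately. The point here is only to show which field the finding refers to.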

You can apply those recommendations by modifying your source code, but why don't we make Sysdig Secure do it for you?

Remediating the issues at source automagically

Let's deploy the Sysdig Secure agent in our cluster via Helm so it can inspect the objects running there, including a couple of new flags to enable the KSPM features.

❯ helm repo add sysdig https://charts.sysdig.com

❯ helm repo update

❯ export SYSDIG_ACCESS_KEY="XXX"

❯ export SAAS_REGION="eu1"

❯ export CLUSTER_NAME="mycluster"

❯ export COLLECTOR_ENDPOINT="ingest-eu1.app.sysdig.com"

❯ export API_ENDPOINT="eu1.app.sysdig.com"

❯ helm install sysdig sysdig/sysdig-deploy \
    --namespace sysdig-agent \
    --create-namespace \
    --set global.sysdig.accessKey="${SYSDIG_ACCESS_KEY}" \
    --set global.sysdig.region="${SAAS_REGION}" \
    --set global.clusterConfig.name="${CLUSTER_NAME}" \
    --set agent.sysdig.settings.collector="${COLLECTOR_ENDPOINT}" \
    --set nodeAnalyzer.nodeAnalyzer.apiEndpoint="${API_ENDPOINT}" \
    --set global.kspm.deploy=true

# after a few moments

❯ kubectl get po -n sysdig-agent

NAME READY STATUS RESTARTS AGE

nodeanalyzer-node-analyzer-bw5t5 4/4 Running 0 9m14s

nodeanalyzer-node-analyzer-ccs8d 4/4 Running 0 9m5s

sysdig-agent-8sshw 1/1 Running 0 5m4s

sysdig-agent-smm4c 1/1 Running 0 9m16s

sysdig-kspmcollector-5f65cb87bb-fs78l 1/1 Running 0 9m22s
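The "helm install" command above reads several environment variables, and an unset one silently becomes an empty chart value. As a small, optional safeguard (a sketch, not part of the original walkthrough), a hypothetical "require_vars" helper can verify that they are all set before Helm runs.

```shell
#!/bin/sh
# Hypothetical helper (not from the original article): return non-zero
# if any of the named environment variables is unset or empty.
# Usage: require_vars NAME [NAME...]
require_vars() {
  rv_missing=0
  for rv_name in "$@"; do
    # Indirect lookup of the variable whose name is in rv_name.
    # The names come from the script author, not from untrusted input.
    eval "rv_value=\${${rv_name}:-}"
    if [ -z "${rv_value}" ]; then
      echo "missing required variable: ${rv_name}" >&2
      rv_missing=1
    fi
  done
  return "${rv_missing}"
}

# Example usage before running "helm install":
#   require_vars SYSDIG_ACCESS_KEY SAAS_REGION CLUSTER_NAME \
#     COLLECTOR_ENDPOINT API_ENDPOINT || exit 1
```

The helper reports every missing variable in one pass instead of stopping at the first, which saves a few round trips when preparing a new shell session.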

As an exercise for the reader, this step can be achieved the GitOps way using Argo CD with the Helm chart.

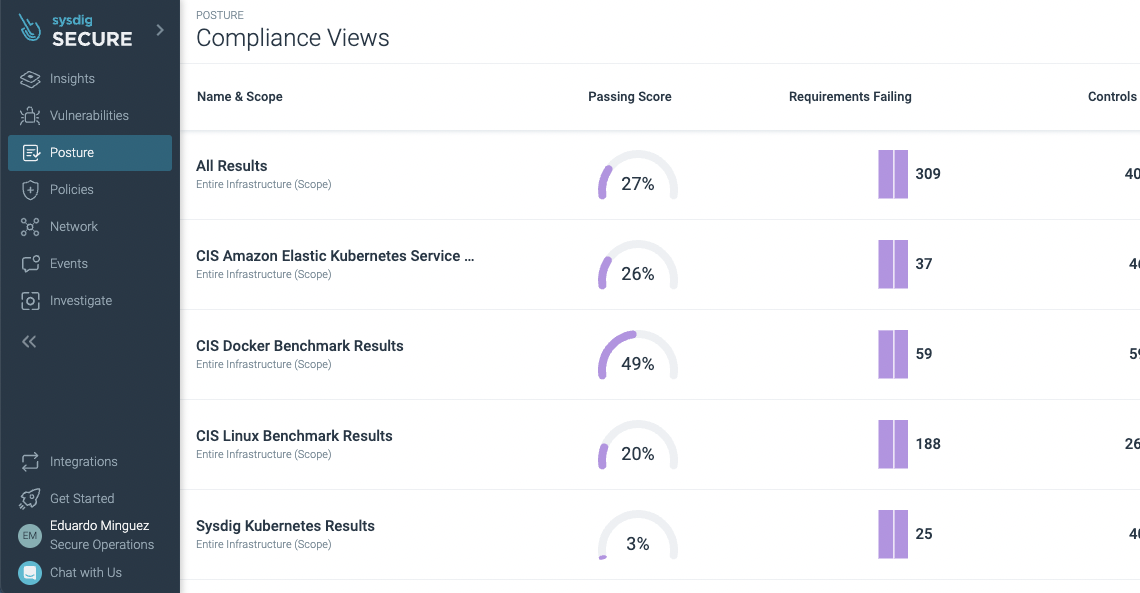

After a few minutes, the agent is deployed and has reported back the Kubernetes status in the new Posture -> "Actionable Compliance" section where the security requirements can be observed:

Let's fix the "Container Image Pull" policy control (see the official documentation for the detailed list of policy controls available).

There, you can see the remediation proposal, a Kubernetes patch, and a "Setup Pull Request" section. But will it really open a pull request for us?

Indeed! Sysdig Secure is now also able to compare the source and the runtime status of your Kubernetes objects and can even fix it for you, from source to run.

There's no need for complex operations or manual fixes that create snowflakes. Instead, fix it at the source.

Final thoughts

Adding IaC scanning, security, and compliance mechanisms to your toolbox will help your organization find and fix potential security issues directly at the source (shifting security left) of your supply chain. Sysdig Secure can even create the remediation directly for you!

Get started now with a free trial and see for yourself.